We’re excited to announce two new capabilities in Amazon SageMaker Studio that can speed up iterative development for machine learning (ML) practitioners: Local Mode and Docker support. ML model development often involves slow iteration cycles as developers switch between coding, training, and deployment. Each step requires waiting for remote compute resources to start up, which delays validating implementations and getting feedback on changes.

With Local Mode, developers can now train and test models, debug code, and validate end-to-end pipelines directly on their SageMaker Studio notebook instance without spinning up remote compute resources. This reduces the iteration cycle from minutes down to seconds, boosting developer productivity. Docker support in SageMaker Studio notebooks enables developers to build Docker containers and access pre-built containers, providing a consistent development environment across the team and avoiding time-consuming setup and dependency management.

Local Mode and Docker support offer a streamlined workflow for validating code changes and prototyping models using local containers running on a SageMaker Studio notebook instance. In this post, we guide you through setting up Local Mode in SageMaker Studio, running a sample training job, and deploying the model on an Amazon SageMaker endpoint from a SageMaker Studio notebook.

SageMaker Studio Local Mode

SageMaker Studio introduces Local Mode, enabling you to run SageMaker training, inference, batch transform, and processing jobs directly on your JupyterLab, Code Editor, or SageMaker Studio Classic notebook instances without requiring remote compute resources. Benefits of using Local Mode include:

- Instant validation and testing of workflows right within integrated development environments (IDEs)

- Faster iteration through local runs for smaller-scale jobs to inspect outputs and identify issues early

- Improved development and debugging efficiency by eliminating the wait for remote training jobs

- Immediate feedback on code changes before running full jobs in the cloud

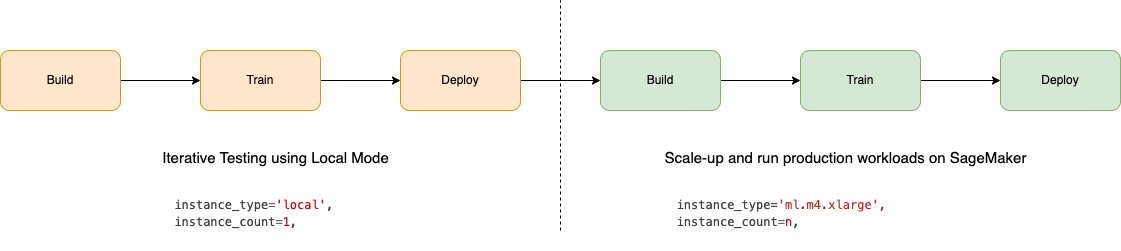

The following figure illustrates the workflow using Local Mode on SageMaker.

To use Local Mode, set instance_type="local" when running SageMaker Python SDK jobs such as training and inference. This runs them on the instances used by your SageMaker Studio IDEs instead of provisioning cloud resources.
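As a minimal sketch of that setting, the snippet below collects the keyword arguments for a scikit-learn estimator from the SageMaker Python SDK and pins the instance type to local. The helper names, the framework version 1.2-1, and the example script, role, and data path are assumptions for illustration and do not come from the post.

```python
def local_estimator_kwargs(entry_point, role, framework_version="1.2-1"):
    """Collect keyword arguments for an SDK estimator that runs in Local Mode."""
    return {
        "entry_point": entry_point,              # hypothetical training script
        "role": role,                            # IAM role ARN used by the SDK
        "framework_version": framework_version,  # assumed scikit-learn container version
        "instance_count": 1,
        "instance_type": "local",                # run in a local container, not in the cloud
    }


def train_locally(entry_point, role, train_uri):
    """Start a Local Mode training job; needs the sagemaker package and Docker."""
    # Imported lazily so the helper above can be used without the SDK installed.
    from sagemaker.sklearn import SKLearn

    estimator = SKLearn(**local_estimator_kwargs(entry_point, role))
    estimator.fit({"train": train_uri})
    return estimator
```

A call such as train_locally("train.py", role_arn, "file://./data") would then train inside a container on the Studio instance. Switching instance_type to a cloud instance type is the only change needed to run the same job remotely.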

Although certain capabilities such as distributed training are only available in the cloud, Local Mode removes the need to switch contexts for quick iterations. When you’re ready to take advantage of the full power and scale of SageMaker, you can seamlessly run your workflow in the cloud.

Docker support in SageMaker Studio

SageMaker Studio now also enables building and running Docker containers locally on your SageMaker Studio notebook instance. This new feature allows you to build and validate Docker images in SageMaker Studio before using them for SageMaker training and inference.

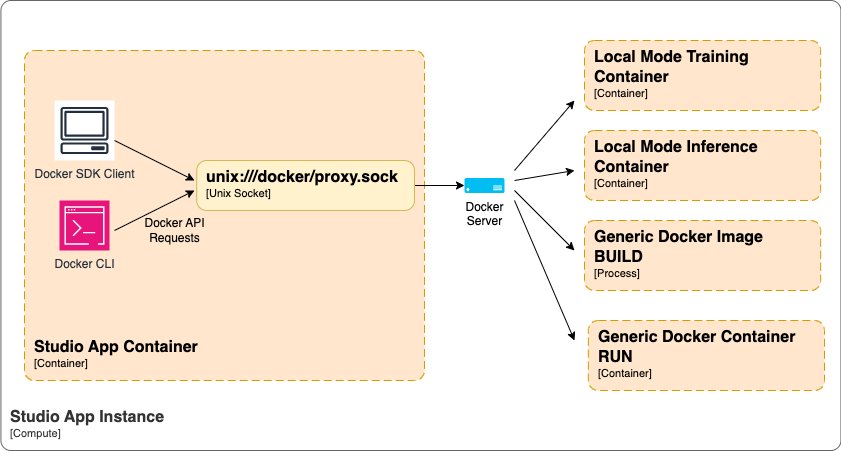

The following diagram illustrates the high-level Docker orchestration architecture within SageMaker Studio.

With Docker support in SageMaker Studio, you can:

- Build Docker containers with integrated models and dependencies directly within SageMaker Studio

- Eliminate the need for external Docker build processes to simplify image creation

- Run containers locally to validate functionality before deploying models to production

- Reuse local containers when deploying to SageMaker for training and hosting

Although some advanced Docker capabilities like multi-container and custom networks are not supported as of this writing, the core build and run functionality is available to accelerate developing containers for bring your own container (BYOC) workflows.

Prerequisites

To use Local Mode in SageMaker Studio applications, you must complete the following prerequisites:

- For pulling images from Amazon Elastic Container Registry (Amazon ECR), the account hosting the ECR image must provide access permission to the user’s Identity and Access Management (IAM) role. The domain’s role must also allow Amazon ECR access.

- To enable Local Mode and Docker capabilities, you must set the EnableDockerAccess parameter to true for the domain’s DockerSettings using the AWS Command Line Interface (AWS CLI). This allows users in the domain to use Local Mode and Docker features. By default, Local Mode and Docker are disabled in SageMaker Studio. Any existing SageMaker Studio apps will need to be restarted for the Docker service update to take effect. The following is an example AWS CLI command for updating a SageMaker Studio domain:

- You must update the SageMaker IAM role in order to be able to push Docker images to Amazon ECR:
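The two configuration prerequisites above can also be sketched in Python with boto3 instead of the AWS CLI. This is an illustrative sketch, not the post's own command or policy: the helper names are hypothetical, the EnableDockerAccess value is written as the string ENABLED on the assumption that the UpdateDomain API expresses the setting that way, and the list of ECR actions is an assumed minimal set for pushing images.

```python
def docker_access_update_request(domain_id):
    """Build arguments for the SageMaker UpdateDomain call that turns on Docker access."""
    return {
        "DomainId": domain_id,
        "DomainSettingsForUpdate": {
            # Assumption: the API takes ENABLED / DISABLED rather than a boolean.
            "DockerSettings": {"EnableDockerAccess": "ENABLED"}
        },
    }


def ecr_push_policy(region, account_id):
    """Build an IAM policy document that allows pushing images to the account's ECR repositories."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Action": [
                    "ecr:CompleteLayerUpload",
                    "ecr:UploadLayerPart",
                    "ecr:InitiateLayerUpload",
                    "ecr:BatchCheckLayerAvailability",
                    "ecr:PutImage",
                ],
                "Resource": f"arn:aws:ecr:{region}:{account_id}:repository/*",
            },
            {
                # Needed to obtain a registry login token.
                "Effect": "Allow",
                "Action": "ecr:GetAuthorizationToken",
                "Resource": "*",
            },
        ],
    }


def enable_docker_access(domain_id, region):
    """Apply the domain update; requires boto3 and permission to update the domain."""
    import boto3  # imported lazily so the builders above work without boto3

    client = boto3.client("sagemaker", region_name=region)
    return client.update_domain(**docker_access_update_request(domain_id))
```

The policy dictionary can be serialized with json.dumps and attached to the SageMaker execution role. Scope the Resource to specific repositories if your account requires tighter permissions.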

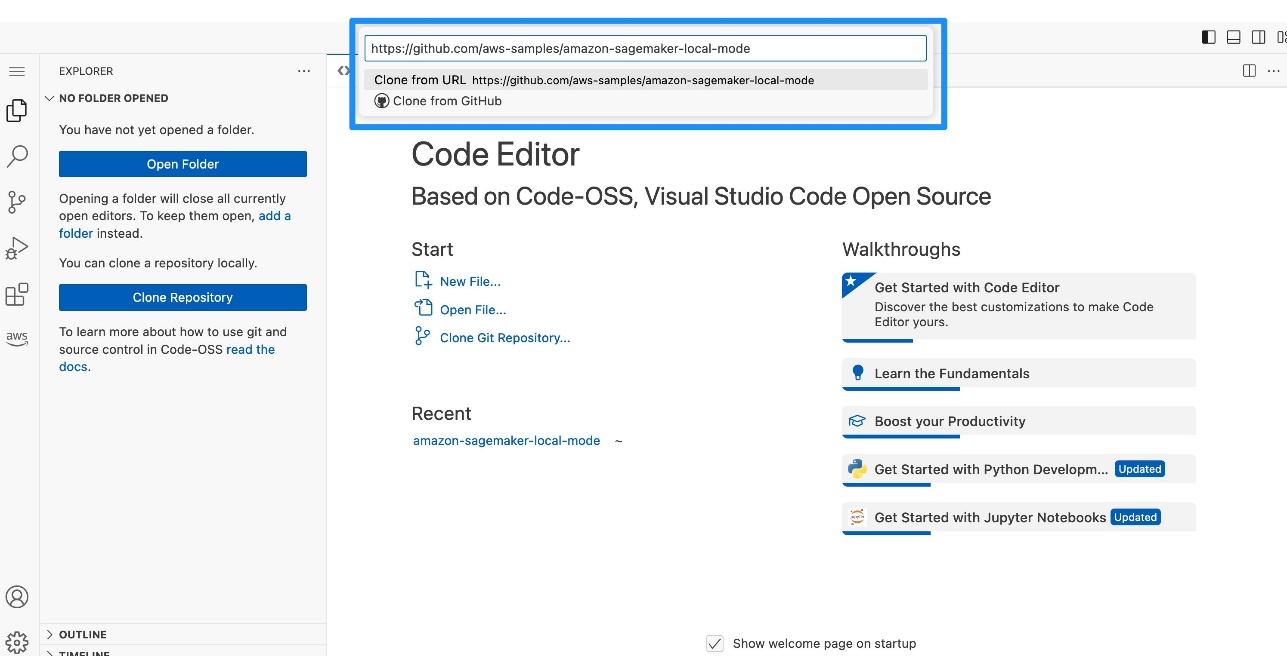

Run Python files in SageMaker Studio spaces using Local Mode

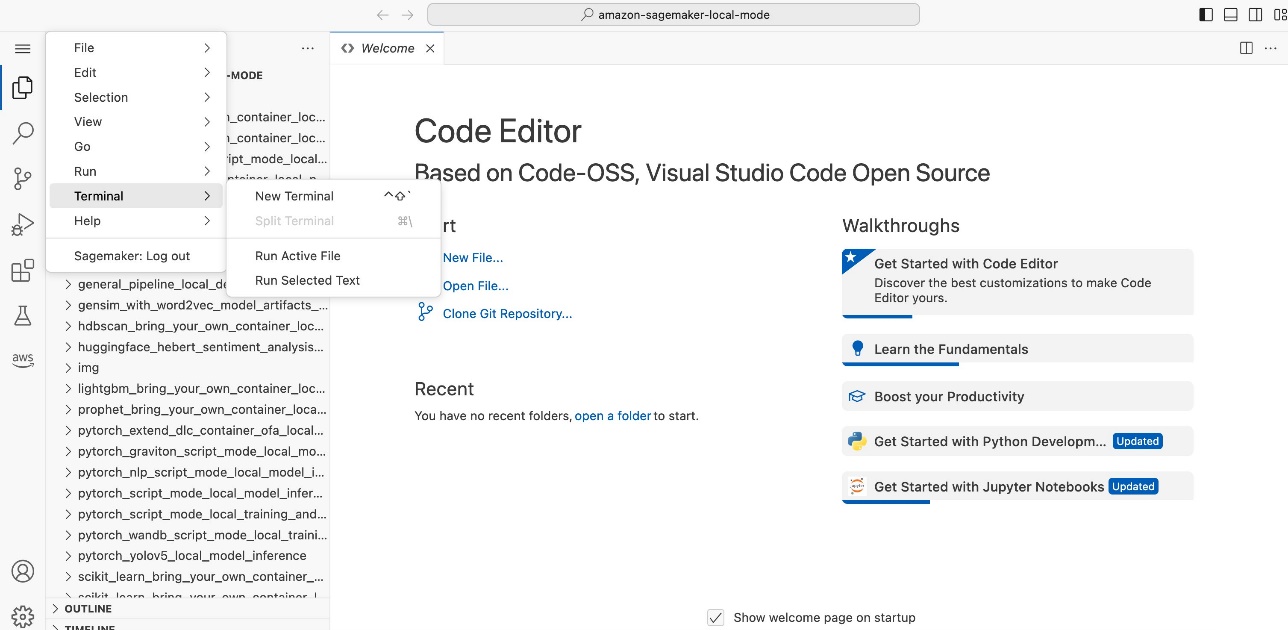

SageMaker Studio JupyterLab and Code Editor (based on Code-OSS, Visual Studio Code – Open Source) extend SageMaker Studio so you can write, test, debug, and run your analytics and ML code using a popular lightweight IDE. For more details on how to get started with SageMaker Studio IDEs, refer to Boost productivity on Amazon SageMaker Studio: Introducing JupyterLab Spaces and generative AI tools and New – Code Editor, based on Code-OSS VS Code Open Source now available in Amazon SageMaker Studio. Complete the following steps:

- Create a new terminal.

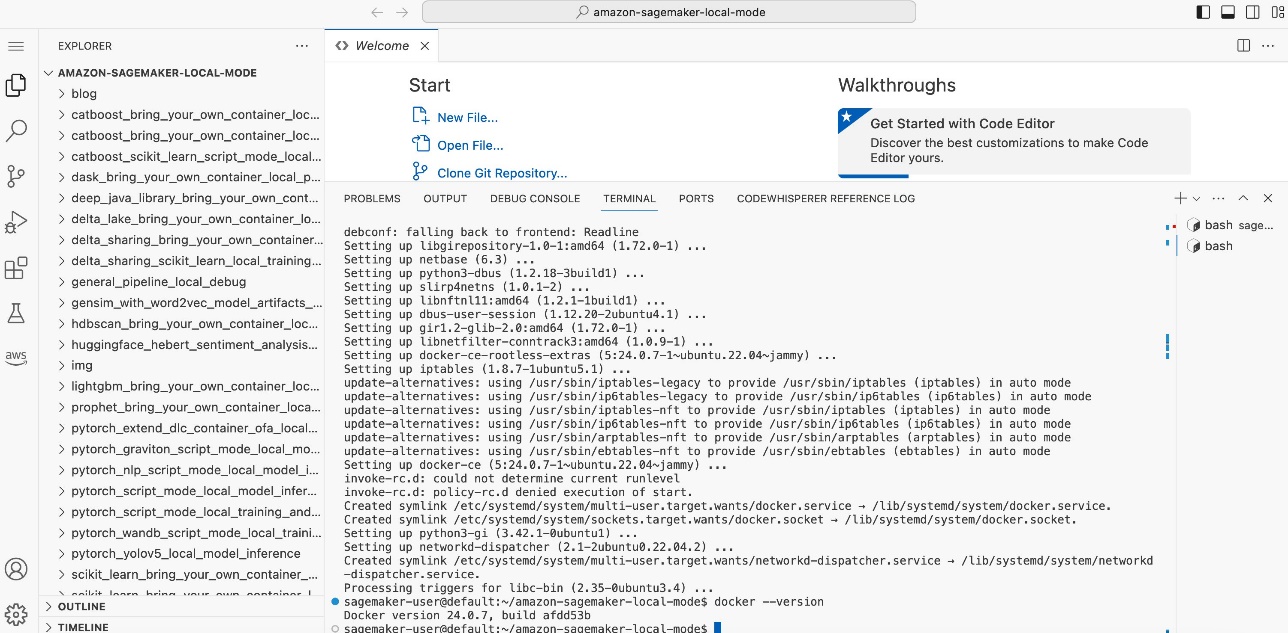

- Install the Docker CLI and Docker Compose plugin following the instructions in the following GitHub repo. If chained commands fail, run the commands one by one.

You must update the SageMaker SDK to the latest version.

- Run pip install sagemaker -Uq in the terminal.

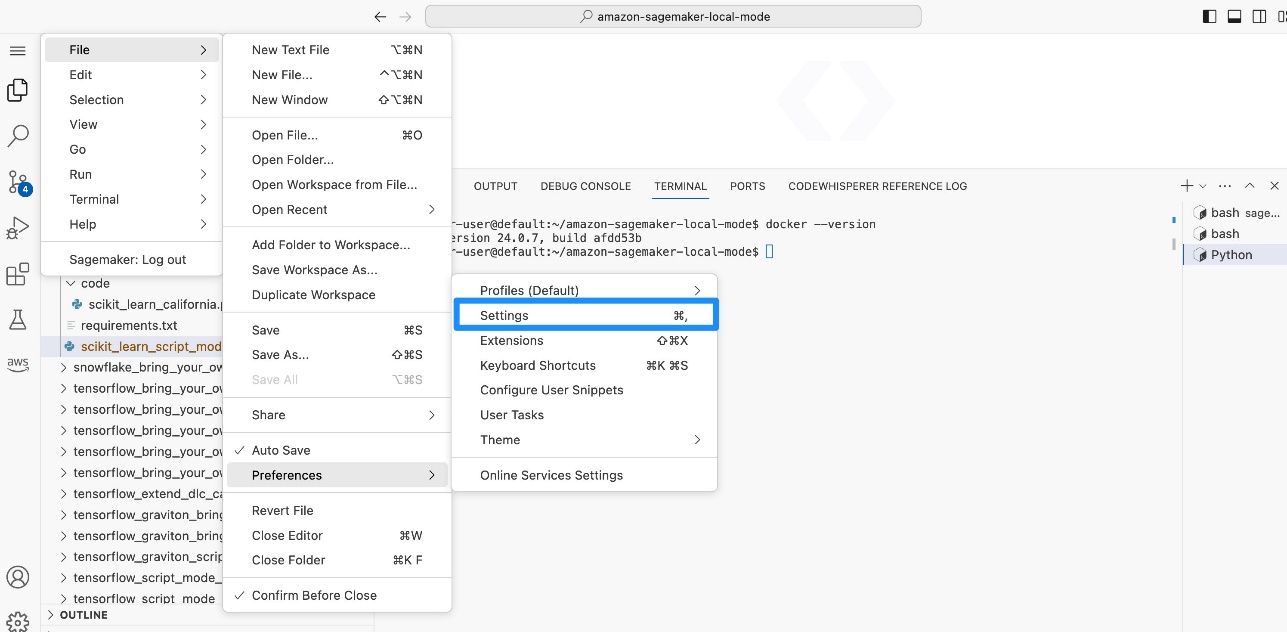

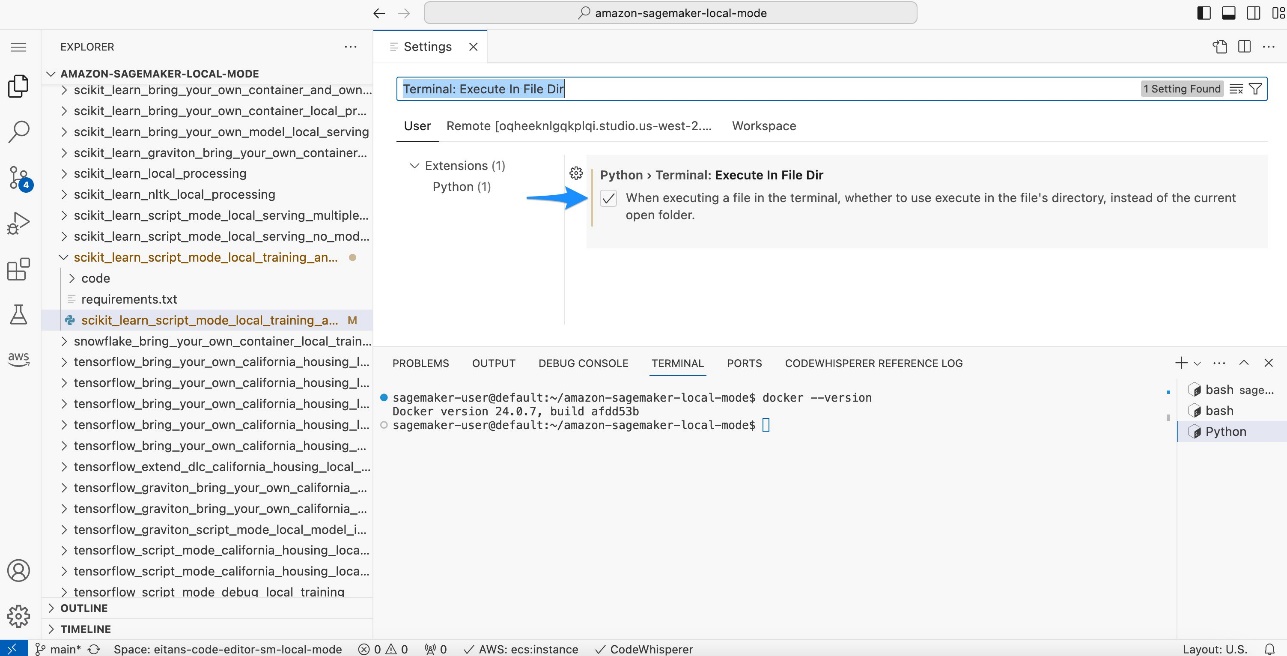

For Code Editor only, you need to set the Python environment to run in the current terminal.

- In Code Editor, on the File menu, choose Preferences and Settings.

- Search for and select Terminal: Execute in File Dir.

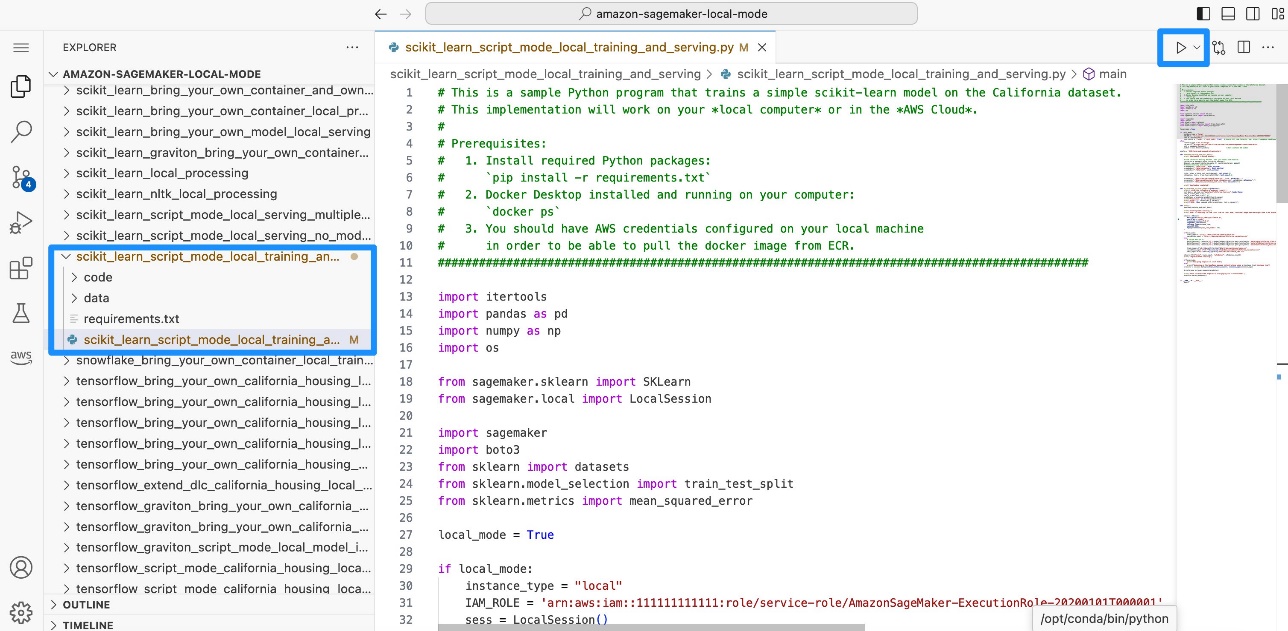

- In Code Editor or JupyterLab, open the scikit_learn_script_mode_local_training_and_serving folder and run the scikit_learn_script_mode_local_training_and_serving.py file.

You can run the script by choosing Run in Code Editor or using the CLI in a JupyterLab terminal.

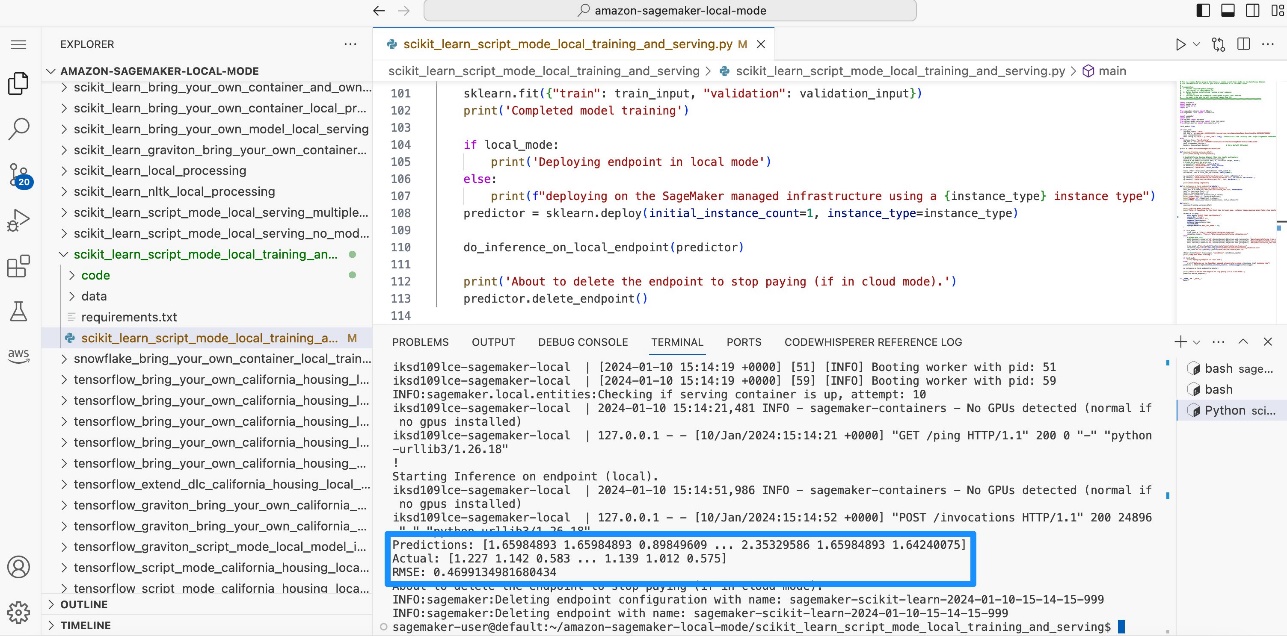

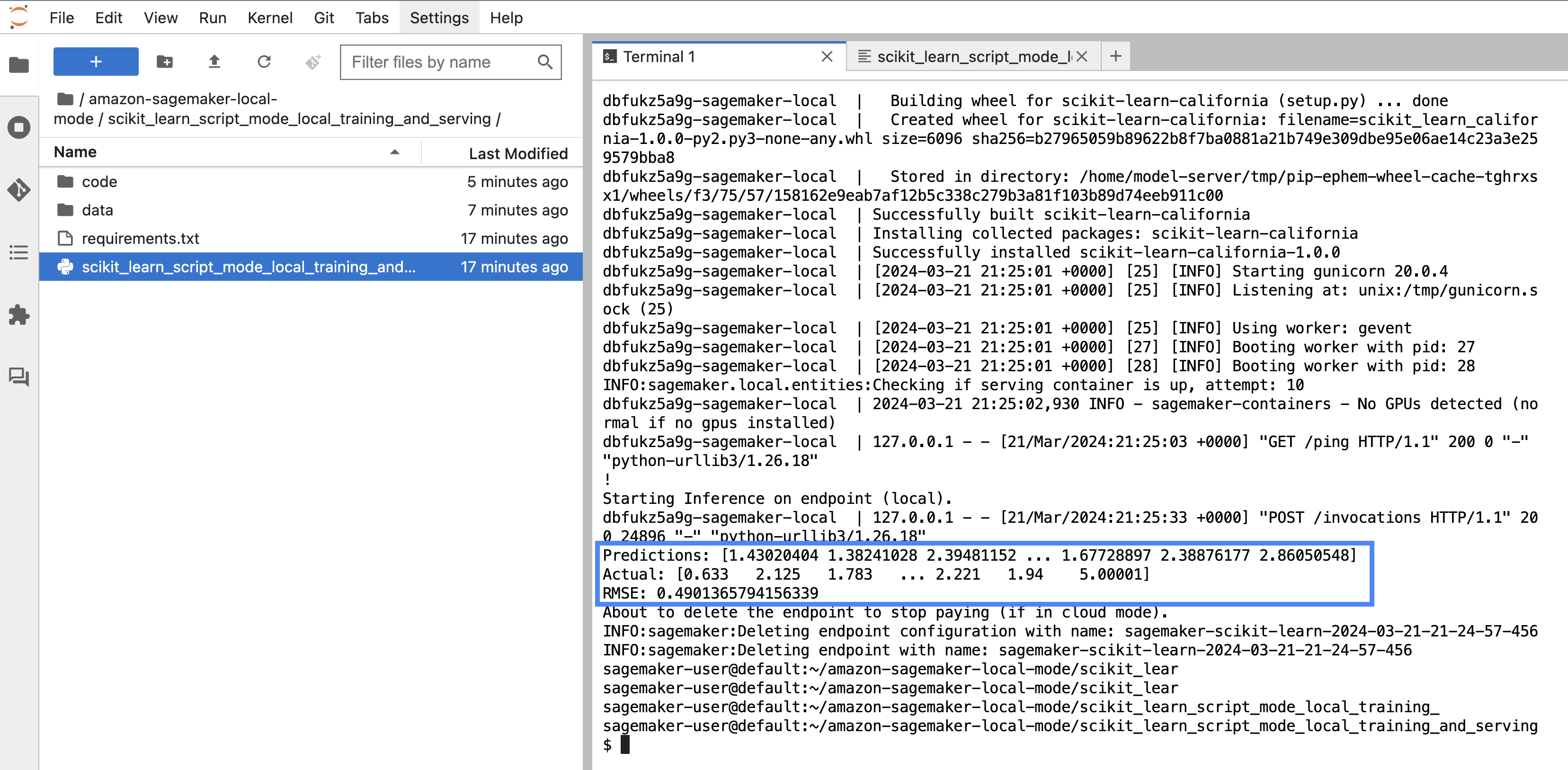

You will be able to see how the model is trained locally. Then you deploy the model to a SageMaker endpoint locally, and calculate the root mean square error (RMSE).
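For reference, RMSE is the square root of the mean of the squared differences between predictions and true values. The following is a small standard-library sketch of that calculation; the function name and the sample numbers are made up for illustration and are not output from the sample script.

```python
import math


def rmse(y_true, y_pred):
    """Root mean square error between two equal-length sequences of numbers."""
    if len(y_true) != len(y_pred) or len(y_true) == 0:
        raise ValueError("inputs must be non-empty and of equal length")
    squared_errors = [(t - p) ** 2 for t, p in zip(y_true, y_pred)]
    return math.sqrt(sum(squared_errors) / len(squared_errors))


# Every prediction is off by exactly 2, so the RMSE is 2.0.
print(rmse([1.0, 2.0, 3.0, 4.0], [3.0, 4.0, 1.0, 2.0]))
```

A lower RMSE on held-out data indicates predictions that sit closer to the true values, which makes it a quick sanity check after a local training run.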

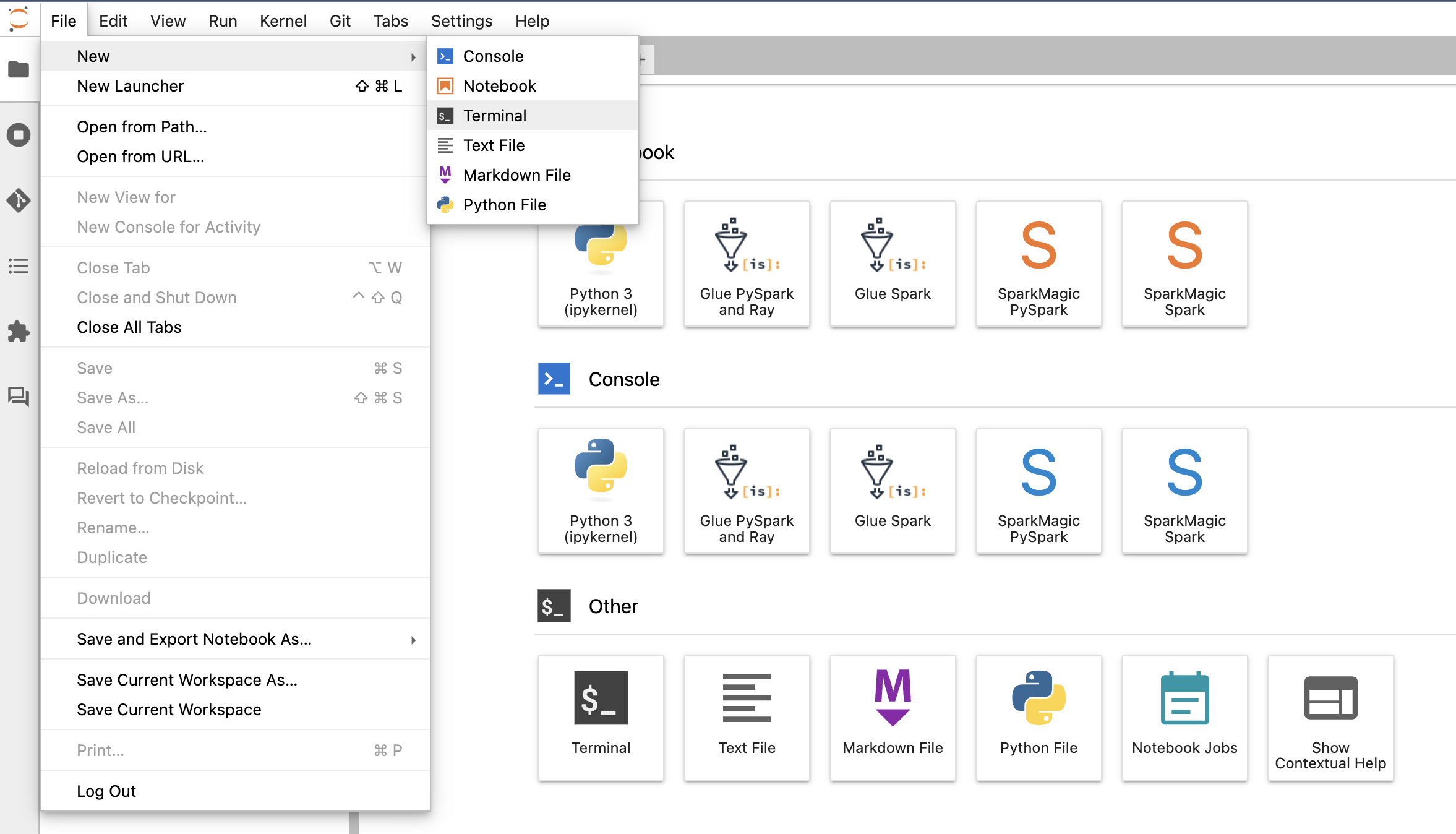

Simulate training and inference in SageMaker Studio Classic using Local Mode

You can also use a notebook in SageMaker Studio Classic to run a small-scale training job on CIFAR10 using Local Mode, deploy the model locally, and perform inference.

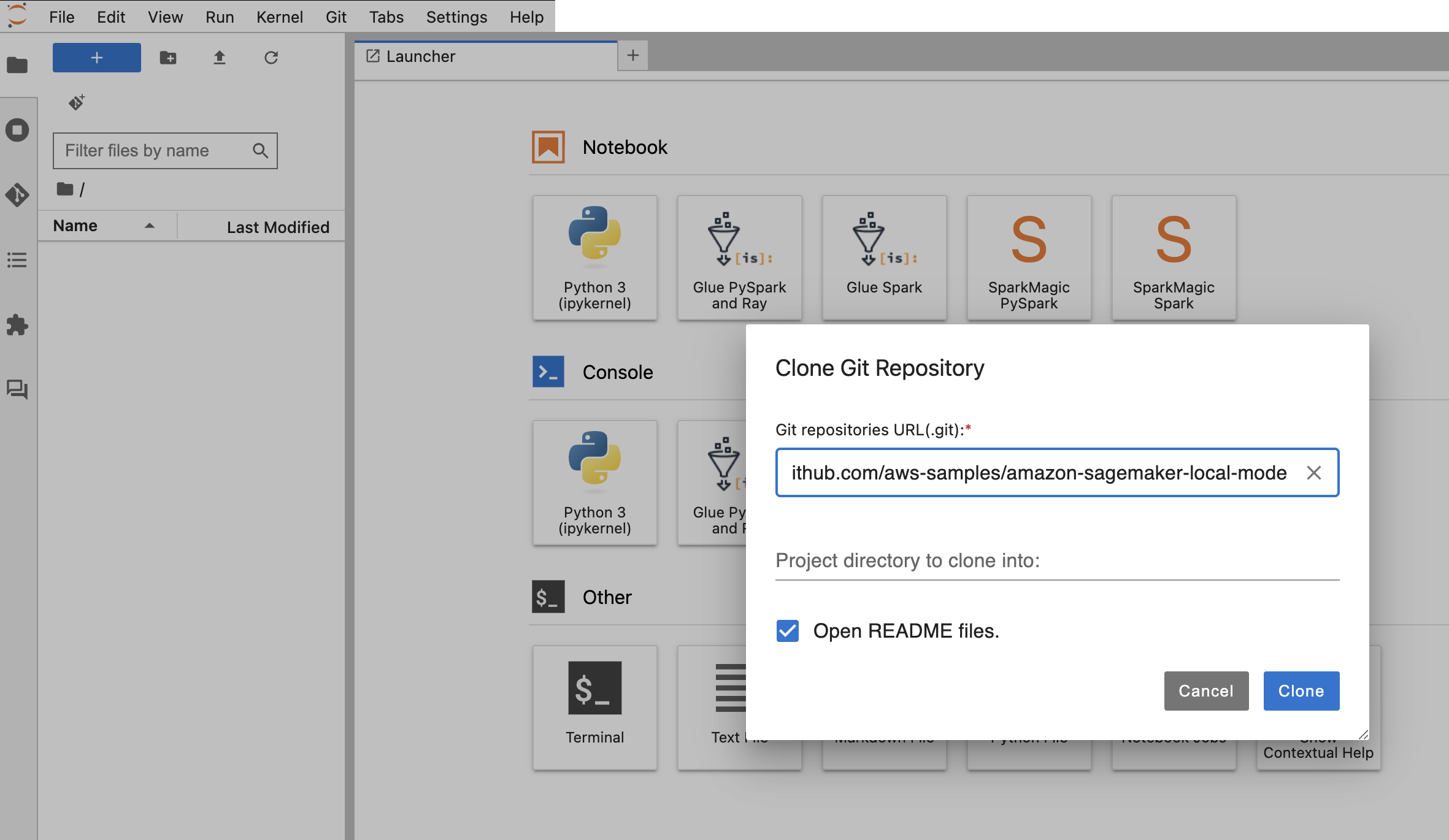

Set up your notebook

To set up the notebook, complete the following steps:

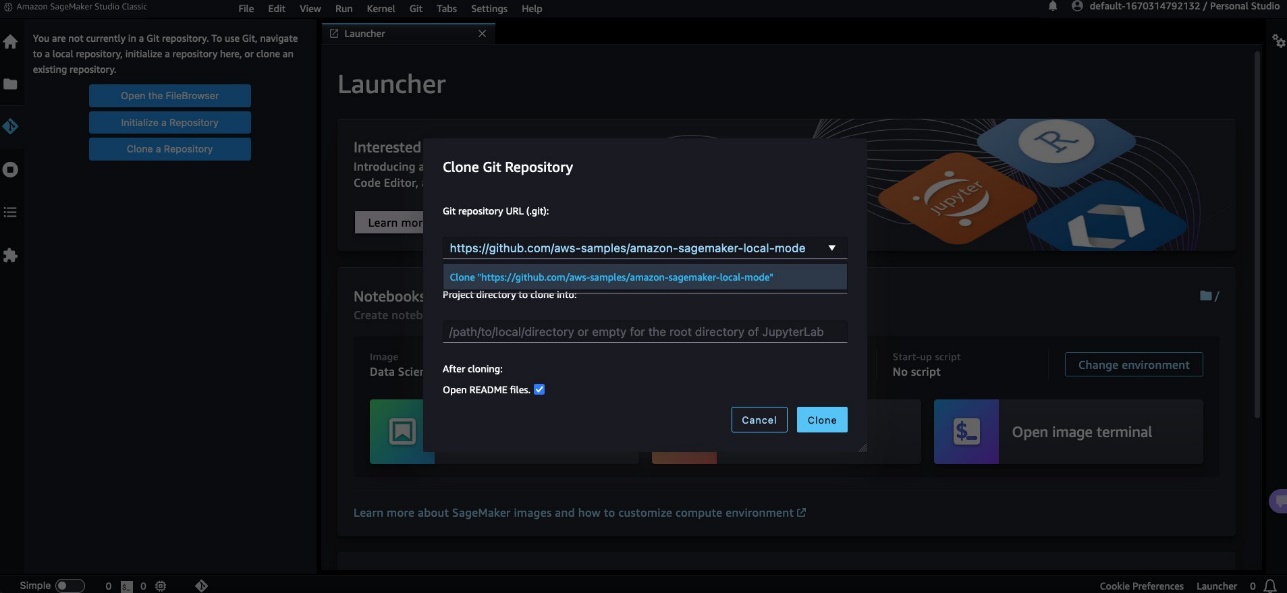

- Open SageMaker Studio Classic and clone the following GitHub repo.

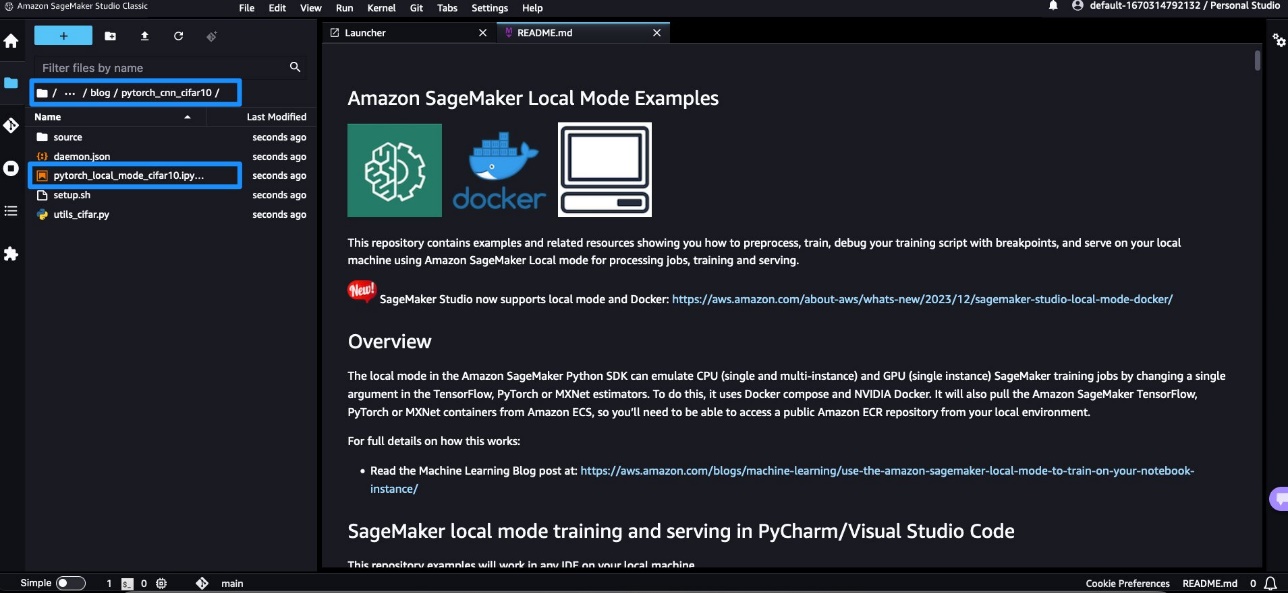

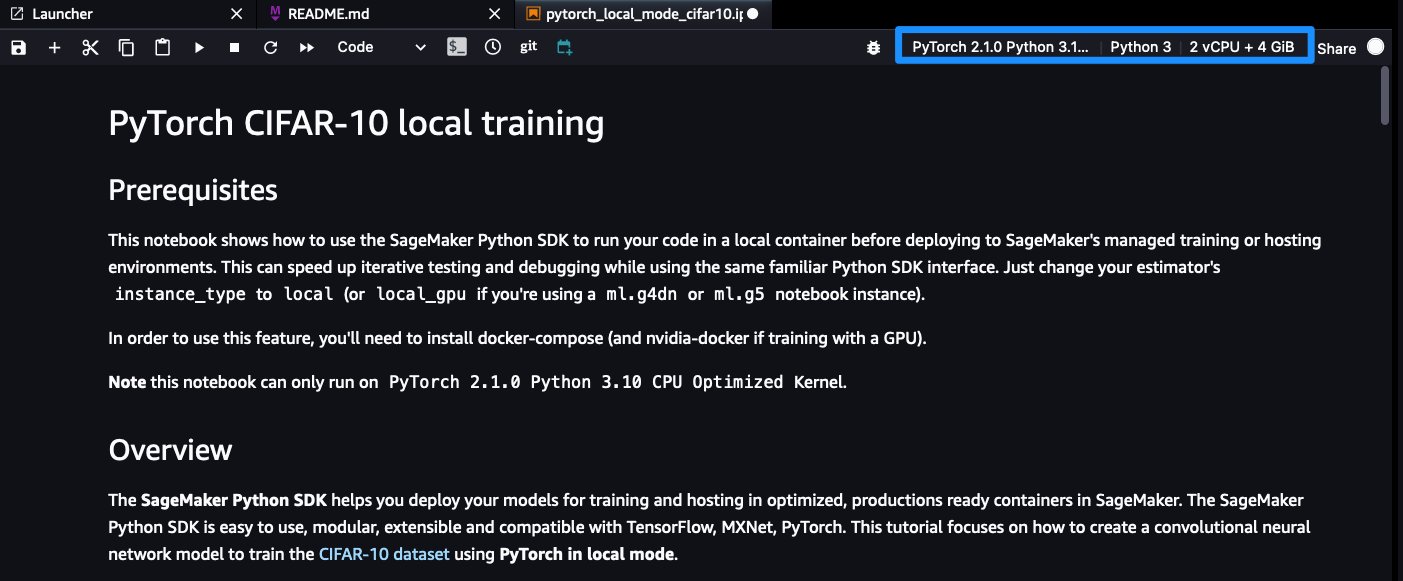

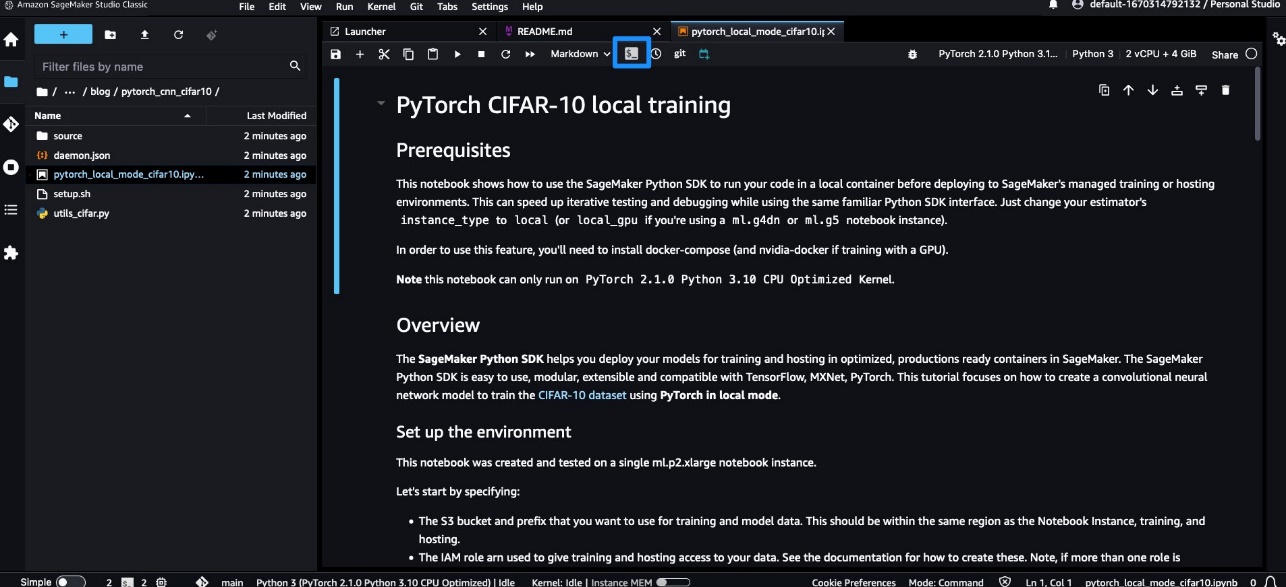

- Open the pytorch_local_mode_cifar10.ipynb notebook in blog/pytorch_cnn_cifar10.

- For Image, choose PyTorch 2.1.0 Python 3.10 CPU Optimized.

Confirm that your notebook shows the correct instance and kernel selection.

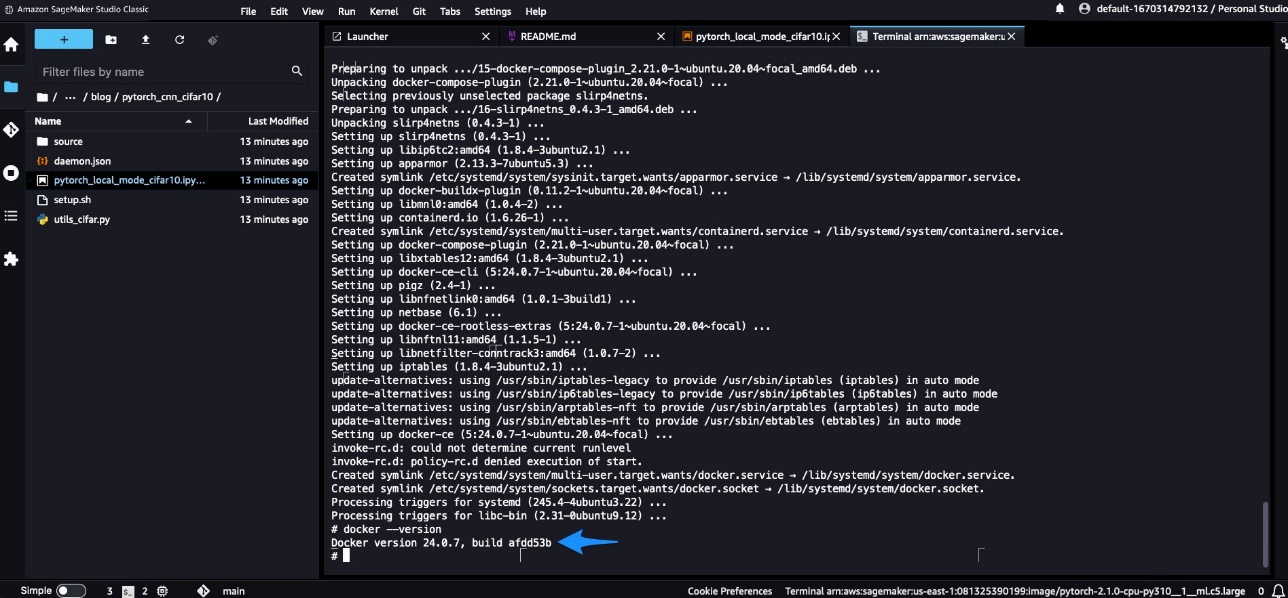

- Open a terminal by choosing Launch Terminal in the current SageMaker image.

- Install the Docker CLI and Docker Compose plugin following the instructions in the following GitHub repo.

Because you’re using Docker from SageMaker Studio Classic, remove sudo when running commands because the terminal already runs under superuser. For SageMaker Studio Classic, the installation commands depend on the SageMaker Studio app image OS. For example, DLC-based framework images are Ubuntu based, in which case the following instructions would work. However, for a Debian-based image like DataScience Images, you must follow the instructions in the following GitHub repo. If chained commands fail, run the commands one by one. You should see the Docker version displayed.

- Leave the terminal window open, go back to the notebook, and start running it cell by cell.

Make sure to run the cell with pip install -U sagemaker so you’re using the latest version of the SageMaker Python SDK.

Local training

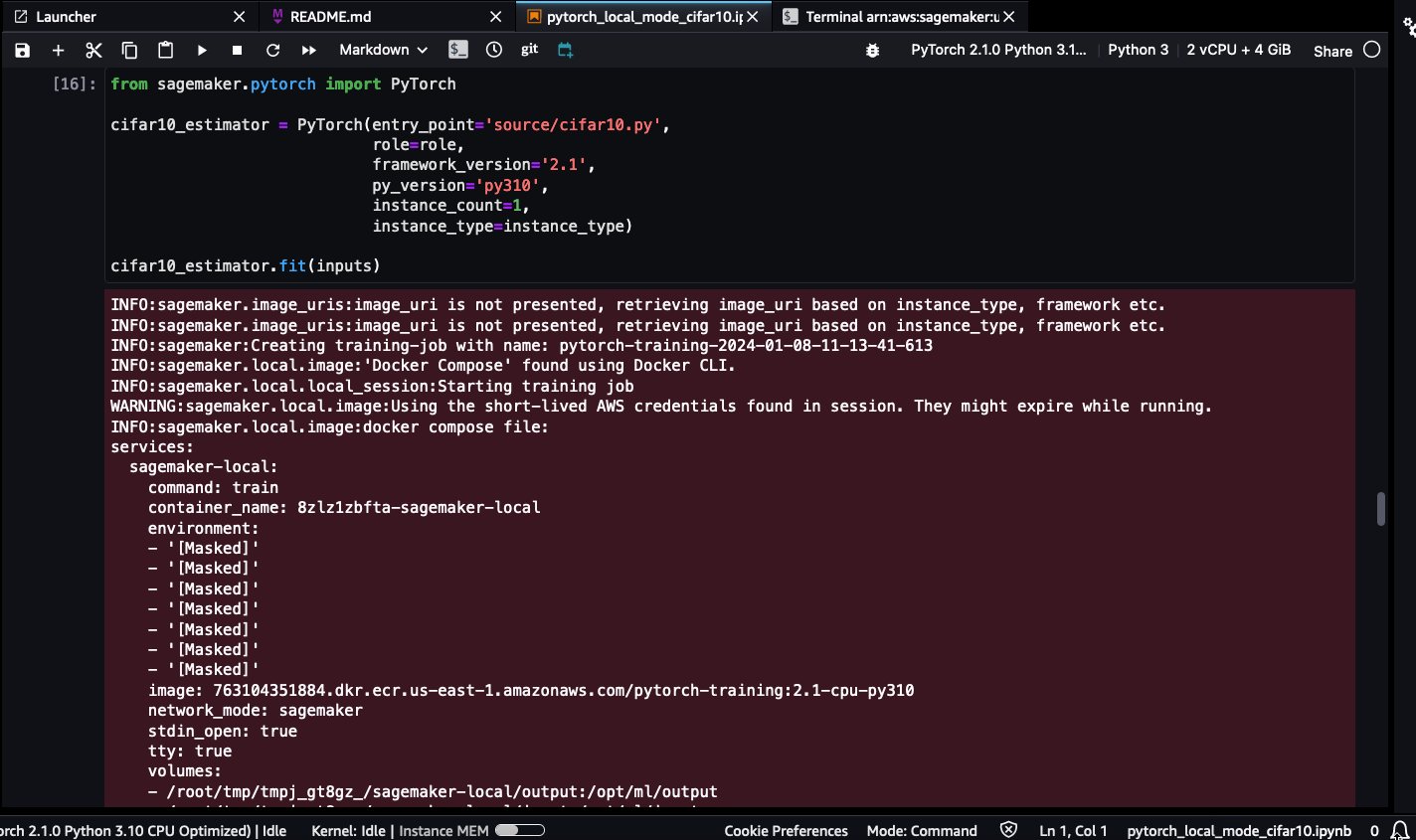

When you start running the local SageMaker training job, you will see the following log lines:

This indicates that the training was running locally using Docker.

Be patient while the pytorch-training:2.1-cpu-py310 Docker image is pulled. Due to its large size (5.2 GB), it could take a few minutes.

Docker images will be stored in the SageMaker Studio app instance’s root volume, which is not accessible to end-users. The only way to access and interact with Docker images is via the exposed Docker API operations.

From a user confidentiality standpoint, the SageMaker Studio platform never accesses or stores user-specific images.

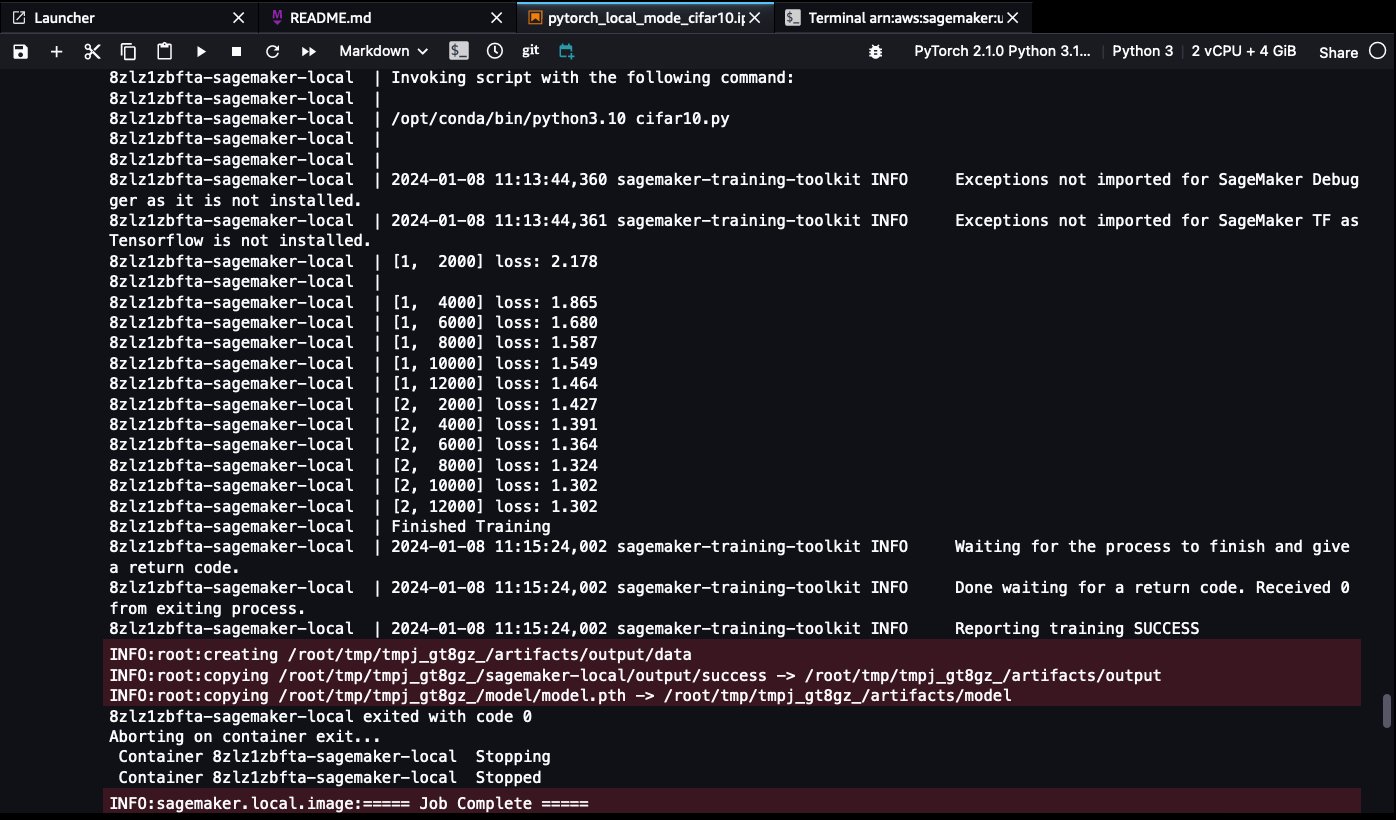

When the training is complete, you’ll be able to see the following success log lines:

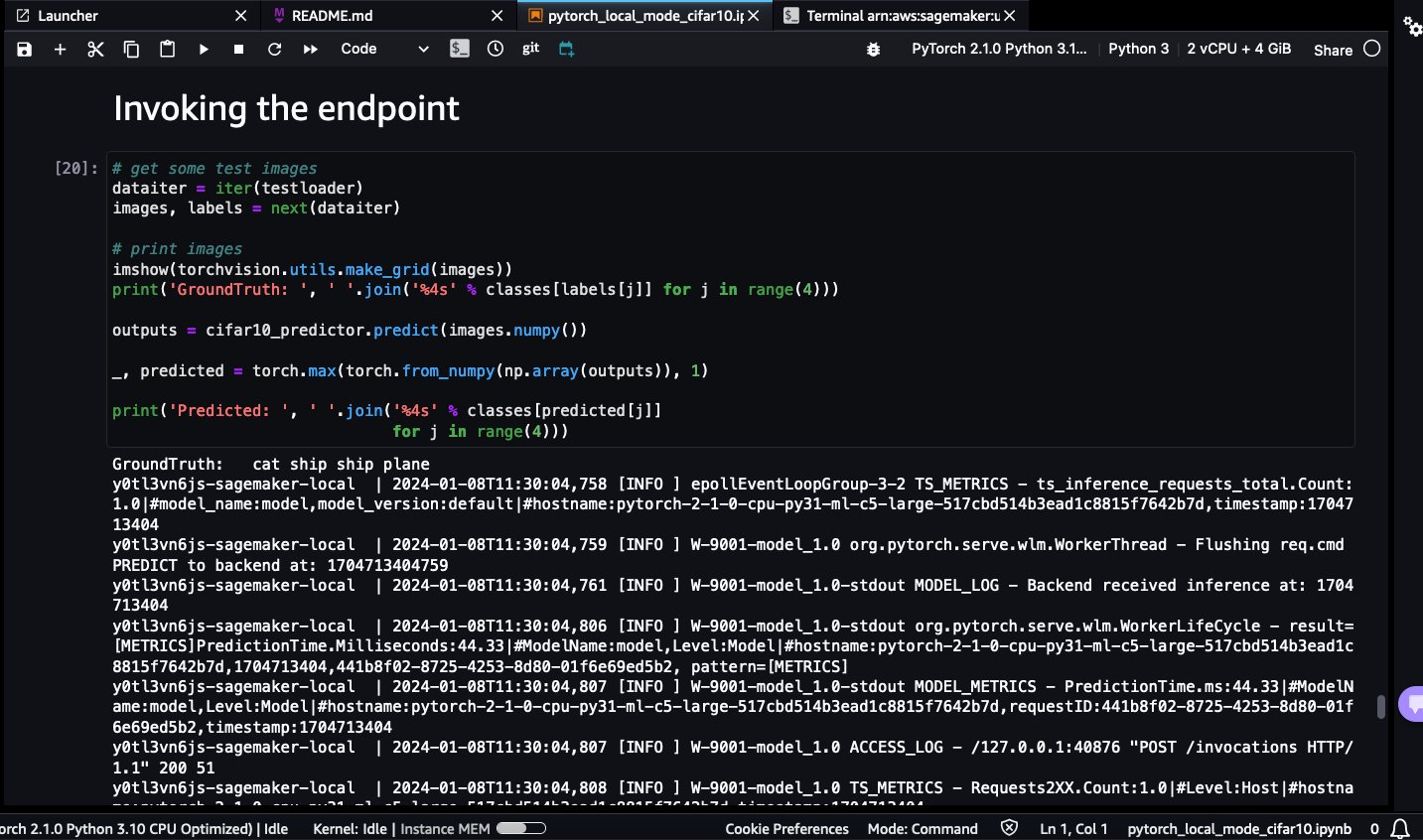

Local inference

Complete the following steps:

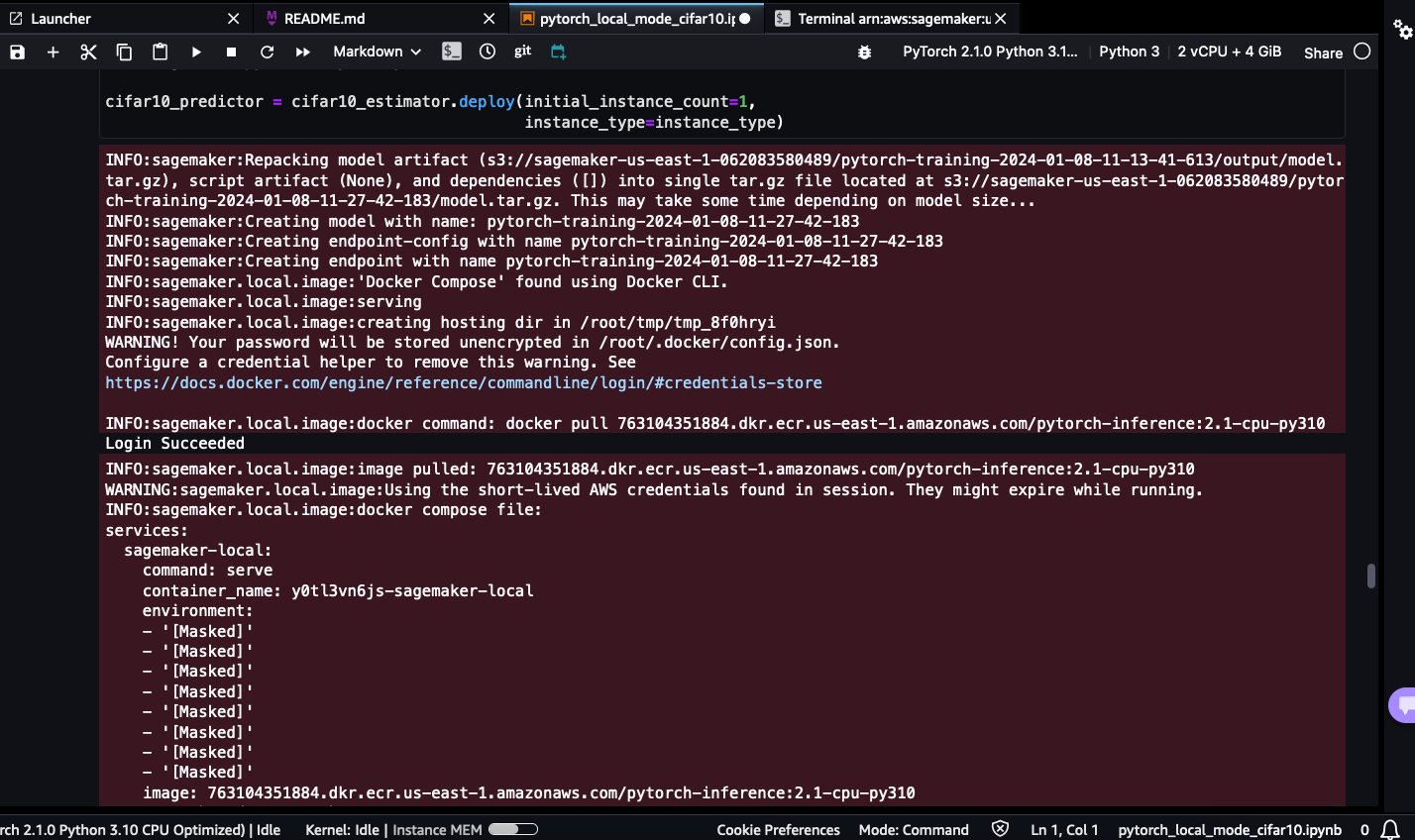

- Deploy the SageMaker endpoint using SageMaker Local Mode.

Be patient while the pytorch-inference:2.1-cpu-py310 Docker image is pulled. Due to its large size (4.32 GB), it could take a few minutes.

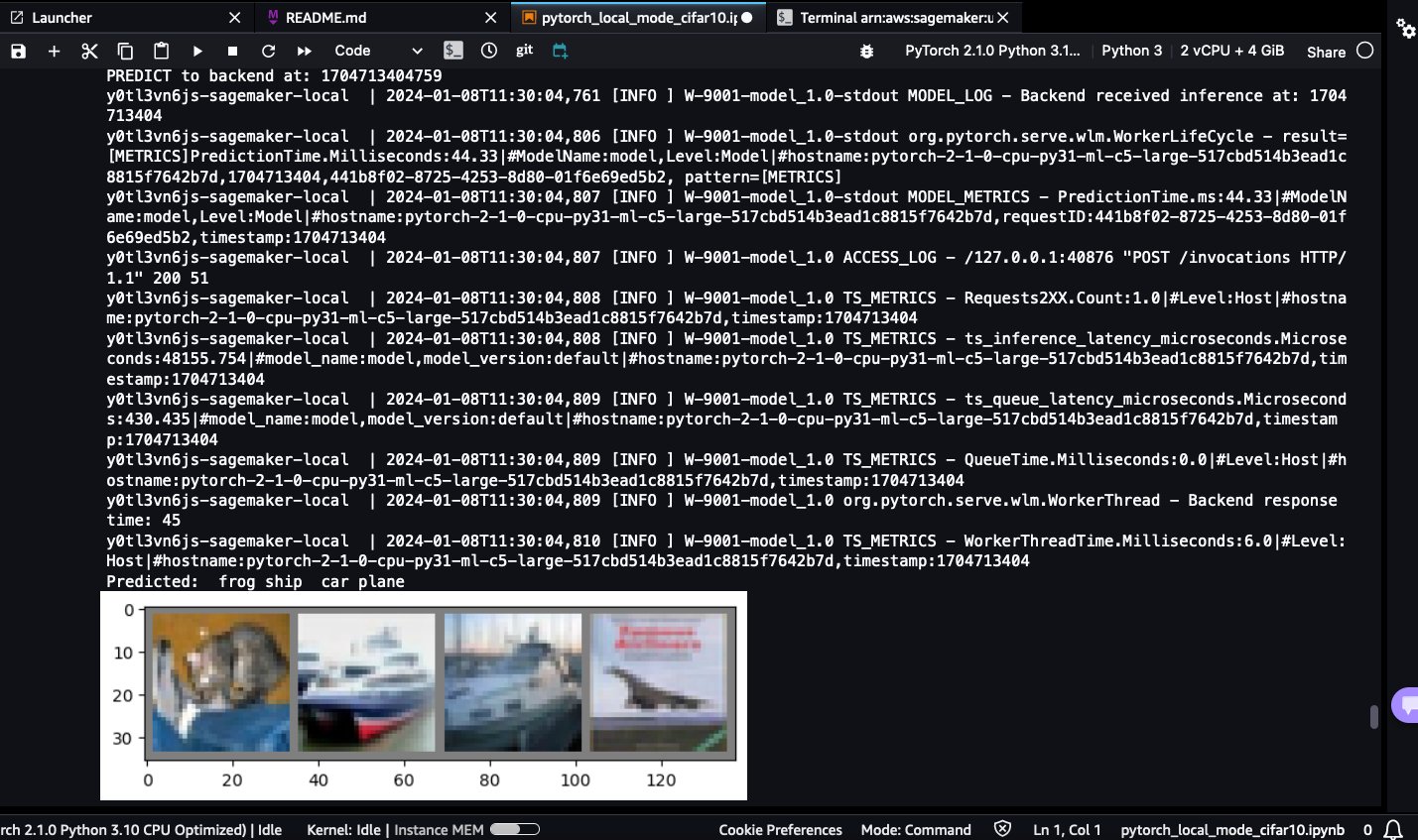

- Invoke the SageMaker endpoint deployed locally using the test images.

You will be able to see the predicted classes: frog, ship, car, and airplane:

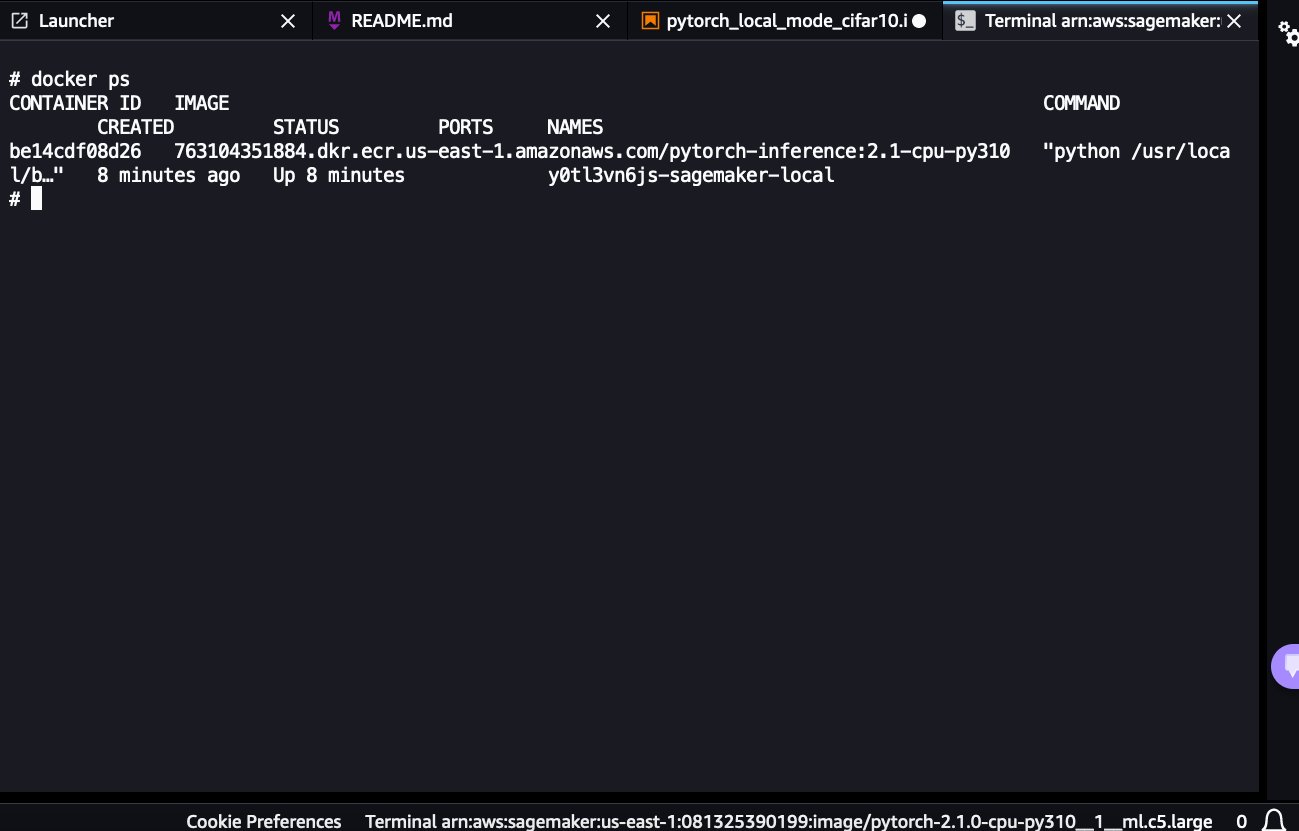

- Because the SageMaker Local endpoint is still up, navigate back to the open terminal window and list the running containers:

docker ps

You’ll be able to see the running pytorch-inference:2.1-cpu-py310 container backing the SageMaker endpoint.

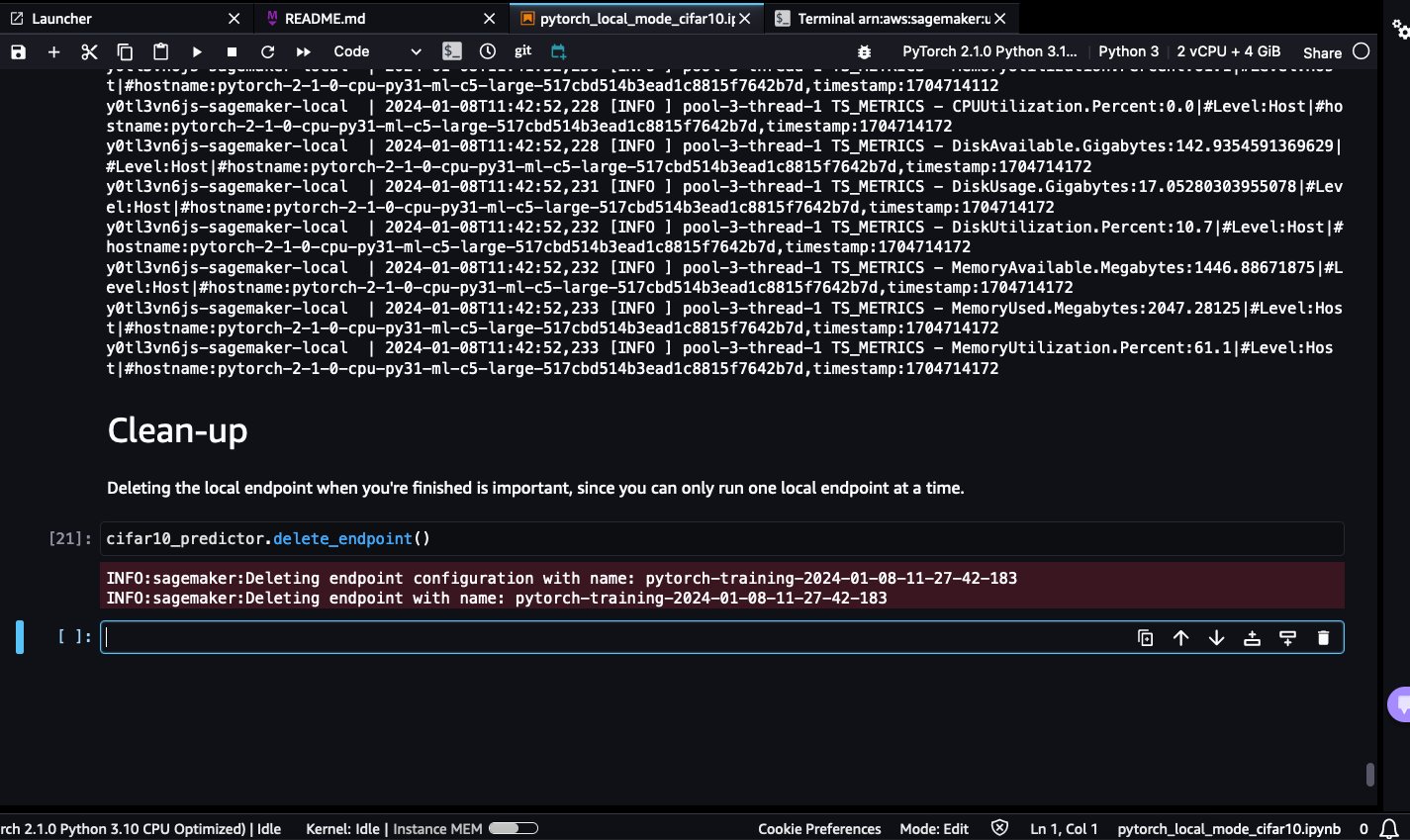

- Because you can only run one local endpoint at a time, run the cleanup code to shut down the SageMaker local endpoint and stop the running container.

- To make sure the Docker container is down, you can navigate to the opened terminal window, run docker ps, and make sure there are no running containers.

- If you see a container running, run docker stop <CONTAINER_ID> to stop it.
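The container check in the last two steps can also be scripted. The sketch below shells out to docker ps with a Go-template format string and parses the result into ID and image pairs. The function names are hypothetical, and the sample text used with the parser is an invented illustration rather than real command output.

```python
import subprocess

# One container per line: "<container id> <image name>"
PS_FORMAT = "{{.ID}} {{.Image}}"


def parse_ps_output(text):
    """Turn 'docker ps --format' output into a list of (container_id, image) tuples."""
    containers = []
    for line in text.splitlines():
        line = line.strip()
        if not line:
            continue  # skip blank lines
        container_id, _, image = line.partition(" ")
        containers.append((container_id, image))
    return containers


def running_containers():
    """List running containers; requires the Docker CLI to be installed and reachable."""
    result = subprocess.run(
        ["docker", "ps", "--format", PS_FORMAT],
        capture_output=True,
        text=True,
        check=True,
    )
    return parse_ps_output(result.stdout)
```

An empty list from running_containers() means the local endpoint's container is gone. Otherwise, each returned ID can be passed to docker stop.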

Tips for using SageMaker Local Mode

If you’re using SageMaker for the first time, refer to Train machine learning models. To learn more about deploying models for inference with SageMaker, refer to Deploy models for inference.

Consider the following tips:

- Print input and output files and folders to understand dataset and model loading

- Use 1–2 epochs and small datasets for fast testing

- Pre-install dependencies in a Dockerfile to optimize environment setup

- Isolate serialization code in endpoints for debugging
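As one way to apply the first tip, a training or inference script can print the files it actually sees inside the container. The following sketch walks a set of directories and prints their contents. The helper names are hypothetical, and the example paths /opt/ml/input/data/train and /opt/ml/model are the conventional SageMaker container locations, assumed here rather than taken from the post.

```python
import os


def list_tree(root):
    """Return the sorted relative paths of every file under root."""
    paths = []
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            full_path = os.path.join(dirpath, name)
            paths.append(os.path.relpath(full_path, root))
    return sorted(paths)


def print_directories(labelled_dirs):
    """Print each labelled directory and the files it contains."""
    for label, path in labelled_dirs.items():
        print(f"{label}: {path}")
        if not os.path.isdir(path):
            print("  (directory not found)")
            continue
        for relative_path in list_tree(path):
            print(f"  {relative_path}")
```

Calling print_directories({"train": "/opt/ml/input/data/train", "model": "/opt/ml/model"}) at the top of a script shows quickly whether the dataset and model artifacts were mounted where the code expects them.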

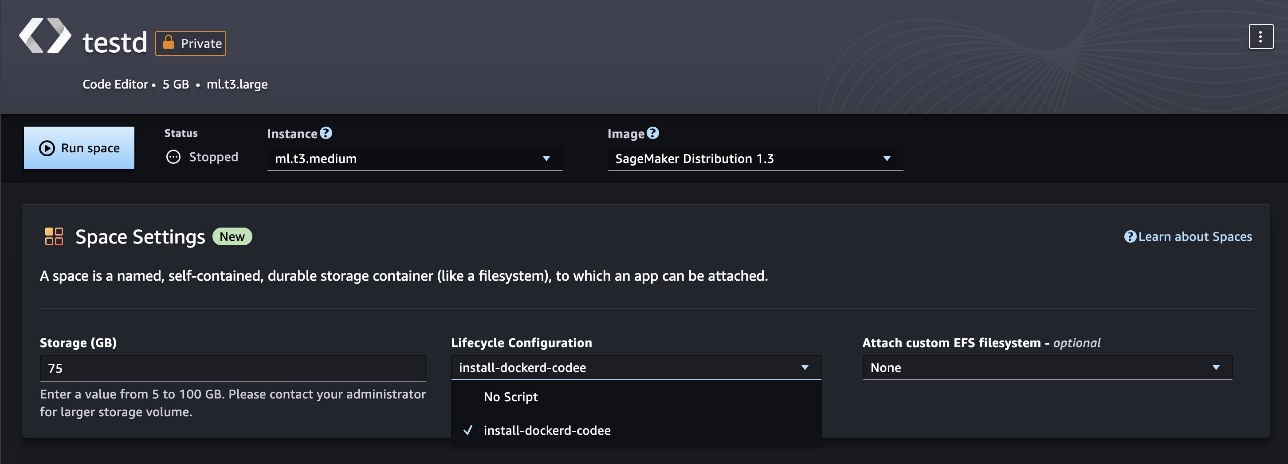

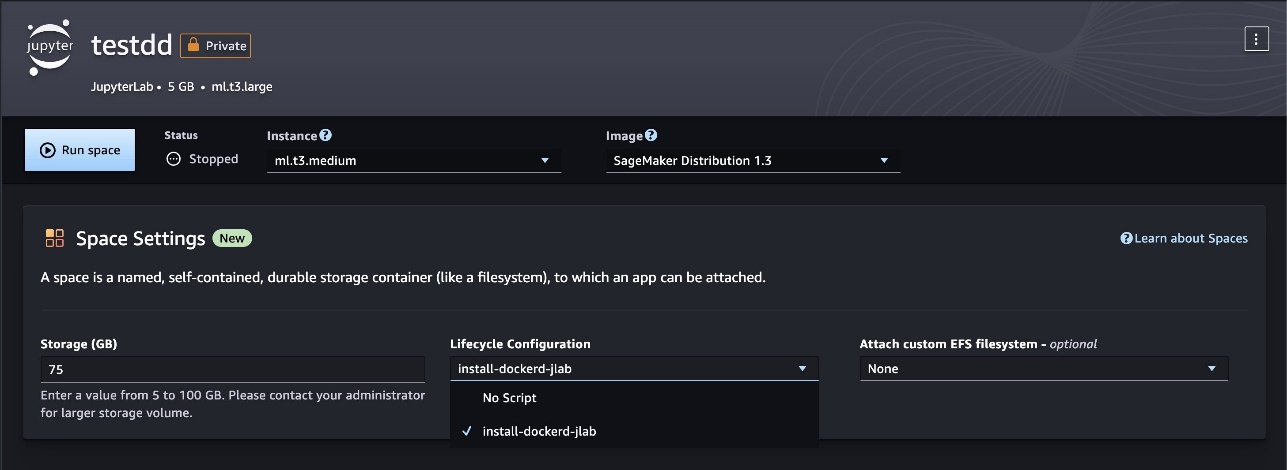

Configure Docker installation as a Lifecycle Configuration

You can define the Docker install process as a Lifecycle Configuration (LCC) script to simplify setup each time a new SageMaker Studio space starts. LCCs are scripts that SageMaker runs during events like space creation. Refer to the JupyterLab, Code Editor, or SageMaker Studio Classic LCC setup (using docker install cli as reference) to learn more.
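Registering such a script could look like the following sketch, which uses the boto3 create_studio_lifecycle_config call. The API expects the script content base64-encoded. The helper names, the configuration name, and the default app type are assumptions for illustration, and the install script itself should come from the referenced setup rather than from this example.

```python
import base64


def lifecycle_config_request(name, script_text, app_type="JupyterLab"):
    """Build arguments for create_studio_lifecycle_config with base64-encoded content."""
    encoded = base64.b64encode(script_text.encode("utf-8")).decode("ascii")
    return {
        "StudioLifecycleConfigName": name,
        "StudioLifecycleConfigContent": encoded,
        "StudioLifecycleConfigAppType": app_type,  # for example JupyterLab or CodeEditor
    }


def register_lifecycle_config(name, script_text, region, app_type="JupyterLab"):
    """Create the LCC in SageMaker; requires boto3 and suitable IAM permissions."""
    import boto3  # imported lazily so the builder above works without boto3

    client = boto3.client("sagemaker", region_name=region)
    return client.create_studio_lifecycle_config(
        **lifecycle_config_request(name, script_text, app_type)
    )
```

After creation, the LCC still has to be attached to the domain or user profile settings before new spaces pick it up.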

Build and test custom Docker images in SageMaker Studio spaces

In this step, you install Docker inside the JupyterLab (or Code Editor) app space and use Docker to build, test, and publish custom Docker images with SageMaker Studio spaces. Spaces are used to manage the storage and resource needs of some SageMaker Studio applications. Each space has a 1:1 relationship with an instance of an application. Every supported application that is created gets its own space. To learn more about SageMaker spaces, refer to Boost productivity on Amazon SageMaker Studio: Introducing JupyterLab Spaces and generative AI tools. Make sure to provision a new space with at least 30 GB of storage to allow sufficient storage for Docker images and artifacts.

Install Docker inside a space

To install the Docker CLI and Docker Compose plugin inside a JupyterLab space, run the commands in the following GitHub repo. SageMaker Studio only supports Docker version 20.10.X.
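Because only the 20.10.X line is supported, it can help to check the installed client version before building images. The helper below is a hypothetical sketch that tests whether a version string belongs to the 20.10 series. It assumes you obtain the string yourself, for example from the output of docker version.

```python
import re


def is_supported_docker_version(version):
    """Return True when the version string is in the 20.10.X series."""
    match = re.match(r"^(\d+)\.(\d+)(\.|$)", version.strip())
    if match is None:
        return False  # not a recognizable major.minor version
    major, minor = int(match.group(1)), int(match.group(2))
    return (major, minor) == (20, 10)


print(is_supported_docker_version("20.10.24"))
print(is_supported_docker_version("24.0.5"))
```

The first call reports a supported version and the second does not, since 24.0.5 falls outside the 20.10 series.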

Build Docker images

To confirm that Docker is installed and working inside your JupyterLab space, run the following code:

To build a custom Docker image inside a JupyterLab (or Code Editor) space, complete the following steps:

- Create an empty Dockerfile:

touch Dockerfile

- Edit the Dockerfile with the following commands, which create a simple Flask web server image from the base python:3.10.13-bullseye image hosted on Docker Hub:

The following code shows the contents of an example Flask application file app.py:

Additionally, you can update the reference Dockerfile commands to include packages and artifacts of your choice.
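A Flask application of the kind described here might be sketched as follows. This is a hypothetical stand-in, not the post's original app.py: the response text, the function names, and the single root route are assumptions, and Flask must be installed in the image for create_app to work.

```python
def hello_payload():
    """Return the JSON-serializable body served at the root URL (illustrative content)."""
    return {"response": "Hello from Flask in SageMaker Studio"}


def create_app():
    """Build a small Flask app with one route; requires the flask package."""
    # Imported lazily so hello_payload can be used without Flask installed.
    from flask import Flask, jsonify

    app = Flask(__name__)

    @app.route("/")
    def index():
        return jsonify(hello_payload())

    return app
```

Inside the container, calling create_app().run(host="0.0.0.0", port=6006) would serve the app on port 6006, which matches the port used in the proxy URL later in this post.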

- Build a Docker image using the reference Dockerfile:

docker build --network sagemaker --tag myflaskapp:v1 --file ./Dockerfile .

Include --network sagemaker in your docker build command, otherwise the build will fail. Containers can’t be run in the Docker default bridge or custom Docker networks. Containers are run in the same network as the SageMaker Studio application container. Users can only use sagemaker for the network name.

- When your build is complete, validate that the image exists. Re-tag the build as an ECR image and push. If you run into permission issues, run the aws ecr get-login-password… command and try to rerun the Docker push/pull:
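The build, validate, re-tag, and push sequence above can be sketched as a list of Docker CLI invocations assembled in Python. The helper names are hypothetical. The account ID, Region, and image name reuse the example values that appear elsewhere in this post, and the ECR repository is assumed to already exist with the same name as the local image.

```python
def ecr_image_uri(account_id, region, repository, tag):
    """Compose the ECR image URI for a repository and tag."""
    return f"{account_id}.dkr.ecr.{region}.amazonaws.com/{repository}:{tag}"


def build_tag_push_commands(local_image, account_id, region):
    """Return the docker commands to build, list, re-tag, and push an image."""
    repository, _, tag = local_image.partition(":")
    remote_image = ecr_image_uri(account_id, region, repository, tag or "latest")
    return [
        # The sagemaker network is required for builds inside SageMaker Studio.
        ["docker", "build", "--network", "sagemaker",
         "--tag", local_image, "--file", "./Dockerfile", "."],
        ["docker", "image", "ls", repository],      # validate that the image exists
        ["docker", "tag", local_image, remote_image],
        ["docker", "push", remote_image],
    ]


for command in build_tag_push_commands("myflaskapp:v1", "123456789012", "us-east-2"):
    print(" ".join(command))
```

Each inner list can be passed to subprocess.run(command, check=True) in a space where Docker is installed, or the printed lines can be copied into the terminal.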

Test Docker images

Having Docker installed inside a JupyterLab (or Code Editor) SageMaker Studio space allows you to test pre-built or custom Docker images as containers (or containerized applications). In this section, we use the docker run command to provision Docker containers inside a SageMaker Studio space to test containerized workloads like REST web services and Python scripts. Complete the following steps:

- If the test image doesn’t exist, run docker pull to pull the image into your local machine:

sagemaker-user@default:~$ docker pull 123456789012.dkr.ecr.us-east-2.amazonaws.com/myflaskapp:v1

- If you encounter authentication issues, run the following commands:

aws ecr get-login-password --region region | docker login --username AWS --password-stdin aws_account_id.dkr.ecr.region.amazonaws.com

- Create a container to check your workload:

docker run --network sagemaker 123456789012.dkr.ecr.us-east-2.amazonaws.com/myflaskapp:v1

This spins up a new container instance and runs the application defined using Docker’s ENTRYPOINT:

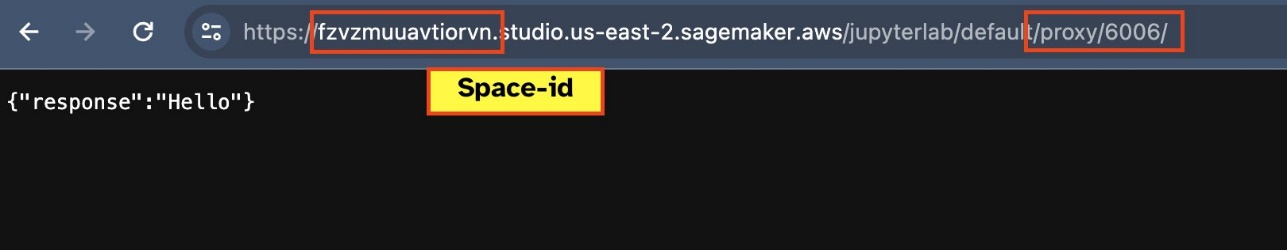

- To test if your web endpoint is active, navigate to the URL https://<sagemaker-space-id>.studio.us-east-2.sagemaker.aws/jupyterlab/default/proxy/6006/.

You should see a JSON response similar to the following screenshot.
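The proxy URL follows a fixed pattern, so it can be assembled from the space ID, Region, and container port. The helper below is a hypothetical sketch based only on the URL shown above. The space ID in the example is a made-up placeholder, and the jupyterlab path segment is taken from that URL, so it may differ for other applications.

```python
def proxy_url(space_id, region, port):
    """Compose the JupyterLab proxy URL for a port exposed inside a Studio space."""
    return f"https://{space_id}.studio.{region}.sagemaker.aws/jupyterlab/default/proxy/{port}/"


# Placeholder space ID for illustration only.
print(proxy_url("my-space-id", "us-east-2", 6006))
```

Open the resulting URL in the same browser session that is signed in to SageMaker Studio, because the proxy relies on that session for access.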

Clean up

To avoid incurring unnecessary charges, delete the resources that you created while running the examples in this post:

- In your SageMaker Studio domain, choose Studio Classic in the navigation pane, then choose Stop.

- In your SageMaker Studio domain, choose JupyterLab or Code Editor in the navigation pane, choose your app, and then choose Stop.

Conclusion

SageMaker Studio Local Mode and Docker support empower developers to build, test, and iterate on ML implementations faster without leaving their workspace. By providing instant access to test environments and outputs, these capabilities optimize workflows and improve productivity. Try out SageMaker Studio Local Mode and Docker support using our quick onboard feature, which allows you to spin up a new domain for single users within minutes. Share your thoughts in the comments section!

About the Authors

Shweta Singh is a Senior Product Manager in the Amazon SageMaker Machine Learning (ML) platform team at AWS, leading SageMaker Python SDK. She has worked in several product roles in Amazon for over 5 years. She has a Bachelor of Science degree in Computer Engineering and Masters of Science in Financial Engineering, both from New York University.

Eitan Sela is a Generative AI and Machine Learning Specialist Solutions Architect at AWS. He works with AWS customers to provide guidance and technical assistance, helping them build and operate Generative AI and Machine Learning solutions on AWS. In his spare time, Eitan enjoys jogging and reading the latest machine learning articles.

Pranav Murthy is an AI/ML Specialist Solutions Architect at AWS. He focuses on helping customers build, train, deploy and migrate machine learning (ML) workloads to SageMaker. He previously worked in the semiconductor industry developing large computer vision (CV) and natural language processing (NLP) models to improve semiconductor processes using cutting-edge ML techniques. In his free time, he enjoys playing chess and traveling. You can find Pranav on LinkedIn.

Mufaddal Rohawala is a Software Engineer at AWS. He works on the SageMaker Python SDK library for Amazon SageMaker. In his spare time, he enjoys travel, outdoor activities and is a soccer fan.