Intelligent document processing (IDP) with AWS helps automate information extraction from documents of different types and formats, quickly and with high accuracy, without the need for machine learning (ML) skills. Faster information extraction with high accuracy can help you make quality business decisions on time, while reducing overall costs. For more information, refer to Intelligent document processing with AWS AI services: Part 1.

However, complexity arises when implementing real-world scenarios. Documents are often sent out of order, or they may be sent as a combined package with multiple form types. Orchestration pipelines need to be created to introduce business logic, and also account for different processing techniques depending on the type of form inputted. These challenges are only magnified as teams deal with large document volumes.

In this post, we demonstrate how to solve these challenges using Amazon Textract IDP CDK Constructs, a set of pre-built IDP constructs, to accelerate the development of real-world document processing pipelines. For our use case, we process an Acord insurance document to enable straight-through processing, but you can extend this solution to any use case, which we discuss later in the post.

Acord document processing at scale

Straight-through processing (STP) is a term used in the financial industry to describe the automation of a transaction from start to finish without the need for manual intervention. The insurance industry uses STP to streamline the underwriting and claims process. This involves the automatic extraction of data from insurance documents such as applications, policy documents, and claims forms. Implementing STP can be challenging due to the large amount of data and the variety of document formats involved. Insurance documents are inherently varied. Traditionally, this process involves manually reviewing each document and entering the data into a system, which is time-consuming and prone to errors. This manual approach is not only inefficient but can also lead to errors that have a significant impact on the underwriting and claims process. This is where IDP on AWS comes in.

To achieve a more efficient and accurate workflow, insurance companies can integrate IDP on AWS into the underwriting and claims process. With Amazon Textract and Amazon Comprehend, insurers can read handwriting and different form formats, making it easier to extract information from various types of insurance documents. By implementing IDP on AWS into the process, STP becomes easier to achieve, reducing the need for manual intervention and speeding up the overall process.

This pipeline allows insurance carriers to easily and efficiently process their commercial insurance transactions, reducing the need for manual intervention and improving the overall customer experience. We demonstrate how to use Amazon Textract and Amazon Comprehend to automatically extract data from commercial insurance documents, such as Acord 140, Acord 125, Affidavit of Home Ownership, and Acord 126, and analyze the extracted data to facilitate the underwriting process. These services can help insurance carriers improve the accuracy and speed of their STP processes, ultimately providing a better experience for their customers.

Solution overview

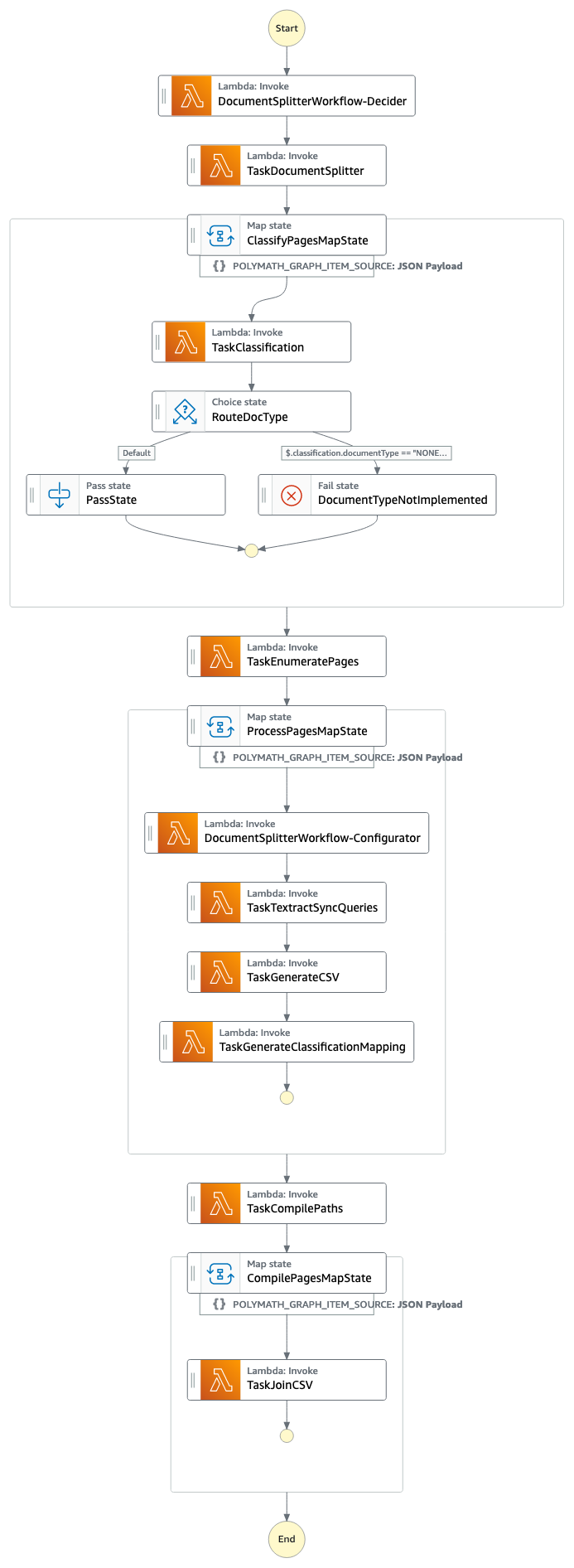

The solution is built using the AWS Cloud Development Kit (AWS CDK), and consists of Amazon Comprehend for document classification, Amazon Textract for document extraction, Amazon DynamoDB for storage, AWS Lambda for application logic, and AWS Step Functions for workflow pipeline orchestration.

The pipeline consists of the following phases:

- Split the document packages and classify each form type using Amazon Comprehend.

- Run the processing pipelines for each form type or page of form with the appropriate Amazon Textract API (Signature Detection, Table Extraction, Forms Extraction, or Queries).

- Perform postprocessing of the Amazon Textract output into machine-readable format.
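To make the first phase more concrete, the following is a minimal sketch of how per-page classification labels could be grouped into separate forms. It is an illustration only: the function name `group_pages_by_class` is hypothetical, the labels are hard-coded rather than returned by Amazon Comprehend, and the constructs used in this post may split packages differently.

```python
from itertools import groupby
from typing import List, Tuple


def group_pages_by_class(page_classes: List[str]) -> List[Tuple[str, List[int]]]:
    """Group consecutive pages that share a predicted class.

    page_classes[i] is the (assumed) classifier label for page i + 1.
    Returns (label, [page numbers]) tuples in document order.
    """
    groups = []
    page_number = 1
    for label, run in groupby(page_classes):
        count = len(list(run))
        groups.append((label, list(range(page_number, page_number + count))))
        page_number += count
    return groups


# Illustrative labels only; real labels would come from the Comprehend endpoint.
example_labels = ["acord125", "acord125", "acord126", "property_affidavit"]
for label, pages in group_pages_by_class(example_labels):
    print(label, pages)
```

Each resulting group can then be routed to the processing pipeline that matches its form type.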

The following screenshot of the Step Functions workflow illustrates the pipeline.

Prerequisites

To get started with the solution, ensure you have the following:

- AWS CDK version 2 installed

- Docker installed and running on your machine

- Appropriate access to Step Functions, DynamoDB, Lambda, Amazon Simple Queue Service (Amazon SQS), Amazon Textract, and Amazon Comprehend

Clone the GitHub repo

Begin by cloning the GitHub repository:

Create an Amazon Comprehend classification endpoint

We first need to provide an Amazon Comprehend classification endpoint.

For this post, the endpoint detects the following document classes (ensure naming is consistent):

- acord125

- acord126

- acord140

- property_affidavit

You can create one by using the comprehend_acord_dataset.csv sample dataset in the GitHub repository. To train and create a custom classification endpoint using the sample dataset provided, follow the instructions in Train custom classifiers. If you would like to use your own PDF files, refer to the first workflow in the post Intelligently split multi-form document packages with Amazon Textract and Amazon Comprehend.

After training your classifier and creating an endpoint, you should have an Amazon Comprehend custom classification endpoint ARN that looks like the following code:
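As a rough illustration of that shape, Comprehend custom classification endpoint ARNs generally follow the pattern `arn:aws:comprehend:<REGION>:<ACCOUNT_ID>:document-classifier-endpoint/<ENDPOINT_NAME>`. The sketch below is not part of the sample repository; it is a small, hypothetical helper (`looks_like_endpoint_arn`) that checks a string against that assumed pattern before you paste it into the workflow code. The Region, account ID, and endpoint name used in the example are placeholders.

```python
import re

# Assumed general shape of a Comprehend custom classification endpoint ARN.
# The pattern is a convenience check, not an official validation rule.
ENDPOINT_ARN_PATTERN = re.compile(
    r"^arn:aws[a-z-]*:comprehend:[a-z0-9-]+:\d{12}:"
    r"document-classifier-endpoint/[A-Za-z0-9-]+$"
)


def looks_like_endpoint_arn(arn: str) -> bool:
    """Return True if the string has the assumed endpoint ARN shape."""
    return ENDPOINT_ARN_PATTERN.match(arn) is not None


# Placeholder values for illustration only.
candidate = (
    "arn:aws:comprehend:us-east-1:123456789012:"
    "document-classifier-endpoint/example-endpoint"
)
print(looks_like_endpoint_arn(candidate))
```

A check like this catches a common mistake, which is copying the classifier (model) ARN instead of the endpoint ARN.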

Navigate to docsplitter/document_split_workflow.py and modify lines 27–28, which contain comprehend_classifier_endpoint. Enter your endpoint ARN in line 28.

Install dependencies

Now you install the project dependencies:

Initialize the account and Region for the AWS CDK. This creates the Amazon Simple Storage Service (Amazon S3) buckets and roles that the AWS CDK tool needs to store artifacts and deploy infrastructure. See the following code:

Deploy the AWS CDK stack

When the Amazon Comprehend classifier and document configuration table are ready, deploy the stack using the following code:

Upload the document

Verify that the stack is fully deployed.

Then in the terminal window, run the aws s3 cp command to upload the document to the DocumentUploadLocation for the DocumentSplitterWorkflow:
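The sketch below shows one way to look up the upload location and assemble the copy command. It rests on several assumptions that are not confirmed by this post: that the stack outputs are available as JSON keyed by stack name and then output name (the layout written by `cdk deploy --outputs-file`), that the stack is named DocumentSplitterWorkflow, and that the DocumentUploadLocation value is an `s3://` URI. The bucket name and the file name `sample.pdf` are placeholders, and the helper names are hypothetical. The code only builds the argument list and does not run it.

```python
import json
from typing import Dict, List


def find_output(outputs: Dict[str, Dict[str, str]], stack: str, key: str) -> str:
    """Return one output value from a parsed CDK outputs file."""
    try:
        return outputs[stack][key]
    except KeyError as exc:
        raise KeyError(f"output {key!r} not found for stack {stack!r}") from exc


def build_upload_command(local_path: str, upload_location: str) -> List[str]:
    """Build (but do not run) the argument list for `aws s3 cp`."""
    if not upload_location.startswith("s3://"):
        raise ValueError("expected an s3:// URI")
    return ["aws", "s3", "cp", local_path, upload_location]


# Placeholder content standing in for the real outputs file.
sample_outputs = json.loads(
    '{"DocumentSplitterWorkflow": '
    '{"DocumentUploadLocation": "s3://example-bucket/uploads/"}}'
)
location = find_output(
    sample_outputs, "DocumentSplitterWorkflow", "DocumentUploadLocation"
)
print(" ".join(build_upload_command("sample.pdf", location)))
```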

We have created a sample 12-page document package that contains the Acord 125, Acord 126, Acord 140, and Property Affidavit forms. The following images show a 1-page excerpt from each document.

All data in the forms is synthetic. The Acord standard forms are the property of the Acord Corporation and are used here for demonstration only.

Run the Step Functions workflow

Now open the Step Functions workflow. You can get the Step Functions workflow link from the document_splitter_outputs.json file, the Step Functions console, or by using the following command:

Depending on the size of the document package, the workflow time will vary. The sample document should take 1–2 minutes to process. The following diagram illustrates the Step Functions workflow.

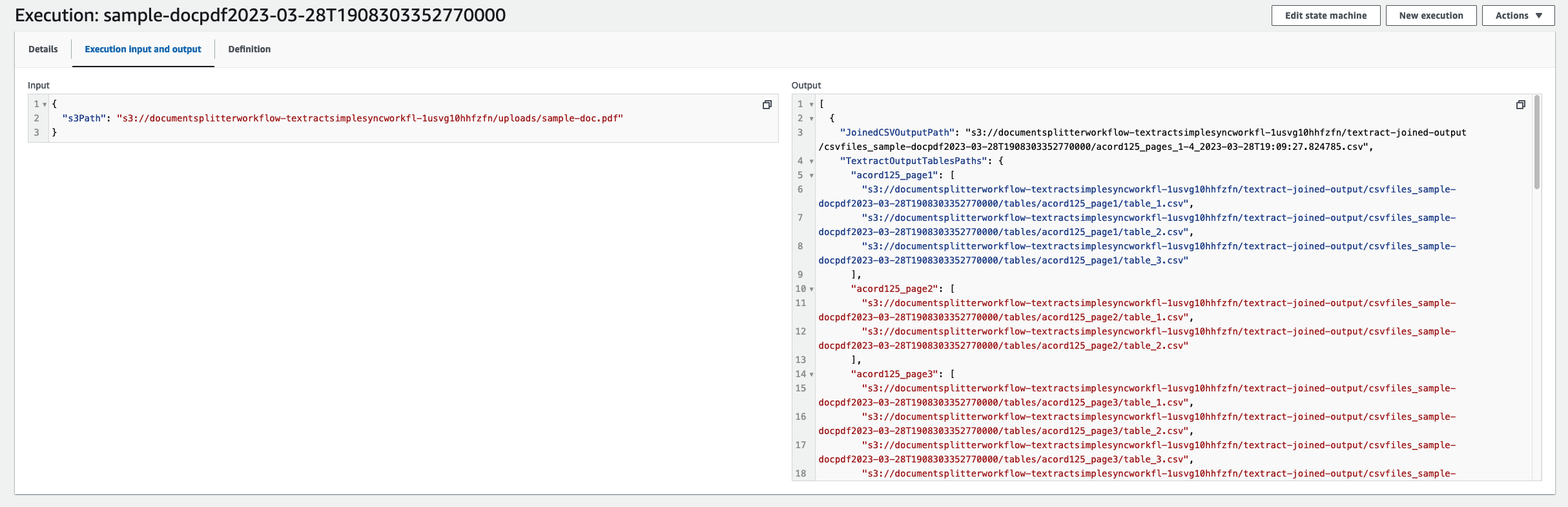

When your job is complete, navigate to the input and output code. From here you will see the machine-readable CSV files for each of the respective forms.

To download these files, open getfiles.py. Set files to be the list outputted by the state machine run. You can run this function by running python3 getfiles.py. This generates the csvfiles_<TIMESTAMP> folder, as shown in the following screenshot.
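The contents of getfiles.py are not shown in this post, so the following is only a sketch of the kind of bookkeeping such a script needs. It assumes the state machine output is a list of `s3://` URIs and that the timestamp is a plain string; both are assumptions, and the helper names `split_s3_uri` and `local_target` are hypothetical. The actual download (for example with an AWS SDK) is deliberately left out.

```python
from pathlib import PurePosixPath
from typing import Tuple
from urllib.parse import urlparse


def split_s3_uri(uri: str) -> Tuple[str, str]:
    """Split an s3://bucket/key URI into (bucket, key)."""
    parsed = urlparse(uri)
    if parsed.scheme != "s3" or not parsed.netloc:
        raise ValueError(f"not an S3 URI: {uri!r}")
    return parsed.netloc, parsed.path.lstrip("/")


def local_target(uri: str, timestamp: str) -> str:
    """Map an S3 object to a path under the csvfiles_<TIMESTAMP> folder."""
    _, key = split_s3_uri(uri)
    return f"csvfiles_{timestamp}/{PurePosixPath(key).name}"


# Placeholder URI and timestamp for illustration only.
files = ["s3://example-bucket/csv-output/acord125.csv"]
for uri in files:
    print(split_s3_uri(uri), "->", local_target(uri, "20230101T000000"))
```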

Congratulations, you have now implemented an end-to-end processing workflow for a commercial insurance application.

Extend the solution for any type of form

In this post, we demonstrated how we could use the Amazon Textract IDP CDK Constructs for a commercial insurance use case. However, you can extend these constructs for any form type. To do this, we first retrain our Amazon Comprehend classifier to account for the new form type, and adjust the code as we did earlier.

For each of the form types you trained, we must specify its queries and textract_features in the generate_csv.py file. This customizes each form type’s processing pipeline by using the appropriate Amazon Textract API.

queries is a list of queries. For example, “What is the primary email address?” on page 2 of the sample document. For more information, see Queries.

textract_features is a list of the Amazon Textract features you want to extract from the document. It can be TABLES, FORMS, QUERIES, or SIGNATURES. For more information, see FeatureTypes.
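To show how these two settings relate to an Amazon Textract request, here is a minimal sketch that builds the keyword arguments for the `AnalyzeDocument` API (`Document`, `FeatureTypes`, and `QueriesConfig`). The constructs in this post make the Textract calls for you and may use a different (for example, asynchronous) API; the helper name `build_analyze_document_kwargs`, the bucket, and the object name are hypothetical. The code only builds a dictionary and does not call AWS.

```python
from typing import Any, Dict, List, Optional

# Feature names as listed in this post.
ALLOWED_FEATURES = {"TABLES", "FORMS", "QUERIES", "SIGNATURES"}


def build_analyze_document_kwargs(
    bucket: str,
    name: str,
    textract_features: List[str],
    queries: Optional[List[str]] = None,
) -> Dict[str, Any]:
    """Build request arguments in the shape AnalyzeDocument expects."""
    unknown = set(textract_features) - ALLOWED_FEATURES
    if unknown:
        raise ValueError(f"unsupported features: {sorted(unknown)}")
    kwargs: Dict[str, Any] = {
        "Document": {"S3Object": {"Bucket": bucket, "Name": name}},
        "FeatureTypes": list(textract_features),
    }
    if "QUERIES" in textract_features:
        # The QUERIES feature needs at least one query to run.
        if not queries:
            raise ValueError("QUERIES requires at least one query")
        kwargs["QueriesConfig"] = {"Queries": [{"Text": q} for q in queries]}
    return kwargs


# Placeholder bucket and object name for illustration only.
request = build_analyze_document_kwargs(
    "example-bucket",
    "acord125-page2.pdf",
    ["FORMS", "QUERIES"],
    queries=["What is the primary email address?"],
)
print(request)
```

With boto3, a dictionary like this could be passed as `client.analyze_document(**request)`, assuming credentials and permissions are in place.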

Navigate to generate_csv.py. Each document type needs its classification, queries, and textract_features configured by creating CSVRow instances.

For our example we have four document types: acord125, acord126, acord140, and property_affidavit. We want to use the FORMS and TABLES features on the Acord documents, and the QUERIES and SIGNATURES features for the property affidavit.
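The real CSVRow class and its signature live in the GitHub repository and are not reproduced here. As a stand-in, the sketch below uses a hypothetical `FormConfig` dataclass to express the same mapping of document type to features. The affidavit query text is a placeholder, not a query from the sample solution.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class FormConfig:
    """Hypothetical stand-in for one configuration row per document type."""
    classification: str
    textract_features: List[str]
    queries: List[str] = field(default_factory=list)


CONFIGS = [
    FormConfig("acord125", ["FORMS", "TABLES"]),
    FormConfig("acord126", ["FORMS", "TABLES"]),
    FormConfig("acord140", ["FORMS", "TABLES"]),
    FormConfig(
        "property_affidavit",
        ["QUERIES", "SIGNATURES"],
        # Placeholder; replace with the questions you want answered.
        queries=["<your question about the affidavit>"],
    ),
]


def features_for(classification: str) -> List[str]:
    """Look up the Textract features configured for a document class."""
    for config in CONFIGS:
        if config.classification == classification:
            return config.textract_features
    raise KeyError(classification)


print(features_for("acord140"))
print(features_for("property_affidavit"))
```

Keeping the classification names identical to the classifier’s class labels matters here, because the lookup is an exact string match.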

Refer to the GitHub repository for how this was done for the sample commercial insurance documents.

Clean up

To remove the solution, run the cdk destroy command. You will then be prompted to confirm the deletion of the workflow. Deleting the workflow will delete all the generated resources.

Conclusion

In this post, we demonstrated how you can get started with Amazon Textract IDP CDK Constructs by implementing a straight-through processing scenario for a set of commercial Acord forms. We also demonstrated how you can extend the solution to any form type with simple configuration changes. We encourage you to try the solution with your respective documents. Please raise a pull request to the GitHub repo for any feature requests you may have. To learn more about IDP on AWS, refer to our documentation.

About the Authors

Raj Pathak is a Senior Solutions Architect and Technologist specializing in Financial Services (Insurance, Banking, Capital Markets) and Machine Learning. He focuses on Natural Language Processing (NLP), Large Language Models (LLM), and Machine Learning infrastructure and operations projects (MLOps).

Aditi Rajnish is a second-year software engineering student at University of Waterloo. Her interests include computer vision, natural language processing, and edge computing. She is also passionate about community-based STEM outreach and advocacy. In her spare time, she can be found mountain climbing, playing the piano, or learning how to bake the perfect scone.

Enzo Staton is a Solutions Architect with a passion for working with companies to increase their cloud knowledge. He works closely as a trusted advisor and industry specialist with customers around the country.
