With Amazon Rekognition Custom Labels, you can have Amazon Rekognition train a custom model for object detection or image classification specific to your business needs. For example, Rekognition Custom Labels can find your logo in social media posts, identify your products on store shelves, classify machine parts in an assembly line, distinguish healthy and infected plants, or detect animated characters in videos.

Developing a custom model to analyze images is a significant undertaking that requires time, expertise, and resources, often taking months to complete. Additionally, it often requires thousands or tens of thousands of hand-labeled images to provide the model with enough data to make accurate decisions. Generating this data can take months and require large teams of labelers to prepare it for use in machine learning (ML).

With Rekognition Custom Labels, we take care of the heavy lifting for you. Rekognition Custom Labels builds on the existing capabilities of Amazon Rekognition, which is already trained on tens of millions of images across many categories. Instead of thousands of images, you only need to upload a small set of training images (typically a few hundred images or fewer) that are specific to your use case through the easy-to-use console. If your images are already labeled, Amazon Rekognition can begin training in just a few clicks. If not, you can label them directly within the Amazon Rekognition labeling interface, or use Amazon SageMaker Ground Truth to label them for you. After Amazon Rekognition begins training from your image set, it produces a custom image analysis model for you in just a few hours. Behind the scenes, Rekognition Custom Labels automatically loads and inspects the training data, selects the right ML algorithms, trains a model, and provides model performance metrics. You can then use your custom model through the Rekognition Custom Labels API and integrate it into your applications.

However, building a Rekognition Custom Labels model and hosting it for real-time predictions involves several steps: creating a project, creating the training and validation datasets, training the model, evaluating the model, and then creating an endpoint. After the model is deployed for inference, you might need to retrain it when new data becomes available or when feedback arrives from real-world inference. Automating the whole workflow can help reduce manual work.

In this post, we show how you can use AWS Step Functions to build and automate the workflow. Step Functions is a visual workflow service that helps developers use AWS services to build distributed applications, automate processes, orchestrate microservices, and create data and ML pipelines.
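To make the shape of such a workflow concrete, here is a hypothetical skeleton of a state machine in the Amazon States Language, built as a Python dictionary. It chains the steps listed earlier (create a project, create datasets, train, evaluate, start the model) through the Step Functions AWS SDK service integrations. This is not the definition from the post's repository: the execution input fields (`projectName`, `trainDatasetArn`, `testDatasetArn`), the output bucket, the 0.8 F1 threshold, and the fixed wait times are all assumptions. A production workflow would also poll `DescribeDataset` instead of pausing for a fixed interval.

```python
import json

# Hypothetical settings: substitute your own bucket and evaluation threshold.
OUTPUT_BUCKET = "my-training-output-bucket"
F1_THRESHOLD = 0.8
SDK = "arn:aws:states:::aws-sdk:rekognition:"  # AWS SDK service integration prefix
VERSION = "$.version.ProjectVersionDescriptions[0]"  # where DescribeModel stores its result
PROJECT_ARN = {"ProjectArn.$": "$.project.ProjectArn"}


def task(api: str, parameters: dict, **fields) -> dict:
    """Task state that calls a Rekognition API through the AWS SDK integration."""
    return {"Type": "Task", "Resource": SDK + api, "Parameters": parameters, **fields}


def copy_dataset_branch(dataset_type: str, source_path: str) -> dict:
    """Parallel branch that copies an existing dataset into the new project as TRAIN or TEST."""
    name = f"Create{dataset_type.title()}Dataset"
    params = {**PROJECT_ARN, "DatasetType": dataset_type,
              "DatasetSource": {"DatasetArn.$": source_path}}
    return {"StartAt": name, "States": {name: task("createDataset", params, End=True)}}


definition = {
    "StartAt": "CreateProject",
    "States": {
        "CreateProject": task("createProject", {"ProjectName.$": "$.projectName"},
                              ResultPath="$.project", Next="CreateDatasets"),
        "CreateDatasets": {
            "Type": "Parallel",
            "Branches": [copy_dataset_branch("TRAIN", "$.trainDatasetArn"),
                         copy_dataset_branch("TEST", "$.testDatasetArn")],
            "ResultPath": "$.datasets",
            "Next": "WaitForDatasets",
        },
        # Simplification: a fixed pause instead of polling DescribeDataset.
        "WaitForDatasets": {"Type": "Wait", "Seconds": 120, "Next": "TrainModel"},
        "TrainModel": task(
            "createProjectVersion",
            {**PROJECT_ARN, "VersionName.$": "$$.Execution.Name",
             "OutputConfig": {"S3Bucket": OUTPUT_BUCKET, "S3KeyPrefix": "training-output/"}},
            ResultPath="$.training", Next="WaitForTraining"),
        "WaitForTraining": {"Type": "Wait", "Seconds": 600, "Next": "DescribeModel"},
        "DescribeModel": task(
            "describeProjectVersions",
            {**PROJECT_ARN, "VersionNames.$": "States.Array($$.Execution.Name)"},
            ResultPath="$.version", Next="CheckTrainingStatus"),
        "CheckTrainingStatus": {
            "Type": "Choice",
            "Choices": [
                {"Variable": VERSION + ".Status", "StringEquals": "TRAINING_COMPLETED",
                 "Next": "EvaluateF1"},
                {"Variable": VERSION + ".Status", "StringEquals": "TRAINING_FAILED",
                 "Next": "TrainingFailed"},
            ],
            "Default": "WaitForTraining",  # still training: wait, then check again
        },
        "EvaluateF1": {
            "Type": "Choice",
            "Choices": [{"Variable": VERSION + ".EvaluationResult.F1Score",
                         "NumericGreaterThanEquals": F1_THRESHOLD, "Next": "StartModel"}],
            "Default": "ModelRejected",
        },
        # "Creating an endpoint" for a Custom Labels model means starting it.
        "StartModel": task("startProjectVersion",
                           {"ProjectVersionArn.$": "$.training.ProjectVersionArn",
                            "MinInferenceUnits": 1}, End=True),
        "TrainingFailed": {"Type": "Fail", "Error": "TrainingFailed"},
        "ModelRejected": {"Type": "Fail", "Error": "F1BelowThreshold"},
    },
}

print(json.dumps(definition, indent=2))
```

You could register a definition like this with `boto3.client("stepfunctions").create_state_machine(name=..., definition=json.dumps(definition), roleArn=...)`, where the role grants the Rekognition and S3 permissions that the tasks need.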

Solution overview

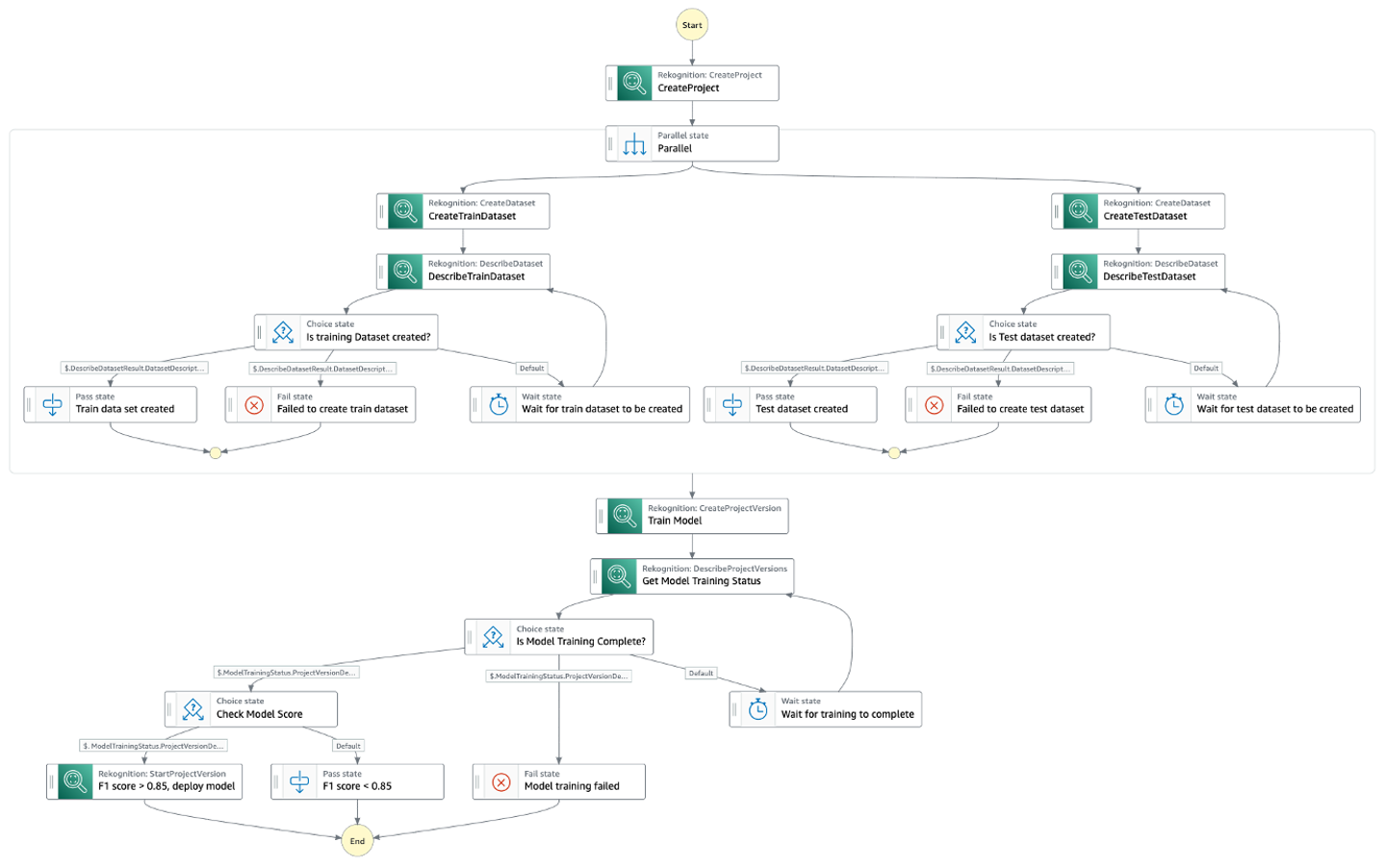

The Step Functions workflow is as follows:

- We first create an Amazon Rekognition project.

- In parallel, we create the training and validation datasets from existing datasets. We can use the following methods:

  - Import a folder structure from Amazon Simple Storage Service (Amazon S3) with the folders representing the labels.

  - Use a local computer.

  - Use Ground Truth.

  - Create a dataset using an existing dataset with the AWS SDK.

  - Create a dataset with a manifest file with the AWS SDK.

- After the datasets are created, we train a Custom Labels model using the CreateProjectVersion API. Training can take anywhere from minutes to hours to complete.

- After the model is trained, we evaluate it using the F1 score from the previous step's output. We use the F1 score as our evaluation metric because it provides a balance between precision and recall. You can also use precision or recall as your model evaluation metric. For more information on Custom Labels evaluation metrics, refer to Metrics for evaluating your model.

- If we're satisfied with the F1 score, we then start using the model for predictions.
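The F1 score used in the evaluation step is the harmonic mean of precision P and recall R, F1 = 2PR / (P + R), so it stays low unless both are reasonably high. The following minimal sketch implements that formula and a gate over one entry of a `DescribeProjectVersions` response, which reports the score under `EvaluationResult.F1Score`. The function names and the 0.8 default threshold are hypothetical choices, not values from the post.

```python
def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall (0.0 when both are zero)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


def passes_evaluation(version: dict, threshold: float = 0.8) -> bool:
    """Gate one ProjectVersionDescriptions entry from DescribeProjectVersions on its F1 score."""
    if version.get("Status") != "TRAINING_COMPLETED":
        return False  # failed or unfinished training never passes
    f1 = version.get("EvaluationResult", {}).get("F1Score")
    return f1 is not None and f1 >= threshold
```

The harmonic mean is what makes F1 a balanced metric. For example, a precision of 1.0 with a recall of 0.1 gives an F1 of about 0.18, while a simple average would report 0.55.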

The following diagram illustrates the Step Functions workflow.

Prerequisites

Before deploying the workflow, we need to create the source training and validation datasets that the workflow copies. Complete the following steps:

- First, create an Amazon Rekognition project.

- Then, create the training and validation datasets.

- Lastly, install the AWS SAM CLI.
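If you prefer to script the first two prerequisites instead of using the console, the following sketch uses the AWS SDK for Python (Boto3) to create a project and then a TRAIN and a TEST dataset (Rekognition's name for the validation set) from SageMaker Ground Truth manifest files in Amazon S3. The project name, bucket, and manifest keys are placeholders. The optional `client` parameter exists only so the function can be exercised without AWS credentials.

```python
from typing import Any, Dict, Optional


def create_project_with_datasets(
    project_name: str,
    bucket: str,
    train_manifest_key: str,
    test_manifest_key: str,
    client: Optional[Any] = None,
) -> Dict[str, Any]:
    """Create a Custom Labels project plus TRAIN and TEST datasets from Ground Truth manifests."""
    if client is None:
        import boto3  # lazy import so a stub client can be used in tests
        client = boto3.client("rekognition")
    project_arn = client.create_project(ProjectName=project_name)["ProjectArn"]
    dataset_arns = {}
    # Rekognition calls the validation set the TEST dataset.
    for dataset_type, key in (("TRAIN", train_manifest_key), ("TEST", test_manifest_key)):
        response = client.create_dataset(
            ProjectArn=project_arn,
            DatasetType=dataset_type,
            DatasetSource={"GroundTruthManifest": {"S3Object": {"Bucket": bucket, "Name": key}}},
        )
        dataset_arns[dataset_type] = response["DatasetArn"]
    # Creation is asynchronous: poll describe_dataset until Status is CREATE_COMPLETE.
    return {"ProjectArn": project_arn, "DatasetArns": dataset_arns}
```

Because dataset creation runs asynchronously, check `describe_dataset(DatasetArn=...)["DatasetDescription"]["Status"]` for each returned ARN before relying on the datasets.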

Deploy the workflow

To deploy the workflow, clone the GitHub repository:

These commands build, package, and deploy your application to AWS, with a series of prompts as explained in the repository.

Run the workflow

To test the workflow, navigate to the deployed workflow on the Step Functions console, then choose Start execution.

The workflow can take anywhere from a few minutes to a few hours to complete. If the model passes the evaluation criteria, an endpoint for the model is created in Amazon Rekognition. If the model doesn't pass the evaluation criteria or the training fails, the workflow fails. You can check the status of the workflow on the Step Functions console. For more information, refer to Viewing and debugging executions on the Step Functions console.
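The same run can also be started and monitored from code. This sketch calls the Step Functions `StartExecution` API and polls `DescribeExecution` until the execution reaches a terminal status. The shape of `workflow_input` depends on the state machine you deployed, so treat any field names you pass as assumptions. The injectable `client` and `sleep` parameters exist only to make the function testable offline.

```python
import json
import time
from typing import Any, Callable, Optional

# Terminal execution statuses reported by DescribeExecution.
TERMINAL_STATUSES = {"SUCCEEDED", "FAILED", "TIMED_OUT", "ABORTED"}


def run_workflow(
    state_machine_arn: str,
    workflow_input: dict,
    client: Optional[Any] = None,
    poll_seconds: float = 60.0,
    sleep: Callable[[float], None] = time.sleep,
) -> str:
    """Start a Step Functions execution and block until it finishes; return its status."""
    if client is None:
        import boto3  # lazy import so a stub client can be used in tests
        client = boto3.client("stepfunctions")
    execution_arn = client.start_execution(
        stateMachineArn=state_machine_arn,
        input=json.dumps(workflow_input),
    )["executionArn"]
    while True:
        status = client.describe_execution(executionArn=execution_arn)["status"]
        if status in TERMINAL_STATUSES:
            return status
        sleep(poll_seconds)  # training can take hours, so poll sparingly
```

A return value of `FAILED` corresponds to the failure cases described above (training failed or the model missed the evaluation criteria). The console's execution view shows which state failed.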

Perform model predictions

To perform predictions against the model, you can call the Amazon Rekognition DetectCustomLabels API. To invoke this API, the caller needs the necessary AWS Identity and Access Management (IAM) permissions. For more details on performing predictions with this API, refer to Analyzing an image with a trained model.
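As a minimal Boto3 sketch of such a call, the following function assumes the model is already running and the image is stored in an S3 bucket that the caller can read. The bucket, key, and 50 percent confidence floor are placeholders. The function returns the detected labels sorted from most to least confident.

```python
from typing import Any, List, Optional, Tuple


def detect_labels_in_s3_image(
    project_version_arn: str,
    bucket: str,
    key: str,
    min_confidence: float = 50.0,
    client: Optional[Any] = None,
) -> List[Tuple[str, float]]:
    """Call DetectCustomLabels on an image stored in S3; return (label, confidence) pairs."""
    if client is None:
        import boto3  # lazy import so a stub client can be used in tests
        client = boto3.client("rekognition")
    response = client.detect_custom_labels(
        ProjectVersionArn=project_version_arn,
        Image={"S3Object": {"Bucket": bucket, "Name": key}},
        MinConfidence=min_confidence,
    )
    # Each CustomLabels entry carries Name and Confidence; object detection
    # models also include a Geometry bounding box, which is ignored here.
    labels = [(label["Name"], label["Confidence"]) for label in response["CustomLabels"]]
    return sorted(labels, key=lambda pair: pair[1], reverse=True)
```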

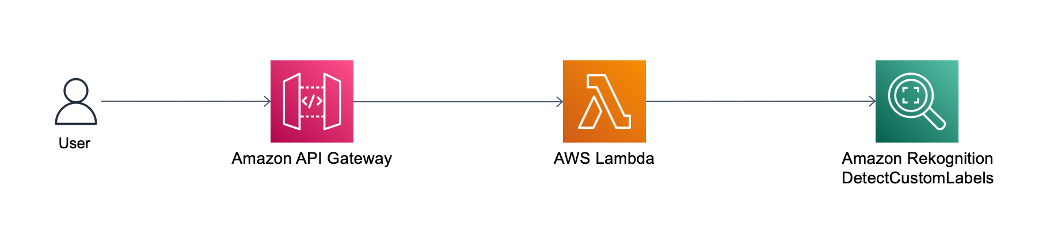

However, if you need to expose the DetectCustomLabels API publicly, you can front it with Amazon API Gateway. API Gateway is a fully managed service that makes it easy for developers to create, publish, maintain, monitor, and secure APIs at any scale. API Gateway acts as the front door for your DetectCustomLabels API, as shown in the following architecture diagram.

API Gateway forwards the user's inference request to AWS Lambda. Lambda is a serverless, event-driven compute service that lets you run code for virtually any type of application or backend service without provisioning or managing servers. Lambda receives the API request and calls the Amazon Rekognition DetectCustomLabels API with the necessary IAM permissions. For more information on how to set up API Gateway with Lambda integration, refer to Set up Lambda proxy integrations in API Gateway.

The following is example Lambda function code that calls the DetectCustomLabels API:
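A minimal sketch of such a handler for a Lambda proxy integration follows. It assumes a request body of the form `{"image": "<base64-encoded image bytes>"}` and reads the model ARN and confidence floor from hypothetical `PROJECT_VERSION_ARN` and `MIN_CONFIDENCE` environment variables. It returns the response shape (`statusCode`, `headers`, `body`) that API Gateway expects from a proxy integration. The Rekognition client is created once and reused across warm invocations.

```python
import base64
import json
import os
from typing import Any, Optional

_rekognition = None  # cached across warm invocations of the same Lambda environment


def _get_client() -> Any:
    global _rekognition
    if _rekognition is None:
        import boto3  # lazy import keeps the module importable without boto3
        _rekognition = boto3.client("rekognition")
    return _rekognition


def _response(status_code: int, payload: dict) -> dict:
    """Shape a Lambda proxy integration response for API Gateway."""
    return {
        "statusCode": status_code,
        "headers": {"Content-Type": "application/json"},
        "body": json.dumps(payload),
    }


def lambda_handler(event: dict, context: Any, client: Optional[Any] = None) -> dict:
    # Hypothetical configuration supplied through environment variables.
    model_arn = os.environ["PROJECT_VERSION_ARN"]
    min_confidence = float(os.environ.get("MIN_CONFIDENCE", "50"))
    try:
        # Assumed request shape: {"image": "<base64-encoded image bytes>"}
        body = json.loads(event.get("body") or "{}")
        image_bytes = base64.b64decode(body["image"], validate=True)
    except (KeyError, TypeError, ValueError):
        return _response(400, {"error": "Expected a JSON body with a base64-encoded 'image' field"})

    rekognition = client if client is not None else _get_client()
    # Errors such as a stopped model propagate and surface as a 5xx from API Gateway.
    result = rekognition.detect_custom_labels(
        ProjectVersionArn=model_arn,
        Image={"Bytes": image_bytes},
        MinConfidence=min_confidence,
    )
    labels = [{"name": label["Name"], "confidence": label["Confidence"]}
              for label in result["CustomLabels"]]
    return _response(200, {"labels": labels})
```

The Lambda execution role needs permission for `rekognition:DetectCustomLabels` on the model. Validating the payload before calling Rekognition lets malformed requests fail fast with a 400 instead of an opaque server error.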

Clean up

To delete the workflow, use the AWS SAM CLI:

To delete the Rekognition Custom Labels model, you can use either the Amazon Rekognition console or the AWS SDK. For more information, refer to Deleting an Amazon Rekognition Custom Labels model.
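With the SDK, a running model has to be stopped before it can be deleted. The following Boto3 sketch stops a model version if it is running, waits until it leaves any in-progress state, and then deletes it. The project ARN and version name are placeholders for your own values. After all of a project's models are deleted, the project itself can be removed with `delete_project`.

```python
import time
from typing import Any, Callable, Optional

# Statuses during which a model version can't be deleted yet.
BUSY_STATUSES = {"TRAINING_IN_PROGRESS", "STARTING", "RUNNING", "STOPPING"}


def delete_model_version(
    project_arn: str,
    version_name: str,
    client: Optional[Any] = None,
    poll_seconds: float = 30.0,
    sleep: Callable[[float], None] = time.sleep,
) -> str:
    """Stop a Custom Labels model if it is running, then delete it; return its ARN."""
    if client is None:
        import boto3  # lazy import so a stub client can be used in tests
        client = boto3.client("rekognition")

    def describe() -> tuple:
        response = client.describe_project_versions(
            ProjectArn=project_arn, VersionNames=[version_name]
        )
        version = response["ProjectVersionDescriptions"][0]
        return version["ProjectVersionArn"], version["Status"]

    arn, status = describe()
    stop_requested = False
    while status in BUSY_STATUSES:
        if status == "RUNNING" and not stop_requested:
            client.stop_project_version(ProjectVersionArn=arn)  # request the stop only once
            stop_requested = True
        sleep(poll_seconds)
        arn, status = describe()
    client.delete_project_version(ProjectVersionArn=arn)
    return arn
```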

Conclusion

In this post, we walked through a Step Functions workflow that creates datasets and then trains, evaluates, and deploys a Rekognition Custom Labels model. The workflow allows application developers and ML engineers to automate the Custom Labels steps for any computer vision use case. The code for the workflow is open source.

For more serverless learning resources, visit Serverless Land. To learn more about Rekognition Custom Labels, visit Amazon Rekognition Custom Labels.

About the Author

Veda Raman is a Senior Specialist Solutions Architect for machine learning based in Maryland. Veda works with customers to help them architect efficient, secure, and scalable machine learning applications. Veda is interested in helping customers leverage serverless technologies for machine learning.
