Large language models (LLMs) with billions of parameters are currently at the forefront of natural language processing (NLP). These models are shaking up the field with their remarkable abilities to generate text, analyze sentiment, translate languages, and much more. With access to massive amounts of data, LLMs have the potential to revolutionize the way we interact with language. Although LLMs can perform a wide range of NLP tasks, they are considered generalists rather than specialists. To make an LLM an expert in a particular domain, fine-tuning is usually required.

One of the major challenges in training and deploying LLMs with billions of parameters is their size, which can make it difficult to fit them onto a single GPU, the hardware commonly used for deep learning. The sheer scale of these models requires high-performance computing resources, such as specialized GPUs with large amounts of memory. Additionally, the size of these models makes them computationally expensive, which can significantly increase training and inference times.

In this post, we demonstrate how to use Amazon SageMaker JumpStart to fine-tune a large language text generation model on a domain-specific dataset, in the same way you would train and deploy any model on Amazon SageMaker. In particular, we show how to fine-tune the GPT-J 6B language model for financial text generation on a publicly available dataset of SEC filings. We do this using both the JumpStart SDK and the Amazon SageMaker Studio UI.

JumpStart helps you get started with machine learning (ML) quickly and easily. It provides a set of solutions for the most common use cases that can be trained and deployed in just a few steps. All the steps in this demo are available in the accompanying notebook Fine-tuning text generation GPT-J 6B model on a domain specific dataset.

Solution overview

In the following sections, we provide a step-by-step demonstration of fine-tuning an LLM for text generation tasks through both the JumpStart Studio UI and the Python SDK. In particular, we discuss the following topics:

- An overview of the SEC filing data in the financial domain that the model is fine-tuned on

- An overview of the GPT-J 6B LLM we have chosen to fine-tune

- A demonstration of two different ways we can fine-tune the LLM using JumpStart:

  - Use JumpStart programmatically with the SageMaker Python SDK

  - Access JumpStart using the Studio UI

- An evaluation of the fine-tuned model, comparing it with the pre-trained model without fine-tuning

Fine-tuning refers to the process of taking a pre-trained language model and training it on specific data for a different but related task. This approach is also known as transfer learning, because it transfers the knowledge learned on one task to another. LLMs like GPT-J 6B are trained on massive amounts of unlabeled data. They can then be fine-tuned on smaller datasets so that they perform better in a specific domain.

As an example of how performance improves when the model is fine-tuned, consider asking it the following question:

“What drives sales growth at Amazon?”

Without fine-tuning, the response would be:

“Amazon is the world’s largest online retailer. It is also the world’s largest online marketplace. It is also the world”

With fine-tuning, the response is:

“Sales growth at Amazon is driven primarily by increased customer usage, including increased selection, lower prices, and increased convenience, and increased sales by other sellers on our websites.”

The improvement from fine-tuning is evident.

We use financial text from SEC filings to fine-tune a GPT-J 6B LLM for financial applications. In the next sections, we introduce the data and the LLM that will be fine-tuned.

SEC filing dataset

SEC filings are critical for regulation and disclosure in finance. Filings inform the investor community about companies’ business conditions and future outlook. The text in SEC filings covers the entire gamut of a company’s operations and business conditions. Because of their potential predictive value, these filings are good sources of information for investors. Although SEC filings are publicly available to anyone, downloading parsed filings and constructing a clean dataset with added features is time-consuming. The JumpStart Industry SDK makes this possible in a few API calls.

Using the SageMaker API, we downloaded annual reports (10-K filings; see How to Read a 10-K for more information) for a large number of companies. We select Amazon’s SEC filing reports for the years 2021–2022 as the training data to fine-tune the GPT-J 6B model. Specifically, we concatenate the company’s SEC filing reports from the different years into a single text file. The exception is the “Management Discussion and Analysis” section, which contains forward-looking statements by the company’s management; it is used as the validation data.
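The split described above can be sketched in a few lines of plain Python. This is a minimal illustration under stated assumptions, not the notebook's code:

- It assumes each 10-K has already been parsed into a dictionary mapping section names to text.
- The key mdna for the Management Discussion and Analysis section is a hypothetical name.
- Writing each channel to its own folder mirrors the separate train_dataset_s3_path and validation_dataset_s3_path locations used later.

```python
from pathlib import Path

# Hypothetical section key for "Management Discussion and Analysis";
# the parsed dataset you download may use a different name.
VALIDATION_SECTION = "mdna"


def split_filings(filings, validation_section=VALIDATION_SECTION):
    """Split parsed 10-K filings into training and validation text.

    filings: list of dicts, one per annual report, mapping section name -> text.
    The validation section of every report goes to the validation text; all
    other sections are concatenated, in order, into the training text.
    """
    train_parts, validation_parts = [], []
    for filing in filings:
        for section, text in filing.items():
            if section == validation_section:
                validation_parts.append(text)
            else:
                train_parts.append(text)
    return "\n\n".join(train_parts), "\n\n".join(validation_parts)


def write_channels(train_text, validation_text, out_dir):
    """Write train/train.txt and validation/validation.txt under out_dir."""
    paths = []
    for channel, text in (("train", train_text), ("validation", validation_text)):
        folder = Path(out_dir) / channel
        folder.mkdir(parents=True, exist_ok=True)
        path = folder / f"{channel}.txt"
        path.write_text(text, encoding="utf-8")
        paths.append(path)
    return paths
```

Each folder would then be uploaded to its own S3 prefix, for example with sagemaker.Session().upload_data.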

The expectation is that, after fine-tuning on the financial SEC documents, the GPT-J 6B text generation model can generate insightful finance-related text. It can therefore be used to solve multiple domain-specific NLP tasks.

GPT-J 6B large language model

GPT-J 6B is an open-source, 6-billion-parameter model released by EleutherAI. It has been trained on a large corpus of text data and can perform various NLP tasks such as text generation, text classification, and text summarization. The model is impressive on many NLP tasks without any fine-tuning. In many cases, however, you will need to fine-tune it on a specific dataset for the NLP task you are trying to solve. Use cases include custom chatbots, idea generation, entity extraction, classification, and sentiment analysis.

Access LLMs on SageMaker

Now that we have identified the dataset and the model we are going to fine-tune, JumpStart offers two ways to get started with text generation fine-tuning: the SageMaker SDK and Studio.

Use JumpStart programmatically with the SageMaker SDK

We now walk through an example of how to use the SageMaker JumpStart SDK to access an LLM (GPT-J 6B) and fine-tune it on the SEC filing dataset. When fine-tuning is complete, we deploy the fine-tuned model and run inference against it. All the steps in this post are available in the accompanying notebook: Fine-tuning text generation GPT-J 6B model on domain specific dataset.

In this example, JumpStart uses the SageMaker Hugging Face Deep Learning Container (DLC) and the DeepSpeed library to fine-tune the model. DeepSpeed is designed to reduce computing power and memory use and to train large distributed models with better parallelism on existing hardware. It supports single-node distributed training, using gradient checkpointing and model parallelism to train large models on a single SageMaker training instance with multiple GPUs. JumpStart integrates DeepSpeed with the SageMaker Hugging Face DLC for you and takes care of everything under the hood. You can therefore fine-tune the model on your domain-specific dataset without setting this up manually.

Fine-tune the pre-trained model on domain-specific data

To fine-tune a specific model, we need that model’s URI, as well as the training script and the container image used for training. To keep things simple, these three inputs depend only on:

- the model name
- the model version (for a list of the available models, see Built-in Algorithms with pre-trained Model Table)
- the type of instance you want to train on

This is demonstrated in the following code snippet:

We retrieve the model_id corresponding to the model we want to use. In this case, we fine-tune huggingface-textgeneration1-gpt-j-6b.
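A minimal sketch of this retrieval step uses the SageMaker Python SDK's JumpStart helpers image_uris, script_uris, and model_uris. Some values are assumptions for illustration; check the notebook for the values it actually uses:

- the model version "*" (latest)
- the training instance type ml.g5.12xlarge

The SDK is imported inside the function, so the snippet can be loaded without AWS credentials. The function name retrieve_training_artifacts is hypothetical.

```python
MODEL_ID = "huggingface-textgeneration1-gpt-j-6b"
MODEL_VERSION = "*"  # assumption: "*" selects the latest JumpStart version
TRAINING_INSTANCE_TYPE = "ml.g5.12xlarge"  # assumption: a multi-GPU instance


def retrieve_training_artifacts(
    model_id=MODEL_ID,
    model_version=MODEL_VERSION,
    instance_type=TRAINING_INSTANCE_TYPE,
):
    """Return (image_uri, source_uri, model_uri) for JumpStart fine-tuning."""
    # Imported lazily so this module loads without the SageMaker SDK installed.
    from sagemaker import image_uris, model_uris, script_uris

    # Training container image (the Hugging Face DLC described in the post).
    image_uri = image_uris.retrieve(
        region=None,
        framework=None,
        model_id=model_id,
        model_version=model_version,
        image_scope="training",
        instance_type=instance_type,
    )
    # Training script bundle (contains transfer_learning.py).
    source_uri = script_uris.retrieve(
        model_id=model_id, model_version=model_version, script_scope="training"
    )
    # Pre-trained model artifacts that fine-tuning starts from.
    model_uri = model_uris.retrieve(
        model_id=model_id, model_version=model_version, model_scope="training"
    )
    return image_uri, source_uri, model_uri
```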

Defining hyperparameters means setting the values of the parameters that control the training process of an ML model. These parameters can affect the model’s performance and accuracy. In the following step, we start from the default settings and specify custom values for parameters such as epochs and learning_rate:
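With the SDK, the defaults come from sagemaker.hyperparameters.retrieve_default(model_id=..., model_version=...). The sketch below shows only the override step, applied to a stand-in dictionary so that it runs without AWS access. The default values in the usage lines are placeholders, not JumpStart's actual defaults, and the helper apply_overrides is hypothetical. Values are kept as strings because SageMaker training jobs store hyperparameters as a string-to-string map.

```python
def apply_overrides(defaults, **overrides):
    """Return a copy of defaults with overrides applied, all values as strings.

    Unknown names raise KeyError, so a typo such as "learning_rte" fails
    loudly instead of possibly being ignored by the training script.
    """
    merged = dict(defaults)
    for name, value in overrides.items():
        if name not in merged:
            raise KeyError(f"unknown hyperparameter: {name!r}")
        merged[name] = str(value)
    return merged


# Placeholder defaults standing in for retrieve_default(...); not real values.
defaults = {"epochs": "1", "learning_rate": "5e-05", "fp16": "True"}
hyperparameters = apply_overrides(defaults, epochs=3, learning_rate=1e-4)
print(hyperparameters)  # {'epochs': '3', 'learning_rate': '0.0001', 'fp16': 'True'}
```

Rejecting unknown names is a deliberate strictness choice; drop the check if you need to pass a hyperparameter that the defaults do not list.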

JumpStart exposes an extensive list of tunable hyperparameters. The following list gives an overview of some of the key hyperparameters used in fine-tuning the model. For the full list, see the notebook Fine-tuning text generation GPT-J 6B model on domain specific dataset.

- epochs – Specifies the maximum number of epochs (passes over the original dataset) to iterate.

- learning_rate – Controls the step size (learning rate) of the optimization algorithm during training.

- eval_steps – Specifies how many steps to run between evaluations of the model on the validation set during training. The validation set is a subset of the data that is not used for training; instead, it is used to check the model’s performance on unseen data.

- weight_decay – Controls the regularization strength during model training. Regularization is a technique that helps prevent the model from overfitting the training data, which can result in better performance on unseen data.

- fp16 – Controls whether to use 16-bit (mixed) precision training instead of 32-bit training.

- evaluation_strategy – The evaluation strategy used during training (in the Hugging Face Trainer, one of no, steps, or epoch).

- gradient_accumulation_steps – The number of steps over which gradients are accumulated before the optimizer performs an update.

For more details about these hyperparameters, refer to the official Hugging Face Trainer documentation.
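Gradient accumulation and eval_steps interact in a way that is easy to check with arithmetic. In the Hugging Face Trainer, eval_steps counts optimizer updates, not forward passes. Each update therefore reflects the per-device batch size times the accumulation steps times the number of data-parallel workers.

The sketch below makes that concrete under two assumptions. First, num_gpus means the number of data-parallel workers. Second, per_device_batch_size corresponds to the Trainer argument per_device_train_batch_size; this post does not say whether JumpStart exposes it under that name. The numbers in the usage lines are illustrative.

```python
def effective_batch_size(per_device_batch_size, gradient_accumulation_steps, num_gpus):
    """Number of training examples that contribute to one optimizer update."""
    return per_device_batch_size * gradient_accumulation_steps * num_gpus


def examples_between_evaluations(eval_steps, per_device_batch_size,
                                 gradient_accumulation_steps, num_gpus):
    """Training examples processed between two evaluations (strategy "steps")."""
    return eval_steps * effective_batch_size(
        per_device_batch_size, gradient_accumulation_steps, num_gpus
    )


# Illustrative numbers: 4 GPUs, 2 examples per GPU, 4 accumulation steps.
print(effective_batch_size(2, 4, 4))              # 32
print(examples_between_evaluations(20, 2, 4, 4))  # 640
```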

You can now fine-tune this JumpStart model on your own custom dataset using the SageMaker SDK. We use the SEC filing data described earlier. The training and validation data are hosted under train_dataset_s3_path and validation_dataset_s3_path. The supported data formats are CSV, JSON, and TXT. For CSV and JSON data, the text is taken from the column named text, or from the first column if no column named text is found. Because this is text generation fine-tuning, no ground truth labels are required. The following code is an SDK example of how to fine-tune the model:
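The following is a minimal sketch of this step, not the notebook's exact code. It assumes the following, and the helper names build_estimator_kwargs and fine_tune are hypothetical:

- The image, script, and model URIs have been retrieved as described above.
- role is an IAM role ARN with SageMaker permissions.
- output_path is an S3 prefix you own.

The keyword arguments are built by a pure function so they can be inspected without AWS access.

```python
TRANSFER_LEARNING_SCRIPT = "transfer_learning.py"  # entry point named in this post


def build_estimator_kwargs(role, image_uri, source_uri, model_uri,
                           hyperparameters, output_path, instance_type):
    """Keyword arguments for sagemaker.estimator.Estimator (no AWS calls)."""
    return {
        "role": role,
        "image_uri": image_uri,
        "source_dir": source_uri,
        "model_uri": model_uri,
        "entry_point": TRANSFER_LEARNING_SCRIPT,
        "instance_count": 1,  # single node; training uses its multiple GPUs
        "instance_type": instance_type,
        "hyperparameters": hyperparameters,
        "output_path": output_path,
    }


def fine_tune(train_dataset_s3_path, validation_dataset_s3_path, **estimator_args):
    """Start a fine-tuning job; needs the SageMaker SDK and AWS credentials."""
    from sagemaker.estimator import Estimator

    estimator = Estimator(**build_estimator_kwargs(**estimator_args))
    # The input channels must be named "train" and "validation".
    estimator.fit(
        {"train": train_dataset_s3_path, "validation": validation_dataset_s3_path},
        logs=True,
    )
    return estimator
```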

With the hyperparameters defined, we instantiate a SageMaker Estimator and call its .fit method to start fine-tuning. We pass it the Amazon Simple Storage Service (Amazon S3) URIs for our training and validation data. The entry_point script is named transfer_learning.py (the same for other tasks and models), and the input data channels passed to .fit must be named train and validation.
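Before uploading data to the train and validation channels, it can help to check locally which column will be read as text. The sketch below mirrors the column rule described earlier for CSV data: a column named text, otherwise the first column. It illustrates that rule only; it is not JumpStart's transfer_learning.py. It assumes the CSV content has a header row, and the helper names are hypothetical.

```python
import csv
import io


def text_column_index(header):
    """Index of the column named "text", or 0 (the first column) if absent."""
    return header.index("text") if "text" in header else 0


def read_texts_from_csv(csv_content):
    """Return the training texts from CSV content that starts with a header row."""
    rows = list(csv.reader(io.StringIO(csv_content)))
    if not rows:
        return []
    header, body = rows[0], rows[1:]
    column = text_column_index(header)
    return [row[column] for row in body if row]  # skip blank lines


print(read_texts_from_csv("id,text\n1,hello\n2,world\n"))  # ['hello', 'world']
print(read_texts_from_csv("body,id\nfoo,1\n"))             # ['foo']
```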

JumpStart also supports hyperparameter optimization with SageMaker automatic model tuning. For details, see the example notebook.

Deploy the fine-tuned model

When training is complete, you can deploy your fine-tuned model. To do so, we need only two things:

- the inference script URI (the code that determines how the model is used for inference once deployed)
- the inference container image URI, which includes an appropriate model server to host the model we chose

See the following code:
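A minimal deployment sketch follows, assuming estimator is the object returned after .fit completes. It also assumes the entry point name inference.py, which JumpStart example notebooks use, and the instance type ml.g5.12xlarge; both are assumptions to verify against the notebook. The helper names build_deploy_kwargs and deploy_fine_tuned are hypothetical. The kwargs builder is pure, so it can be checked without AWS access.

```python
def build_deploy_kwargs(instance_type, image_uri, source_uri, endpoint_name=None):
    """Keyword arguments for Estimator.deploy (no AWS calls)."""
    return {
        "initial_instance_count": 1,
        "instance_type": instance_type,
        "entry_point": "inference.py",  # assumption: JumpStart inference script name
        "image_uri": image_uri,
        "source_dir": source_uri,
        "endpoint_name": endpoint_name,  # None lets SageMaker generate a name
    }


def deploy_fine_tuned(estimator, model_id, model_version="*",
                      instance_type="ml.g5.12xlarge", endpoint_name=None):
    """Deploy a fine-tuned JumpStart estimator; needs the SDK and AWS credentials."""
    from sagemaker import image_uris, script_uris

    # Inference container image with a model server for this model.
    image_uri = image_uris.retrieve(
        region=None,
        framework=None,
        image_scope="inference",
        model_id=model_id,
        model_version=model_version,
        instance_type=instance_type,
    )
    # Inference script bundle that defines how requests are handled.
    source_uri = script_uris.retrieve(
        model_id=model_id, model_version=model_version, script_scope="inference"
    )
    return estimator.deploy(
        **build_deploy_kwargs(instance_type, image_uri, source_uri, endpoint_name)
    )
```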

After a few minutes, the model is deployed and we can get predictions from it in real time!
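Once the endpoint is up, you can send it requests with the AWS SDK for Python (boto3). In the sketch below, the JSON field names text_inputs (request) and generated_texts (response) are assumptions based on JumpStart text generation examples. They may differ between model versions, so confirm them in the model's example notebook. The generation parameters are the ones described in the evaluation section, and the helper names are hypothetical.

```python
import json


def build_payload(prompt, **generation_params):
    """Encode the JSON request body; the text_inputs field name is an assumption."""
    return json.dumps({"text_inputs": prompt, **generation_params}).encode("utf-8")


def parse_generated_texts(response_body):
    """Extract generated texts; the generated_texts field name is an assumption."""
    return json.loads(response_body)["generated_texts"]


def query_endpoint(endpoint_name, prompt, **generation_params):
    """Send one prompt to a deployed endpoint; needs boto3 and AWS credentials."""
    import boto3

    client = boto3.client("sagemaker-runtime")
    response = client.invoke_endpoint(
        EndpointName=endpoint_name,
        ContentType="application/json",
        Body=build_payload(prompt, **generation_params),
    )
    return parse_generated_texts(response["Body"].read())


# Example call, using the text generation settings from the evaluation section:
# query_endpoint("my-endpoint", "We serve consumers through", max_length=400,
#                num_return_sequences=1, top_k=250, top_p=0.8,
#                do_sample=True, temperature=1)
```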

Access JumpStart through the Studio UI

Another way to fine-tune and deploy JumpStart models is through the Studio UI, which provides a low-code/no-code way to fine-tune LLMs.

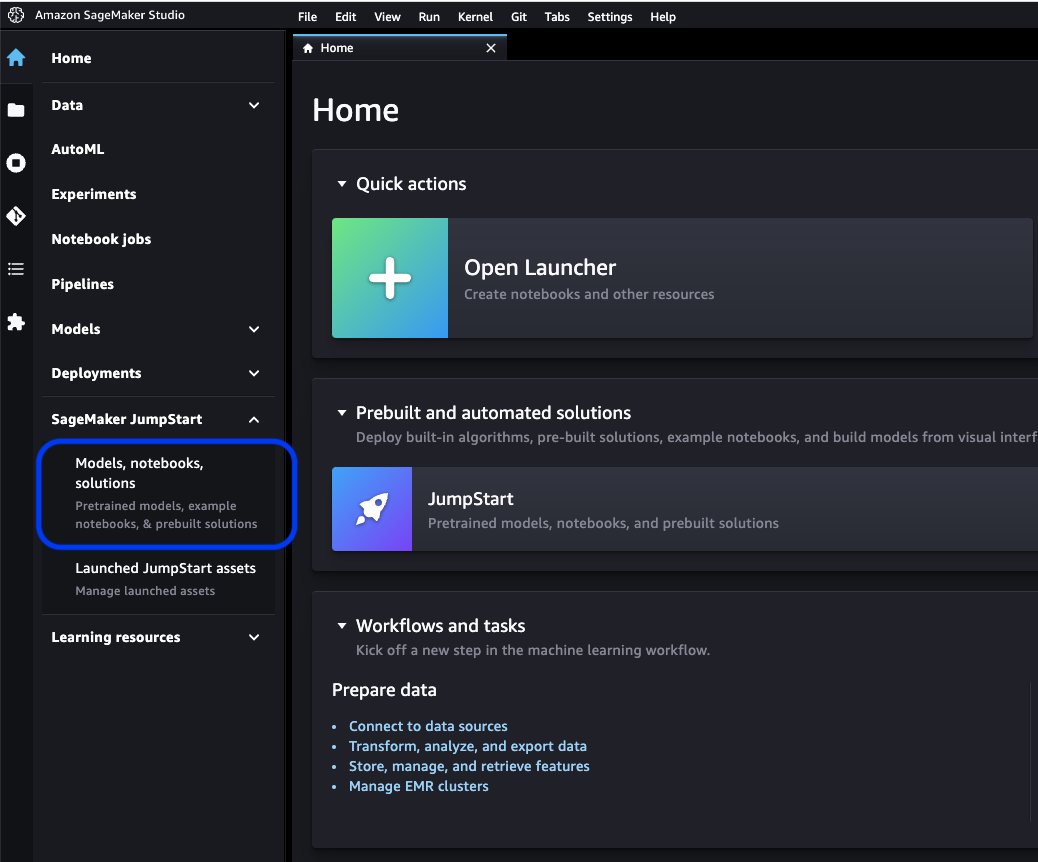

On the Studio console, choose Models, notebooks, solutions under SageMaker JumpStart in the navigation pane.

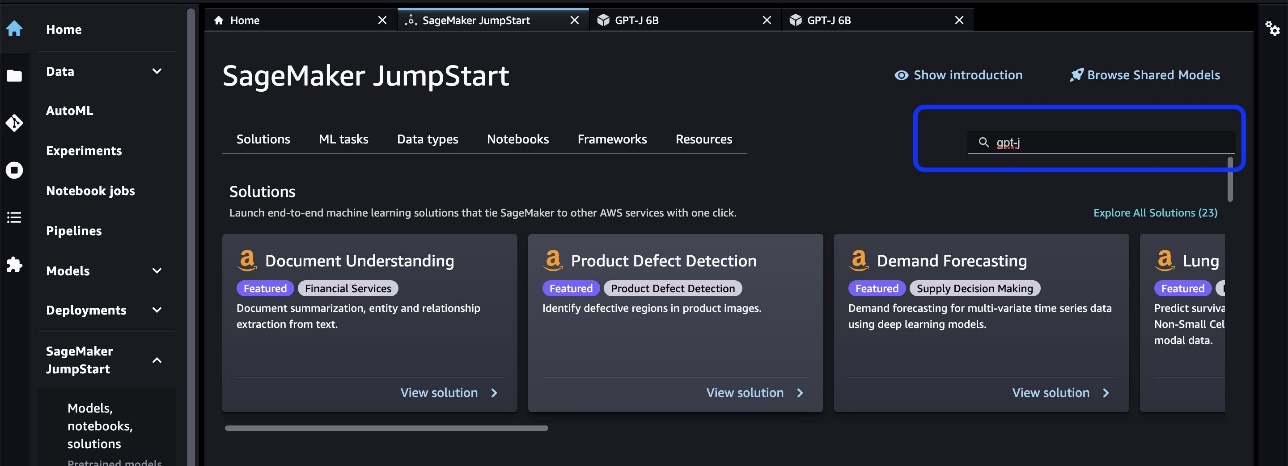

In the search bar, search for the model you want to fine-tune and deploy.

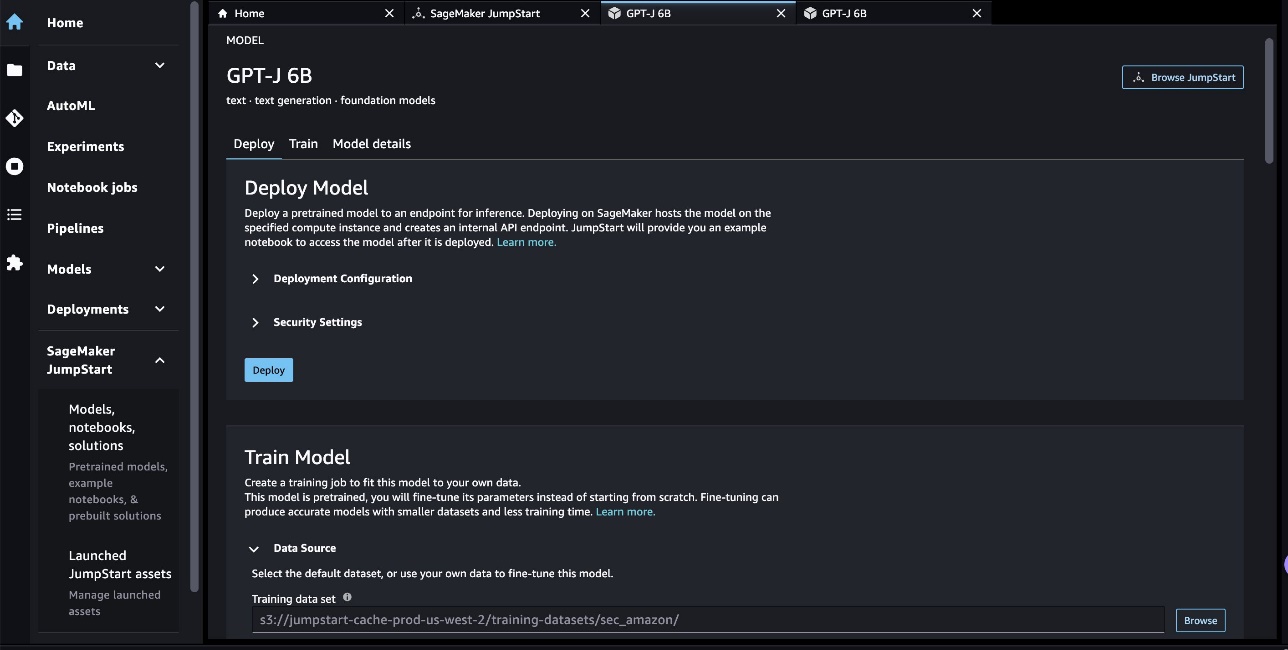

In our case, we chose the GPT-J 6B model card. From here, we can directly fine-tune or deploy the LLM.

Model evaluation

When evaluating an LLM, we can use perplexity (PPL). PPL is a common measure of how well a language model predicts the next word in a sequence. In simpler terms, it measures how well the model predicts real text, which serves as a proxy for how well it models human-like language.

A lower perplexity score means the model is better at predicting the next word. Formally, perplexity is the exponential of the average negative log-likelihood the model assigns to each token. In practical terms, we can use perplexity to compare different language models and determine which one performs better on a given task, provided they are evaluated on the same data with the same tokenization. We can also use it to track the performance of a single model over time. For more details, refer to Perplexity of fixed-length models.
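The definition above translates directly into code. The sketch below computes perplexity from per-token natural-log probabilities, and equivalently from a mean cross-entropy loss in nats. An example of such a loss is the Hugging Face Trainer's eval_loss for a causal language model. The log probabilities in the usage lines are made up for illustration. A model that assigns probability 1/4 to every actual next token has perplexity 4, as if it were choosing uniformly among four words.

```python
import math


def perplexity(token_log_probs):
    """Perplexity from natural-log probabilities of each actual next token."""
    if not token_log_probs:
        raise ValueError("need at least one token")
    mean_nll = -sum(token_log_probs) / len(token_log_probs)  # average negative log-likelihood
    return math.exp(mean_nll)


def perplexity_from_loss(mean_cross_entropy):
    """Same quantity from a mean cross-entropy loss measured in nats."""
    return math.exp(mean_cross_entropy)


print(round(perplexity([math.log(0.25)] * 4), 6))  # 4.0
print(round(perplexity_from_loss(0.0), 6))         # 1.0 (perfect prediction)
```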

We evaluate the model by comparing its performance before and after fine-tuning. PPL is emitted in the training job’s Amazon CloudWatch logs. In addition, we look at the output the model generates in response to specific test prompts.

| Evaluation metric on the validation data | Before fine-tuning | After fine-tuning |
| --- | --- | --- |
| Perplexity (PPL) | 8.147 | 1.437 |

Two experiments demonstrate the model’s improved performance:

- Text generation – We show that the fine-tuned GPT-J generates text that resembles the SEC dataset, indicating that it has learned to emulate the language patterns and conventions of the financial regulatory domain.

- Zero-shot learning – We demonstrate that the fine-tuned GPT-J performs significantly better than the non-fine-tuned GPT-J on zero-shot question answering.

For the text generation experiment, we use the following inference parameters:

- max_length of 400 (the maximum total length in tokens, including the prompt)
- num_return_sequences of 1 (the number of generated responses)
- top_k of 250 (sample only from the 250 most likely next tokens)
- top_p of 0.8 (sample only from the smallest set of most likely tokens whose cumulative probability reaches 80%)
- do_sample set to True (use sampling rather than greedy decoding)
- temperature of 1 (the model’s probabilities are used unscaled; higher values add randomness, lower values reduce it)

For more details about these parameters, refer to GenerationConfig. The following table compares the fine-tuned GPT-J and the non-fine-tuned one on a text completion task.

| Inference example | Output from GPT-J 6B Before Fine-Tuning | Output from GPT-J 6B After Fine-Tuning |
| --- | --- | --- |
| This Form 10-K report shows that | This Form 10-K report shows that: The Companys net income attributable to the Company for the year ended December 31, 2016 was $3,923,000, or $0.21 per diluted share, compared to $3,818,000, or $0.21 per diluted share, for the year ended December 31, 2015. The Companys net income attributable to the Company for the year ended December 31, 2016 was $4,816,000, or $0.28 per diluted share, compared to $4,726,000, or $0.27 per diluted share, for the year ended December 31, 2015. The Companys net income attributable to the Company for the year ended December 31, 2016 was $5,828,000, or $0.34 per diluted share, compared to $5,716,000, or $0.33 per diluted share, for the year ended December 31, 2015. On August 21, 2009, the Company filed a Form 10-Q for the quarter ended December 31, 2008. On August 21, 2009, the Company filed a Form 10-Q for the quarter ended September 30, 2008. On August 21, 2009, the Company filed a Form 10-Q for the quarter ended March 31, 2009. | This Form 10-K report shows that:(1) The registrant’s name, address, and telephone number, are as follows:Amazon.com, Inc. c/o Ernst & Young LLPSeattle, Washington Attention: Company Secretary (2) For the purpose of Article III of the Securities Exchange Act of 1934, the registrant’s name and address are as follows:(3) The registrant’s Exchange Act reportable time period is from and includingJanuary 1, 2020 to the present.(4) The registrant’s subjective evaluation is that the financial statements, and other financial information included in this report, fairlypresent in all material respects the financial condition, results of operations and cashflows of the registrant as of, and for, the periods presented in this report.(5) The registrant’s management’s evaluation is that the financial statements, and other financial information included in this report, fairlypresent in all material respects the financial condition, results of operations and cashflows of the registrant as of, and for, the periods presented in this report./s/ Ernst & Young LLPSeattle, WashingtonJanuary 29, 2020EX-31.1 5 amzn-20201231xex311.htm EX-31.1 DocumentExhibit 31.1CERTIFICATIONSI, Andrew R. Jassy, certify that:1. I have reviewed this Form 10-K of Amazon.com, Inc.;2. Based on my knowledge, this report does not contain any untrue statementof a material fact or omit to state a material fact necessary to make thestatements made, in light of the circumstances under which such statementswere made, not misleading with respect to the period covered by this report; |
| We serve consumers through | We serve consumers through: Our Blog Are you a business owner looking to grow your business? Do you want to create a more effective and efficient business? Do you want to learn how to grow your business? If you answered “yes” to any of these questions, then you need to read this article. The first step to growing your business is to understand your business. You must be able to answer the following questions about your business: Who are your customers? What is your product or service? What is your unique selling proposition? What is your marketing strategy? What is your sales forecast? What is your sales forecast? What is your sales forecast? What is your sales forecast? What is your sales forecast? | We serve consumers through: Amazon.com, a retailer of physical and digital products; Amazon Web Services, which provides technology infrastructure services that enable virtually any type of business; Amazon Business Solutions, which provides companies with custom technology and services to enable better business processes and improve efficiency and productivity; and Audible, a digital audiobook publisher. We serve developers and enterprises of all sizes through AWS, which offers a broad set of global compute, storage, database, and other service offerings. We also manufacture and sell electronic devices. We have organized our operations into two principal segments: North America and International. See Item 8 of Part II, “Financial Statements and Supplementary Data-Note 12-Segment Information.” See Item 7 of Part II, “Management’s Discussion and Analysis of Financial Condition and Results ofOperations-Results of Operations-Supplemental Information” for supplemental informationabout our net sales.ConsumersWe serve consumers through our online and physical stores and focus on selection,price, and convenience. We design our stores to enable hundreds of millions ofunique products to be sold by us and by third parties across dozens of productcategories. Customers access our offerings through our websites, mobile apps,Alexa, and physically visiting our stores. We also manufacture and sell Kindledevices. We strive to offer our customers the lowest prices possible throughlow everyday product pricing and shipping offers, and to improve ouroperating efficiencies so that we can continue to lower prices for ourcustomers. We also provide easy-to-use functionality, fast and reliablefulfillment, and timely customer service.In addition, we offer Amazon Prime, a membership program that includes unlimitedfree shipping on over 100 million items, access to unlimited streaming of tens ofthousands of movies and TV episodes, and other benefits. |
| This year we particularly focus on | This year we particularly focus on: The role of the health care system in the prevention of obesity and diabetes The role of the health care system in the prevention of obesity and diabetes The role of the health care system in the prevention of obesity and diabetes The role of the health care system in the prevention of obesity and diabetes The role of the health care system in the prevention of obesity and diabetes The role of the health care system in the prevention of obesity and diabetes The role of the health care system in the prevention of obesity and diabetes | This year we particularly focus on: -Continued international expansion, including the development of newbusinesses in existing international market segments, as well as thedevelopment of new international market segments; -Expanding our customer base, including increasing sales to existingcustomers and attracting new customers; -Developing new business opportunities and entering into commercialagreements, including through licensing and other arrangements, with othercompanies, or through the acquisition of other companies; -Developing technology that provides for enhanced customer service andefficiently delivers our products and services; -Developing new product and service offerings and incorporating these intoexisting and new product and service offerings; -Developing efficient and effective systems for supporting our business;and -Other business and strategic initiatives. We believe that offering low prices to our customers is fundamental to ourfuture success. One way we offer lower prices is through free-shipping offersthat result in a net cost to us in delivering products, and through membershipin Amazon Prime, which provides free-shipping on millions of items andaccess to movies and other content. We also offer other promotions that enableus to show a lower net cost of sales.We have organized our operations into two principal segments: North Americaand International. See Item 8 of Part II, “Financial Statements andSupplementary Data-Note 12-Segment Information.” See Item 7 of Part II,“Management’s Discussion and Analysis of Financial Condition and Results ofOperations-Results of Operations-Supplemental Information” for supplementalinformation about our net sales.ConsumersWe serve consumers through our retail websites and physical stores and focuson selection, price, and convenience. |
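The top_k and top_p settings used in this experiment can be illustrated on a toy next-token distribution. The sketch keeps the top_k most likely tokens, renormalizes, and then keeps the smallest prefix whose cumulative probability reaches top_p. This follows the top-k then top-p order that Hugging Face transformers applies when sampling. It illustrates the idea and is not the library's implementation. The token probabilities are made up, and the helper names are hypothetical.

```python
import random


def top_k_top_p_filter(probs, top_k, top_p):
    """Filter a {token: probability} dict with top-k, then top-p (nucleus)."""
    ranked = sorted(probs.items(), key=lambda item: item[1], reverse=True)[:top_k]
    total = sum(p for _, p in ranked)
    ranked = [(token, p / total) for token, p in ranked]  # renormalize after top-k

    kept, cumulative = [], 0.0
    for token, p in ranked:
        kept.append((token, p))
        cumulative += p
        if cumulative >= top_p:  # smallest prefix reaching top_p
            break
    total = sum(p for _, p in kept)
    return {token: p / total for token, p in kept}


def sample_next_token(probs, top_k=250, top_p=0.8, rng=random):
    """Sample one token from the filtered, renormalized distribution."""
    filtered = top_k_top_p_filter(probs, top_k, top_p)
    tokens = list(filtered)
    return rng.choices(tokens, weights=[filtered[t] for t in tokens], k=1)[0]


toy = {"sales": 0.5, "growth": 0.3, "the": 0.15, "obesity": 0.05}
print(sorted(top_k_top_p_filter(toy, top_k=3, top_p=0.8)))  # ['growth', 'sales']
```

In this toy case, top-k first drops "obesity". Top-p then stops after "sales" and "growth", because their renormalized probabilities already sum past 0.8.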

For the second experiment, a zero-shot question answering task, we set max_length to a different value for each question (40, 200, and 40, respectively) based on the output we expect. We set top_k, top_p, do_sample, and temperature to 250, 0.8, False, and 0, respectively. Setting temperature to 0 reflects the goal of minimizing randomness, whereas in the text generation task we set it to 1 to make the model more creative. Because do_sample is False, decoding is greedy (the most likely token is chosen at each step), so the output is deterministic. The following table compares the results. For each question, the output from the fine-tuned GPT-J is more accurate than that of the non-fine-tuned one.

| Input | Output from GPT-J 6B Before Fine-Tuning | Output from GPT-J 6B After Fine-Tuning |
| --- | --- | --- |
| What is Item 7 of the 10-K SEC filing about?: | What is Item 7 of the 10-K SEC filing about?: Item 7 of the 10-K SEC filing is the following: “The Company has not received any material adverse | Item 7. Management’s Discussion and Analysis of Financial Condition and Results ofOperations Forward-Looking Statements |
| How did the COVID-19 pandemic impact Amazon’s business?: | How did the COVID-19 pandemic impact Amazon’s business?: Amazon’s business is being impacted by the COVID-19 pandemic in a number of ways. The company is seeing a decline in the number of Prime memberships, which is a big part of its business. The company is also seeing a decline in the number of Prime memberships. Amazon is also seeing a decline in the number of orders placed on its website. The company is also seeing a decline in the number of people who are shopping on its website. Amazon is also seeing a decline in the number of people who are shopping on its website. Amazon is also seeing a decline in the number of people who are shopping on its website. Amazon is also seeing a decline in the number of people who are shopping on its website. Amazon is also seeing a decline in the number of people who are shopping | How did the COVID-19 pandemic impact Amazon’s business?:The COVID-19 pandemic has impacted our business in a number of ways. First, it has disrupted our supply chain, and it has affected customer demand, which in turn has affected our sales and operating results. Second, it has increased our spending on advertising and marketing, which will not be efficient in the long term. Third, it has increased our spending on technology infrastructure and computing capacity, which will not be efficient in the long term. Fourth, it has increased our spending on fulfillment and customer service, which will not be efficient in the long term. Finally, it has increased our spending on content, which will not be efficient in the long term. See Item 8 of Part II, “Financial Statements and Supplementary Data-Note 1-Description of Business and Accounting Policies. |
| What drives sales growth at Amazon?: | Amazon is the world’s largest online retailer. It is also the world’s largest online marketplace. It is also the world’ | Sales growth at Amazon is driven primarily by increased customer usage, including increased selection, lower prices, and increased convenience, and increased sales by other sellers on our websites. |
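Why a temperature near 0 reduces randomness can be seen by scaling toy logits before the softmax. As the temperature shrinks, probability mass concentrates on the largest logit and sampling approaches greedy decoding. A temperature of exactly 0 would divide by zero, so it is treated here as "use greedy decoding", which is what do_sample set to False gives. The logits are made up, and the helper names are hypothetical.

```python
import math


def softmax_with_temperature(logits, temperature):
    """Convert logits to probabilities after dividing by temperature (> 0)."""
    if temperature <= 0:
        raise ValueError("temperature must be > 0; use greedy decoding instead")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def greedy_pick(logits):
    """Greedy decoding (do_sample=False): index of the largest logit."""
    return max(range(len(logits)), key=lambda i: logits[i])


logits = [2.0, 1.0, 0.0]
print([round(p, 3) for p in softmax_with_temperature(logits, 1.0)])  # [0.665, 0.245, 0.09]
print([round(p, 3) for p in softmax_with_temperature(logits, 0.1)])  # [1.0, 0.0, 0.0]
print(greedy_pick(logits))  # 0
```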

Clean up

To avoid ongoing charges, delete the SageMaker inference endpoints. You can delete them on the SageMaker console or from the notebook using the following commands:
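A minimal cleanup sketch follows, assuming you kept the Predictor objects returned by deploy, for example one for the pre-trained model and one for the fine-tuned model. It calls delete_model and delete_endpoint, both methods of sagemaker.predictor.Predictor. The helper name clean_up is hypothetical.

```python
def clean_up(*predictors):
    """Delete the model and endpoint behind each SageMaker Predictor."""
    for predictor in predictors:
        predictor.delete_model()     # removes the SageMaker model resource
        predictor.delete_endpoint()  # removes the endpoint (and its config by default)


# Example: clean_up(pretrained_predictor, finetuned_predictor)
```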

Conclusion

JumpStart is a capability in SageMaker that lets you get started with ML quickly. It uses open-source, pre-trained models to solve common ML problems such as image classification, object detection, text classification, sentence pair classification, and question answering.

In this post, we showed you how to fine-tune and deploy a pre-trained LLM (GPT-J 6B) for text generation on the SEC filing dataset. We demonstrated how fine-tuning on just two of the company’s annual reports turned the model into a finance domain expert. After fine-tuning, the model generated content that reflects an understanding of financial topics, with greater precision. Try out the solution yourself and let us know how it goes in the comments.

Important: This post is for demonstration purposes only. It is not financial advice and should not be relied on as financial or investment advice. The post used models pre-trained on data obtained from the SEC EDGAR database. If you use SEC data, you are responsible for complying with EDGAR’s access terms and conditions.

To learn more about JumpStart, check out the following posts:

About the Authors

Dr. Xin Huang is a Senior Applied Scientist for Amazon SageMaker JumpStart and Amazon SageMaker built-in algorithms. He focuses on developing scalable machine learning algorithms. His research interests are in natural language processing, explainable deep learning on tabular data, and robust analysis of non-parametric space-time clustering. He has published many papers at the ACL, ICDM, and KDD conferences, and in Royal Statistical Society: Series A.

Marc Karp is an ML Architect with the Amazon SageMaker Service team. He focuses on helping customers design, deploy, and manage ML workloads at scale. In his spare time, he enjoys traveling and exploring new places.

Dr. Sanjiv Das is an Amazon Scholar and the Terry Professor of Finance and Data Science at Santa Clara University. He holds post-graduate degrees in Finance (M.Phil and PhD from New York University) and Computer Science (MS from UC Berkeley), and an MBA from the Indian Institute of Management, Ahmedabad. Before becoming an academic, he worked in the derivatives business in the Asia-Pacific region as a Vice President at Citibank. He works on multimodal machine learning for financial applications.

Arun Kumar Lokanatha is a Senior ML Solutions Architect with the Amazon SageMaker Service team. He focuses on helping customers build, train, and migrate ML production workloads to SageMaker at scale. He specializes in deep learning, especially in NLP and CV. Outside of work, he enjoys running and hiking.
