The seeds of a machine learning (ML) paradigm shift have existed for decades, but with the ready availability of scalable compute capacity, a massive proliferation of data, and the rapid advancement of ML technologies, customers across industries are transforming their businesses. Just recently, generative AI applications like ChatGPT have captured widespread attention and imagination. We are truly at an exciting inflection point in the widespread adoption of ML, and we believe most customer experiences and applications will be reinvented with generative AI.

AI and ML have been a focus for Amazon for over 20 years, and many of the capabilities customers use with Amazon are driven by ML. Our e-commerce recommendations engine is driven by ML; the paths that optimize robotic picking routes in our fulfillment centers are driven by ML; and our supply chain, forecasting, and capacity planning are informed by ML. Prime Air (our drones) and the computer vision technology in Amazon Go (our physical retail experience that lets consumers select items off a shelf and leave the store without having to formally check out) use deep learning. Alexa, powered by more than 30 different ML systems, helps customers billions of times each week to manage smart homes, shop, get information and entertainment, and more. We have thousands of engineers at Amazon committed to ML, and it’s a big part of our heritage, current ethos, and future.

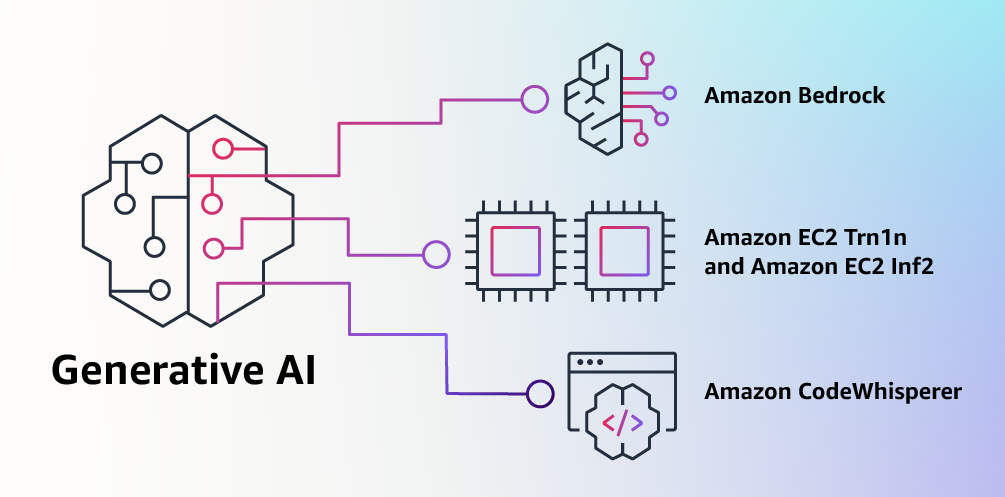

At AWS, we have played a key role in democratizing ML and making it accessible to anyone who wants to use it, including more than 100,000 customers of all sizes and industries. AWS has the broadest and deepest portfolio of AI and ML services at all three layers of the stack. We’ve invested and innovated to offer the most performant, scalable infrastructure for cost-effective ML training and inference; developed Amazon SageMaker, which is the easiest way for all developers to build, train, and deploy models; and launched a wide range of services that allow customers to add AI capabilities like image recognition, forecasting, and intelligent search to applications with a simple API call. This is why customers like Intuit, Thomson Reuters, AstraZeneca, Ferrari, Bundesliga, 3M, and BMW, as well as thousands of startups and government agencies around the world, are transforming themselves, their industries, and their missions with ML. We take the same democratizing approach to generative AI: we work to take these technologies out of the realm of research and experiments and extend their availability far beyond a handful of startups and large, well-funded tech companies. That’s why today I’m excited to announce several new innovations that will make it easy and practical for our customers to use generative AI in their businesses.

Building with Generative AI on AWS

Generative AI and foundation models

Generative AI is a type of AI that can create new content and ideas, including conversations, stories, images, videos, and music. Like all AI, generative AI is powered by ML models: very large models that are pre-trained on vast amounts of data and commonly referred to as Foundation Models (FMs). Recent advancements in ML (specifically the invention of the transformer-based neural network architecture) have led to the rise of models that contain billions of parameters or variables. To give a sense for the change in scale, the largest pre-trained model in 2019 was 330M parameters. Now, the largest models are more than 500B parameters, a 1,600x increase in size in just a few years. Today’s FMs, such as the large language models (LLMs) GPT3.5 or BLOOM, and the text-to-image model Stable Diffusion from Stability AI, can perform a wide range of tasks that span multiple domains, like writing blog posts, generating images, solving math problems, engaging in dialog, and answering questions based on a document. The size and general-purpose nature of FMs make them different from traditional ML models, which typically perform specific tasks, like analyzing text for sentiment, classifying images, and forecasting trends.

FMs can perform so many more tasks because they contain such a large number of parameters, which makes them capable of learning complex concepts. And through their pre-training exposure to internet-scale data in all its various forms and myriad patterns, FMs learn to apply their knowledge within a wide range of contexts. While the capabilities and resulting possibilities of a pre-trained FM are amazing, customers get really excited because these generally capable models can also be customized to perform domain-specific functions that are differentiating to their businesses, using only a small fraction of the data and compute required to train a model from scratch. The customized FMs can create a unique customer experience, embodying the company’s voice, style, and services across a wide variety of consumer industries, like banking, travel, and healthcare. For instance, a financial firm that needs to auto-generate a daily activity report for internal circulation using all the relevant transactions can customize the model with proprietary data, which will include past reports, so that the FM learns how these reports should read and what data was used to generate them.

The potential of FMs is incredibly exciting. But we are still in the very early days. While ChatGPT has been the first broad generative AI experience to catch customers’ attention, most folks studying generative AI have quickly come to realize that several companies have been working on FMs for years, and there are several different FMs available, each with unique strengths and characteristics. As we’ve seen over the years with fast-moving technologies, and in the evolution of ML, things change rapidly. We expect new architectures to arise in the future, and this diversity of FMs will set off a wave of innovation. We’re already seeing new application experiences never seen before. AWS customers have asked us how they can quickly take advantage of what is out there today (and what is likely coming tomorrow) and quickly begin using FMs and generative AI within their businesses and organizations to drive new levels of productivity and transform their offerings.

Announcing Amazon Bedrock and Amazon Titan models, the easiest way to build and scale generative AI applications with FMs

Customers have told us there are a few big things standing in their way today. First, they need a straightforward way to find and access high-performing FMs that give outstanding results and are best-suited for their purposes. Second, customers want integration into applications to be seamless, without having to manage huge clusters of infrastructure or incur large costs. Finally, customers want it to be easy to take the base FM and build differentiated apps using their own data (a little data or a lot). Since the data customers want to use for customization is incredibly valuable IP, they need it to stay completely protected, secure, and private during that process, and they want control over how their data is shared and used.

We took all of that feedback from customers, and today we are excited to announce Amazon Bedrock, a new service that makes FMs from AI21 Labs, Anthropic, Stability AI, and Amazon accessible via an API. Bedrock is the easiest way for customers to build and scale generative AI-based applications using FMs, democratizing access for all builders. Bedrock will offer the ability to access a range of powerful FMs for text and images (including Amazon’s Titan FMs, which consist of two new LLMs we’re also announcing today) through a scalable, reliable, and secure AWS managed service. With Bedrock’s serverless experience, customers can easily find the right model for what they’re trying to get done, get started quickly, privately customize FMs with their own data, and easily integrate and deploy them into their applications using the AWS tools and capabilities they are familiar with (including integrations with Amazon SageMaker ML features like Experiments to test different models and Pipelines to manage their FMs at scale) without having to manage any infrastructure.

Bedrock customers can choose from some of the most cutting-edge FMs available today. This includes the Jurassic-2 family of multilingual LLMs from AI21 Labs, which follow natural language instructions to generate text in Spanish, French, German, Portuguese, Italian, and Dutch. Claude, Anthropic’s LLM, can perform a wide variety of conversational and text processing tasks and is based on Anthropic’s extensive research into training honest and responsible AI systems. Bedrock also makes it easy to access Stability AI’s suite of text-to-image foundation models, including Stable Diffusion (the most popular of its kind), which is capable of generating unique, realistic, high-quality images, art, logos, and designs.

One of the most important capabilities of Bedrock is how easy it is to customize a model. Customers simply point Bedrock at a few labeled examples in Amazon S3, and the service can fine-tune the model for a particular task without having to annotate large volumes of data (as few as 20 examples are enough). Imagine a content marketing manager who works at a leading fashion retailer and needs to develop fresh, targeted ad and campaign copy for an upcoming new line of handbags. To do this, they provide Bedrock a few labeled examples of their best performing taglines from past campaigns, along with the associated product descriptions, and Bedrock will automatically start generating effective social media, display ad, and web copy for the new handbags. None of the customer’s data is used to train the underlying models, and since all data is encrypted and does not leave a customer’s Virtual Private Cloud (VPC), customers can trust that their data will remain private and confidential.

Bedrock is now in limited preview, and customers like Coda are excited about how fast their development teams have gotten up and running. Shishir Mehrotra, Co-founder and CEO of Coda, says, “As a longtime happy AWS customer, we’re excited about how Amazon Bedrock can bring quality, scalability, and performance to Coda AI. Since all our data is already on AWS, we are able to quickly incorporate generative AI using Bedrock, with all the security and privacy we need to protect our data built-in. With over tens of thousands of teams running on Coda, including large teams like Uber, the New York Times, and Square, reliability and scalability are really important.”

We have been previewing Amazon’s new Titan FMs with a few customers before we make them available more broadly in the coming months. We’ll initially have two Titan models. The first is a generative LLM for tasks such as summarization, text generation (for example, creating a blog post), classification, open-ended Q&A, and information extraction. The second is an embeddings LLM that translates text inputs (words, phrases, or possibly large units of text) into numerical representations (known as embeddings) that contain the semantic meaning of the text. While this LLM will not generate text, it is useful for applications like personalization and search because, by comparing embeddings, the model will produce more relevant and contextual responses than word matching. In fact, Amazon.com’s product search capability uses a similar embeddings model among others to help customers find the products they’re looking for. To continue supporting best practices in the responsible use of AI, Titan FMs are built to detect and remove harmful content in the data, reject inappropriate content in the user input, and filter the models’ outputs that contain inappropriate content (such as hate speech, profanity, and violence).
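To make "comparing embeddings" concrete, here is a minimal sketch of ranking catalog items against a query by cosine similarity. It is not how Titan or Amazon.com search is implemented. The three-dimensional vectors, the item names, and the helper functions `cosine_similarity` and `rank_by_similarity` are all hypothetical and chosen only for illustration; real embeddings come from a model and have far more dimensions.

```python
import math


def cosine_similarity(a, b):
    """Return the cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # a zero vector has no direction, so treat it as unrelated
    return dot / (norm_a * norm_b)


def rank_by_similarity(query_vec, catalog):
    """Sort catalog items from most to least similar to the query vector."""
    scored = [(name, cosine_similarity(query_vec, vec))
              for name, vec in catalog.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


# Hypothetical toy embeddings, assumed for illustration only.
CATALOG = {
    "leather handbag": [0.9, 0.1, 0.0],
    "canvas tote bag": [0.8, 0.3, 0.1],
    "running shoes": [0.1, 0.9, 0.2],
}
QUERY = [0.9, 0.15, 0.0]  # stand-in for the embedding of the word "purse"

ranking = rank_by_similarity(QUERY, CATALOG)
for name, score in ranking:
    print(f"{name}: {score:.3f}")
```

The query shares no words with "leather handbag", yet that item ranks first because its vector points in nearly the same direction. That is the sense in which embedding comparison can beat word matching.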

Bedrock makes the power of FMs accessible to companies of all sizes so that they can accelerate the use of ML across their organizations and build their own generative AI applications, because it will be easy for all developers. We think Bedrock will be a massive step forward in democratizing FMs, and our partners like Accenture, Deloitte, Infosys, and Slalom are building practices to help enterprises go faster with generative AI. Independent Software Vendors (ISVs) like C3 AI and Pega are excited to leverage Bedrock for easy access to its great selection of FMs with all of the security, privacy, and reliability they expect from AWS.

Announcing the general availability of Amazon EC2 Trn1n instances powered by AWS Trainium and Amazon EC2 Inf2 instances powered by AWS Inferentia2, the most cost-effective cloud infrastructure for generative AI

Whatever customers are trying to do with FMs (running them, building them, customizing them), they need the most performant, cost-effective infrastructure that is purpose-built for ML. Over the last five years, AWS has been investing in our own silicon to push the envelope on performance and price performance for demanding workloads like ML training and inference, and our AWS Trainium and AWS Inferentia chips offer the lowest cost for training models and running inference in the cloud. This ability to maximize performance and control costs by choosing the optimal ML infrastructure is why leading AI startups, like AI21 Labs, Anthropic, Cohere, Grammarly, Hugging Face, Runway, and Stability AI, run on AWS.

Trn1 instances, powered by Trainium, can deliver up to 50% savings on training costs over any other EC2 instance, and are optimized to distribute training across multiple servers connected with 800 Gbps of second-generation Elastic Fabric Adapter (EFA) networking. Customers can deploy Trn1 instances in UltraClusters that can scale up to 30,000 Trainium chips (more than 6 exaflops of compute) located in the same AWS Availability Zone with petabit scale networking. Many AWS customers, including Helixon, Money Forward, and the Amazon Search team, use Trn1 instances to help reduce the time required to train the largest-scale deep learning models from months to weeks or even days while lowering their costs. 800 Gbps is a lot of bandwidth, but we have continued to innovate to deliver more, and today we are announcing the general availability of new, network-optimized Trn1n instances, which offer 1600 Gbps of network bandwidth and are designed to deliver 20% higher performance over Trn1 for large, network-intensive models.

Today, most of the time and money spent on FMs goes into training them. This is because many customers are only just starting to deploy FMs into production. However, in the future, when FMs are deployed at scale, most costs will be associated with running the models and doing inference. While you typically train a model periodically, a production application can be constantly generating predictions, known as inferences, potentially generating millions per hour. And these predictions need to happen in real-time, which requires very low-latency and high-throughput networking. Alexa is a great example with millions of requests coming in every minute, which accounts for 40% of all compute costs.

Because we knew that most of the future ML costs would come from running inferences, we prioritized inference-optimized silicon when we started investing in new chips a few years ago. In 2018, we announced Inferentia, the first purpose-built chip for inference. Every year, Inferentia helps Amazon run trillions of inferences and has saved companies like Amazon over a hundred million dollars in capital expense already. The results are impressive, and we see many opportunities to keep innovating as workloads will only increase in size and complexity as more customers integrate generative AI into their applications.

That’s why we’re announcing today the general availability of Inf2 instances powered by AWS Inferentia2, which are optimized specifically for large-scale generative AI applications with models containing hundreds of billions of parameters. Inf2 instances deliver up to 4x higher throughput and up to 10x lower latency compared to the prior generation Inferentia-based instances. They also have ultra-high-speed connectivity between accelerators to support large-scale distributed inference. These capabilities drive up to 40% better inference price performance than other comparable Amazon EC2 instances and the lowest cost for inference in the cloud. Customers like Runway are seeing up to 2x higher throughput with Inf2 than comparable Amazon EC2 instances for some of their models. This high-performance, low-cost inference will enable Runway to introduce more features, deploy more complex models, and ultimately deliver a better experience for the millions of creators using Runway.

Announcing the general availability of Amazon CodeWhisperer, free for individual developers

We know that building with the right FMs and running generative AI applications at scale on the most performant cloud infrastructure will be transformative for customers. The new wave of experiences will also be transformative for users. With generative AI built-in, users will be able to have more natural and seamless interactions with applications and systems. Think of how we can unlock our mobile phones just by looking at them, without needing to know anything about the powerful ML models that make this feature possible.

One area where we foresee the use of generative AI growing rapidly is in coding. Software developers today spend a significant amount of their time writing code that is pretty straightforward and undifferentiated. They also spend a lot of time trying to keep up with a complex and ever-changing tool and technology landscape. All of this leaves developers less time to develop new, innovative capabilities and services. Developers try to overcome this by copying and modifying code snippets from the web, which can result in inadvertently copying code that doesn’t work, contains security vulnerabilities, or doesn’t track usage of open source software. And, ultimately, searching and copying still takes time away from the good stuff.

Generative AI can take this heavy lifting out of the equation by “writing” much of the undifferentiated code, allowing developers to build faster while freeing them up to focus on the more creative aspects of coding. This is why, last year, we announced the preview of Amazon CodeWhisperer, an AI coding companion that uses an FM under the hood to radically improve developer productivity by generating code suggestions in real-time based on developers’ comments in natural language and prior code in their Integrated Development Environment (IDE). Developers can simply tell CodeWhisperer to do a task, such as “parse a CSV string of songs” and ask it to return a structured list based on values such as artist, title, and highest chart rank. CodeWhisperer provides a productivity boost by generating an entire function that parses the string and returns the list as specified. Developer response to the preview has been overwhelmingly positive, and we continue to believe that helping developers code could end up being one of the most powerful uses of generative AI we’ll see in the coming years. During the preview, we ran a productivity challenge, and participants who used CodeWhisperer completed tasks 57% faster, on average, and were 27% more likely to complete them successfully than those who didn’t use CodeWhisperer. This is a giant leap forward in developer productivity, and we believe this is only the beginning.
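For a sense of what the “parse a CSV string of songs” task involves, here is a hand-written sketch of the kind of function a developer might end up with. It is not actual CodeWhisperer output. The column names (`artist`, `title`, `highest_chart_rank`), the function name `parse_songs`, and the sample rows are assumptions made for illustration.

```python
import csv
import io


def parse_songs(csv_text):
    """Parse a CSV string of songs into a list of dictionaries.

    Assumes a header row with the hypothetical columns
    artist, title, and highest_chart_rank.
    """
    reader = csv.DictReader(io.StringIO(csv_text.strip()))
    songs = []
    for row in reader:
        songs.append({
            "artist": row["artist"].strip(),
            "title": row["title"].strip(),
            # Chart rank is stored as an integer so it can be sorted or compared.
            "highest_chart_rank": int(row["highest_chart_rank"]),
        })
    return songs


# Made-up sample data; the quoted title shows that embedded commas are handled.
SAMPLE = """artist,title,highest_chart_rank
Example Artist A,First Song,3
Example Artist B,"Second Song, Pt. 2",12
"""

songs = parse_songs(SAMPLE)
for song in songs:
    print(song)
```

Using the standard library’s `csv` module rather than splitting on commas is a deliberate choice: it handles quoted fields correctly, which is easy to get wrong in boilerplate written by hand.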

Today, we’re excited to announce the general availability of Amazon CodeWhisperer for Python, Java, JavaScript, TypeScript, and C#, plus ten new languages, including Go, Kotlin, Rust, PHP, and SQL. CodeWhisperer can be accessed from IDEs such as VS Code, IntelliJ IDEA, AWS Cloud9, and many more via the AWS Toolkit IDE extensions. CodeWhisperer is also available in the AWS Lambda console. In addition to learning from the billions of lines of publicly available code, CodeWhisperer has been trained on Amazon code. We believe CodeWhisperer is now the most accurate, fastest, and most secure way to generate code for AWS services, including Amazon EC2, AWS Lambda, and Amazon S3.

Developers aren’t truly going to be more productive if code suggested by their generative AI tool contains hidden security vulnerabilities or fails to handle open source responsibly. CodeWhisperer is the only AI coding companion with built-in security scanning (powered by automated reasoning) for finding and suggesting remediations for hard-to-detect vulnerabilities, such as those in the Open Worldwide Application Security Project (OWASP) top ten, those that don’t meet crypto library best practices, and others. To help developers code responsibly, CodeWhisperer filters out code suggestions that might be considered biased or unfair, and CodeWhisperer is the only coding companion that can filter and flag code suggestions that resemble open source code that customers may want to reference or license for use.

We know generative AI is going to change the game for developers, and we want it to be useful to as many as possible. This is why CodeWhisperer is free for all individual users with no qualifications or time limits for generating code! Anyone can sign up for CodeWhisperer with just an email account and become more productive within minutes. You don’t even have to have an AWS account. For business users, we’re offering a CodeWhisperer Professional Tier that includes administration features like single sign-on (SSO) with AWS Identity and Access Management (IAM) integration, as well as higher limits on security scanning.

Building powerful applications like CodeWhisperer is transformative for developers and all our customers. We have a lot more coming, and we are excited about what you will build with generative AI on AWS. Our mission is to make it possible for developers of all skill levels and for organizations of all sizes to innovate using generative AI. This is just the beginning of what we believe will be the next wave of ML powering new possibilities for you.

Resources

Check out the following resources to learn more about generative AI on AWS and these announcements:

About the author

Swami Sivasubramanian is Vice President of Data and Machine Learning at AWS. In this role, Swami oversees all AWS Database, Analytics, and AI & Machine Learning services. His team’s mission is to help organizations put their data to work with a complete, end-to-end data solution to store, access, analyze, visualize, and predict.
