This is a joint post co-written by Leidos and AWS. Leidos is a FORTUNE 500 science and technology solutions leader working to address some of the world’s toughest challenges in the defense, intelligence, homeland security, civil, and healthcare markets.

Leidos has partnered with AWS to develop an approach to privacy-preserving, confidential machine learning (ML) modeling where you build cloud-enabled, encrypted pipelines.

Homomorphic encryption is a new approach to encryption that allows computations and analytical functions to be run on encrypted data, without first having to decrypt it, in order to preserve privacy in cases where you have a policy that states data should never be decrypted. Fully homomorphic encryption (FHE) is the strongest notion of this type of approach, and it allows you to unlock the value of your data where zero-trust is key. The core requirement is that the data can be represented with numbers through an encoding technique, which can be applied to numerical, textual, and image-based datasets. Data encrypted with FHE is larger in size, so testing must be done for applications that need the inference to be performed in near-real time or with size limitations. It’s also important to phrase all computations as linear equations.

In this post, we show how to activate privacy-preserving ML predictions for the most highly regulated environments. The predictions (inference) use encrypted data, and the results are only decrypted by the end consumer (client side).

To demonstrate this, we show an example of customizing an Amazon SageMaker Scikit-learn, open sourced, deep learning container to enable a deployed endpoint to accept client-side encrypted inference requests. Although this example shows how to perform this for inference operations, you can extend the solution to training and other ML steps.

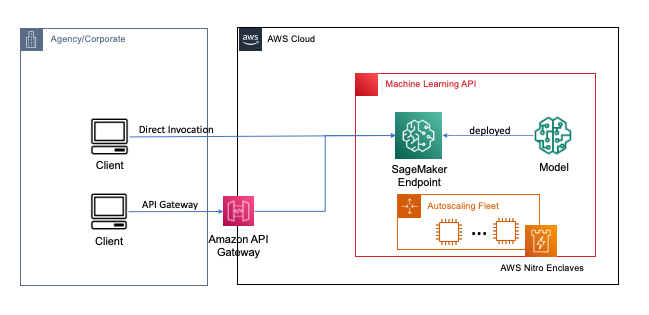

Endpoints are deployed with a couple of clicks or lines of code using SageMaker, which simplifies the process for developers and ML experts to build and train ML and deep learning models in the cloud. Models built using SageMaker can then be deployed as real-time endpoints, which is critical for inference workloads where you have real-time, steady-state, low-latency requirements. Applications and services can call the deployed endpoint directly or through a deployed serverless Amazon API Gateway architecture. To learn more about real-time endpoint architectural best practices, refer to Creating a machine learning-powered REST API with Amazon API Gateway mapping templates and Amazon SageMaker. The following figure shows both versions of these patterns.

In both of these patterns, encryption in transit provides confidentiality as the data flows through the services to perform the inference operation. When received by the SageMaker endpoint, the data is generally decrypted to perform the inference operation at runtime, and is inaccessible to any external code and processes. To achieve additional levels of protection, FHE enables the inference operation to generate encrypted results, which can be decrypted only by a trusted application or client.

More on fully homomorphic encryption

FHE enables systems to perform computations on encrypted data. The resulting computations, when decrypted, are controllably close to those produced without the encryption process. FHE can result in a small mathematical imprecision, similar to a floating point error, due to noise injected into the computation. This imprecision is controlled by selecting appropriate FHE encryption parameters, which are tuned for each problem. For more information, check out the video How would you explain homomorphic encryption?
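As a loose analogy for how a tuned parameter controls this imprecision, the following toy sketch uses plain fixed-point encoding with a hypothetical `scale` parameter. It performs no encryption and is not how CKKS, Pyfhel, or SEAL work internally; the function names are ours. It only illustrates that a larger scale yields a smaller approximation error.

```python
def encode(value: float, scale: int) -> int:
    """Fixed-point encode: keep round(value * scale) as an integer."""
    return round(value * scale)


def approx_product(a: float, b: float, scale: int) -> float:
    """Multiply two encoded values, then decode.

    The integer product carries a factor of scale**2, so we divide it out.
    """
    return (encode(a, scale) * encode(b, scale)) / (scale * scale)


def product_error(a: float, b: float, scale: int) -> float:
    """Absolute difference between the approximate and exact products."""
    return abs(approx_product(a, b, scale) - a * b)


if __name__ == "__main__":
    # Larger scales (more bits of precision) shrink the error.
    for bits in (10, 20, 30):
        print(bits, product_error(3.14159, 2.71828, 2 ** bits))
```

In a real FHE scheme, the precision parameters also affect ciphertext size and compute cost, which is why they are tuned for each problem.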

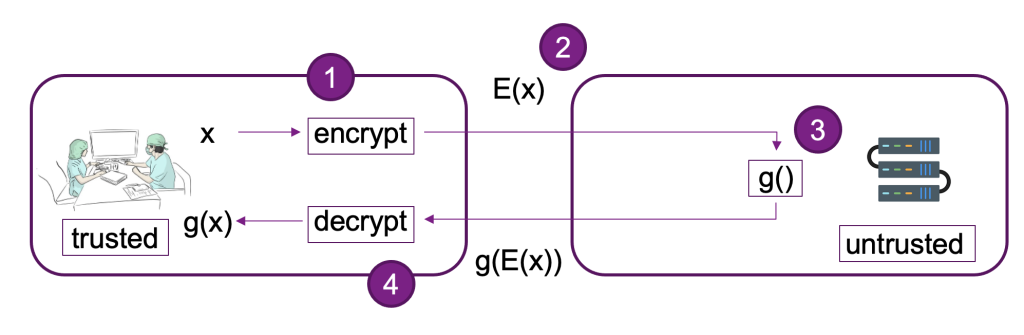

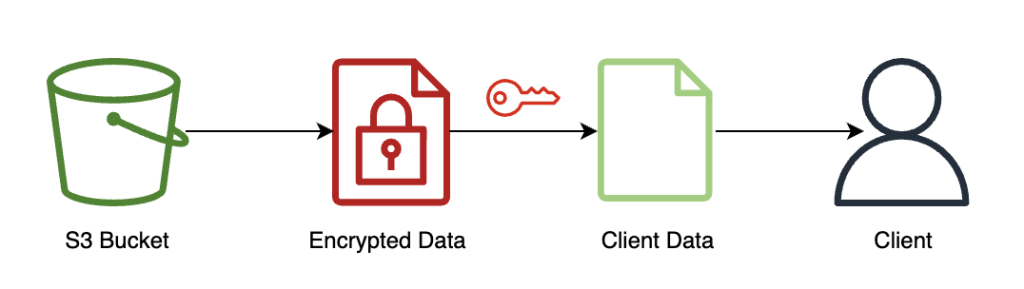

The following diagram provides an example implementation of an FHE system.

In this system, you or your trusted client can do the following:

- Encrypt the data using a public key FHE scheme. There are a couple of different acceptable schemes; in this example, we’re using the CKKS scheme. To learn more about the FHE public key encryption process we chose, refer to CKKS explained.

- Send client-side encrypted data to a provider or server for processing.

- Perform model inference on encrypted data; with FHE, no decryption is required.

- Receive the encrypted results and decrypt them to reveal your result, using a private key that’s only available to you or your trusted users within the client.
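The four steps above can be sketched end to end with a toy example. To keep it self-contained and runnable with only the standard library, this sketch swaps in the Paillier cryptosystem, which is additively homomorphic only. It is not CKKS, not fully homomorphic, and not the Pyfhel/SEAL stack used in this post. The key size is deliberately tiny and insecure, and the weights, bias, and `SCALE` are hypothetical values chosen for illustration.

```python
import math
import secrets

# Toy primes (two Mersenne primes). Far too small to be secure.
P = 2**31 - 1
Q = 2**61 - 1
SCALE = 1000  # hypothetical fixed-point scale for features and weights


def keygen():
    """Return (public modulus n, private key) for a toy Paillier instance."""
    n = P * Q
    lam = (P - 1) * (Q - 1) // math.gcd(P - 1, Q - 1)
    mu = pow(lam, -1, n)  # valid because the generator is g = n + 1
    return n, (lam, mu)


def encrypt(n: int, message: int) -> int:
    """Step 1 (client): encrypt an integer; negatives wrap modulo n."""
    n2 = n * n
    r = secrets.randbelow(n - 1) + 1
    # With g = n + 1, g**m mod n**2 equals 1 + m*n.
    return ((1 + (message % n) * n) * pow(r, n, n2)) % n2


def decrypt(n: int, private_key, ciphertext: int) -> int:
    """Step 4 (client): decrypt and map back to a signed integer."""
    lam, mu = private_key
    m = (((pow(ciphertext, lam, n * n) - 1) // n) * mu) % n
    return m - n if m > n // 2 else m


def encrypted_linear_score(n, enc_features, weights, bias):
    """Step 3 (server): compute w.x + b using only the public modulus."""
    n2 = n * n
    acc = encrypt(n, bias)
    for c, w in zip(enc_features, weights):
        acc = (acc * pow(c, w % n, n2)) % n2  # adds w * x under encryption
    return acc


def run_demo() -> float:
    n, private_key = keygen()
    features = [5.1, 3.5, 1.4, 0.2]   # one Iris-like sample
    weights = [0.4, -0.3, 1.2, 0.7]   # hypothetical model weights
    bias = 0.1                        # hypothetical bias
    enc = [encrypt(n, round(x * SCALE)) for x in features]  # steps 1 and 2
    fixed_w = [round(w * SCALE) for w in weights]
    fixed_b = round(bias * SCALE * SCALE)
    enc_score = encrypted_linear_score(n, enc, fixed_w, fixed_b)
    return decrypt(n, private_key, enc_score) / (SCALE * SCALE)
```

The server-side function never receives the private key, so it can produce an encrypted score but cannot read the features or the result.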

We’ve used the preceding architecture to set up an example using SageMaker endpoints, Pyfhel as an FHE API wrapper simplifying the integration with ML applications, and SEAL as our underlying FHE encryption toolkit.

Solution overview

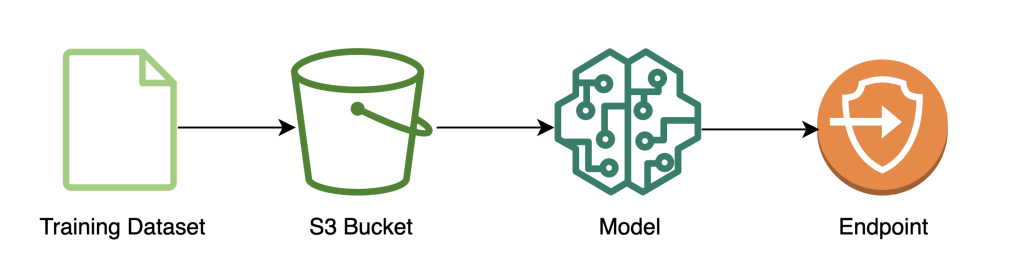

We’ve built out an example of a scalable FHE pipeline in AWS using an SKLearn logistic regression deep learning container with the Iris dataset. We perform data exploration and feature engineering using a SageMaker notebook, and then perform model training using a SageMaker training job. The resulting model is deployed to a SageMaker real-time endpoint for use by client services, as shown in the following diagram.

In this architecture, only the client application sees unencrypted data. The data processed through the model for inferencing remains encrypted throughout its lifecycle, even at runtime within the processor in the isolated AWS Nitro Enclave. In the following sections, we walk through the code to build this pipeline.

Prerequisites

To follow along, we assume you have launched a SageMaker notebook with an AWS Identity and Access Management (IAM) role with the AmazonSageMakerFullAccess managed policy.

Train the model

The following diagram illustrates the model training workflow.

The following code shows how we first prepare the data for training using SageMaker notebooks by pulling in our training dataset, performing the necessary cleaning operations, and then uploading the data to an Amazon Simple Storage Service (Amazon S3) bucket. At this stage, you may also need to do additional feature engineering of your dataset or integrate with different offline feature stores.

In this example, we’re using script mode on a natively supported framework within SageMaker (scikit-learn), where we instantiate our default SageMaker SKLearn estimator with a custom training script to handle the encrypted data during inference. To see more information about natively supported frameworks and script mode, refer to Use Machine Learning Frameworks, Python, and R with Amazon SageMaker.

Finally, we train our model on the dataset and deploy our trained model to the instance type of our choice.

At this point, we’ve trained a custom SKLearn FHE model and deployed it to a SageMaker real-time inference endpoint that’s ready to accept encrypted data.

Encrypt and send client data

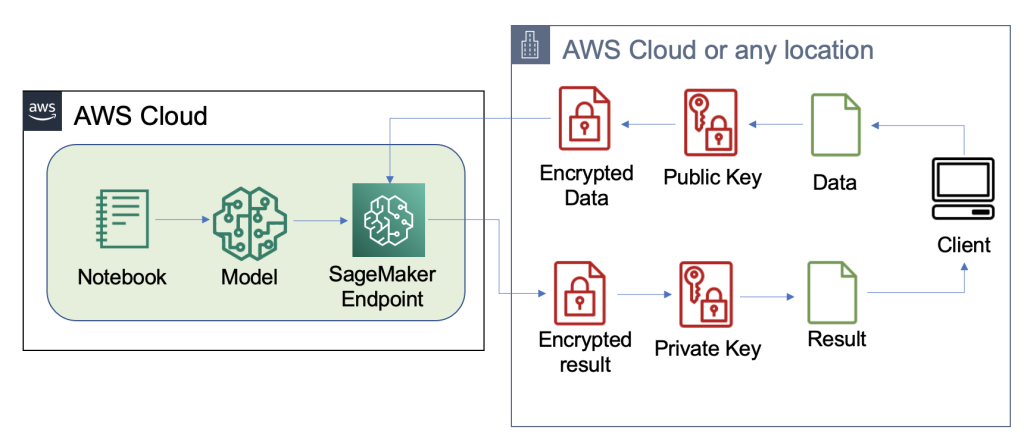

The following diagram illustrates the workflow of encrypting and sending client data to the model.

Usually, the payload of the call to the inference endpoint contains the encrypted data, rather than the data being stored in Amazon S3 first. We use Amazon S3 in this example because we’ve batched a large number of records into a single inference call. In practice, this batch size will be smaller or batch transform will be used instead. Using Amazon S3 as an intermediary isn’t required for FHE.

Now that the inference endpoint has been set up, we can start sending data over. We normally use different test and training datasets, but for this example we use the same training dataset.

First, we load the Iris dataset on the client side. Next, we set up the FHE context using Pyfhel. We selected Pyfhel for this process because it’s simple to install and work with, includes popular FHE schemes, and relies on the trusted underlying open-source encryption implementation SEAL. In this example, we send the encrypted data, along with public key information for this FHE scheme, to the server. This enables the endpoint to encrypt the result on its side with the necessary FHE parameters, but doesn’t give it the ability to decrypt the incoming data. The private key stays only with the client, which has the ability to decrypt the results.

After we encrypt our data, we put together a complete data dictionary, including the relevant keys and encrypted data, to be stored on Amazon S3. Afterwards, the model makes its predictions over the encrypted data from the client, as shown in the following code. Notice we don’t transmit the private key, so the model host isn’t able to decrypt the data. In this example, we’re passing the data through as an S3 object; alternatively, that data may be sent directly to the SageMaker endpoint. As a real-time endpoint, the payload contains the data parameter in the body of the request, which is described in the SageMaker documentation.
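As a rough sketch of what such a data dictionary might look like, the following snippet packs serialized FHE material into a JSON payload. The field names (`context`, `public_key`, `data`) and both helper functions are hypothetical, and the byte strings stand in for whatever your FHE library produces when it serializes its context, public key, and ciphertexts. The private key is intentionally absent.

```python
import base64
import json


def build_payload(context: bytes, public_key: bytes, ciphertexts: list) -> str:
    """Client side: bundle public FHE material and encrypted rows as JSON.

    Binary blobs are base64-encoded so they survive JSON transport.
    The private key is never included.
    """
    def b64(blob: bytes) -> str:
        return base64.b64encode(blob).decode("ascii")

    return json.dumps({
        "context": b64(context),        # hypothetical field name
        "public_key": b64(public_key),  # hypothetical field name
        "data": [b64(c) for c in ciphertexts],
    })


def parse_payload(body: str) -> dict:
    """Server side: recover the raw bytes from the JSON payload."""
    raw = json.loads(body)
    return {
        "context": base64.b64decode(raw["context"]),
        "public_key": base64.b64decode(raw["public_key"]),
        "data": [base64.b64decode(c) for c in raw["data"]],
    }
```

The same string could be written to an S3 object or sent as the body of a real-time endpoint request.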

The following screenshot shows the central prediction within fhe_train.py (the appendix shows the entire training script).

We’re computing the results of our encrypted logistic regression. This code computes an encrypted scalar product for each possible class and returns the results to the client. The results are the predicted logits for each class across all examples.
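To make the shape of that computation concrete, here is a minimal plaintext sketch (no encryption) of per-class logits built only from multiplications and additions, which is the kind of linear computation that can be carried out over ciphertexts. The weights, biases, and function names are hypothetical and are not taken from fhe_train.py. The non-linear step, picking the largest logit, is left to the client after decryption.

```python
def class_logits(features, weights, biases):
    """One scalar product per class: logit_k = w_k . x + b_k.

    Uses only + and *, so the same arithmetic can in principle be
    evaluated on encrypted features.
    """
    return [
        sum(w * x for w, x in zip(row, features)) + b
        for row, b in zip(weights, biases)
    ]


def predict_class(logits):
    """Client side, after decryption: index of the largest logit."""
    return max(range(len(logits)), key=lambda k: logits[k])


if __name__ == "__main__":
    x = [5.1, 3.5, 1.4, 0.2]       # one Iris-like sample
    W = [[1.0, 0.0, 0.0, 0.0],     # hypothetical weights, 3 classes
         [0.0, 1.0, 0.0, 0.0],
         [0.0, 0.0, 1.0, 0.0]]
    b = [0.0, 0.0, 0.0]
    print(predict_class(class_logits(x, W, b)))
```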

Client returns decrypted results

The following diagram illustrates the workflow of the client retrieving their encrypted result and decrypting it (with the private key that only they have access to) to reveal the inference result.

In this example, results are stored on Amazon S3, but generally they would be returned through the payload of the real-time endpoint. Using Amazon S3 as an intermediary isn’t required for FHE.

The inference result will be controllably close to the result the client would have computed themselves without using FHE.

Clean up

We end this process by deleting the endpoint we created, to make sure there isn’t any unused compute after this process.
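One way to script this cleanup is sketched below. The helper name and its parameters are ours; it assumes you pass in a boto3 SageMaker client and relies on that client's `delete_endpoint` and `delete_endpoint_config` operations. The endpoint name in the usage comment is a placeholder.

```python
def delete_endpoint_resources(sagemaker_client, endpoint_name,
                              endpoint_config_name=None):
    """Delete a real-time endpoint and, optionally, its endpoint config.

    `sagemaker_client` is expected to behave like boto3.client("sagemaker").
    """
    sagemaker_client.delete_endpoint(EndpointName=endpoint_name)
    if endpoint_config_name is not None:
        sagemaker_client.delete_endpoint_config(
            EndpointConfigName=endpoint_config_name
        )


# Example usage (requires AWS credentials; the name is a placeholder):
#   import boto3
#   delete_endpoint_resources(boto3.client("sagemaker"), "my-fhe-endpoint")
```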

Results and considerations

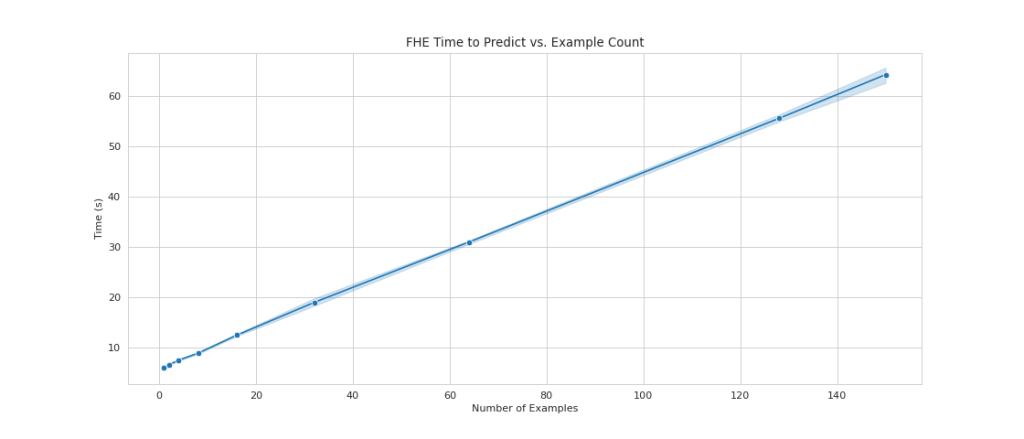

One of the common drawbacks of using FHE on top of models is that it adds computational overhead, which, in practice, can make the resulting model too slow for interactive use cases. In cases where the data is highly sensitive, however, it might be worthwhile to accept this latency trade-off. For our simple logistic regression, we’re able to process 140 input data samples within 60 seconds and see linear performance. The following chart includes the total end-to-end time, including the time taken by the client to encrypt the input and decrypt the results. It also uses Amazon S3, which adds latency and isn’t required for these cases.

We see linear scaling as we increase the number of examples from 1 to 150. This is expected because each example is encrypted independently of the others, so we expect a linear increase in computation, with a fixed setup cost.

This also means that you can scale your inference fleet horizontally for greater request throughput behind your SageMaker endpoint. You can use Amazon SageMaker Inference Recommender to cost optimize your fleet depending on your business needs.

Conclusion

And there you have it: fully homomorphic encryption ML for a SKLearn logistic regression model that you can set up with a few lines of code. With some customization, you can implement this same encryption process for different model types and frameworks, independent of the training data.

If you’d like to learn more about building an ML solution that uses homomorphic encryption, reach out to your AWS account team or partner, Leidos. You can also refer to the following resources for more examples:

The content and opinions in this post include those of third-party authors, and AWS is not responsible for the content or accuracy of this post.

Appendix

The full training script is as follows:

About the Authors

Liv d’Aliberti is a researcher within the Leidos AI/ML Accelerator under the Office of Technology. Their research focuses on privacy-preserving machine learning.

Manbir Gulati is a researcher within the Leidos AI/ML Accelerator under the Office of Technology. His research focuses on the intersection of cybersecurity and emerging AI threats.

Joe Kovba is a Cloud Center of Excellence Practice Lead within the Leidos Digital Modernization Accelerator under the Office of Technology. In his free time, he enjoys refereeing soccer games and playing softball.

Ben Snively is a Public Sector Specialist Solutions Architect. He works with government, non-profit, and education customers on big data and analytical projects, helping them build solutions using AWS. In his spare time, he adds IoT sensors throughout his house and runs analytics on them.

Sami Hoda is a Senior Solutions Architect in the Partners Consulting division covering the Worldwide Public Sector. Sami is passionate about projects where equal parts design thinking, innovation, and emotional intelligence can be used to solve problems for and impact people in need.